June 30, 2021

This blog post is the second in a series of three articles exploring the unique challenges that audio content moderation poses for platforms creating audio-focused digital spaces. The first article focused on the choices around recording audio for the purpose of content moderation in audio-only digital social spaces. This article discusses how platforms can choose to review audio for content moderation. The third article will cover cautionary tales from unmoderated audio spaces, as well as current approaches to and models of audio content moderation that have been enacted and publicly announced.

On June 21, Facebook rolled out Live Audio Rooms, its new audio-focused digital social spaces connected to public figures and certain Facebook Groups. Five days earlier, on June 16, Spotify launched its new audio-focused platform, Greenroom. On May 3, Twitter announced that Spaces, its audio-focused feature, would become available to those of the platform’s nearly 200 million users who have over 600 followers. This continues a growing trend, beginning with the launch of Clubhouse last March, of emerging and established tech companies wading into the world of social media focused on audio. While these spaces are new, the problem of moderating audio content to address hate is not.

This CBS News clip from 2012 on the problem of harassment of women in online games includes several examples of voice-based harassment, with one woman targeted by gender-based hate in the clip stating, “Everyone is always the same: I’m either fat and ugly or a slut.” In 2013, Gabriela Richard, a current ADL Belfer Fellow, produced an ethnographic study of harassment in online games and found that people from marginalized communities were often targeted specifically in voice communication settings. She wrote, “It isn’t always clear how in-game voice harassment (which can’t be as easily recorded as a text or picture message) is monitored or policed.”

While games such as those investigated by Richard have features that make audio moderation in those spaces distinct in some ways, voice chat in games and audio-focused social media spaces like Clubhouse overlap in terms of content moderation considerations. One of those considerations is recording, which we discussed in the first article in this series. Another is how a platform chooses to review audio content if it decides to record.

Livestreaming vs. Static Audio

If a tech company decides to record platform audio for content moderation, there are two potential pathways for how it will record audio content. The first is static audio recording: select audio is recorded, stored, and then reviewed later. The second is livestreaming audio: audio content is recorded and potentially reviewed as it is produced. The dominant mode of recording for audio content moderation is static audio, due to the ethical and technical challenges of livestreaming moderation.

For example, Clubhouse has chosen a version of the static model of recording for audio content moderation. Its guidelines state that it will record audio in a particular space on the platform while a channel is “live” or when people are actively talking in a specific Clubhouse space. If an incident is reported during this time, the company will store the audio and keep it for an investigation. If nothing is reported while a space is live, Clubhouse will delete the audio.
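
The retain-on-report behavior described above can be sketched in a few lines. This is a minimal illustration of the policy as summarized in this article, not Clubhouse’s actual implementation; the names `RoomRecording`, `report_incident`, and `close_room` are hypothetical.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class RoomRecording:
    """Temporary audio buffer for one live room (hypothetical structure)."""
    room_id: str
    audio_chunks: List[bytes] = field(default_factory=list)
    incident_reported: bool = False

    def append(self, chunk: bytes) -> None:
        # Audio is buffered only while the room is live.
        self.audio_chunks.append(chunk)

    def report_incident(self) -> None:
        # A report made while the room is live marks the buffer for retention.
        self.incident_reported = True


def close_room(recording: RoomRecording) -> Optional[bytes]:
    """Return audio kept for investigation, or None if the buffer is deleted."""
    if recording.incident_reported:
        return b"".join(recording.audio_chunks)
    # Nothing was reported while the room was live, so the audio is discarded.
    recording.audio_chunks.clear()
    return None
```

The design choice worth noticing is that the decision to keep audio depends entirely on a report arriving before the room ends; anything reported afterward has no recording to investigate.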

Several choices follow around how to investigate audio recorded in this way. A human content moderator can listen to part of the recording or its entirety. Then, the moderator can make a judgment based on what they hear and their understanding of the platform’s policies and their proper enforcement. Platforms could theoretically hire enough human moderators to cover their millions of users adequately. Still, most tech companies prefer to use automated solutions to scale their content moderation efforts to the size of their platforms while keeping down the costs of employing a massive number of human moderators.

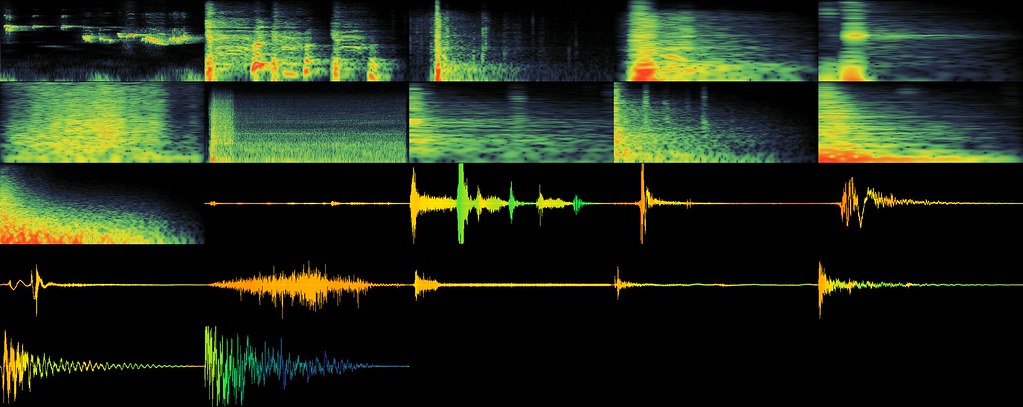

Many tech companies use automated tools that rely on artificial intelligence to detect hate, harassment, and extremism in text, even though these tools have many limitations. Tools for audio content are even less developed. Often, audio content is first transcribed into text via automated transcription software. The transcribed text is then run through more established text-based automated content moderation tools.
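
A rough sketch of this two-stage approach follows. Everything here is an assumption made for illustration: `fake_transcribe` stands in for a real speech-to-text product, and the `BLOCKLIST` keyword check stands in for a trained text classifier, which real platforms would use instead.

```python
from typing import Callable, Dict, Union

# Hypothetical placeholder terms; a real system would use a trained classifier.
BLOCKLIST = {"slur_a", "slur_b"}


def flag_text(text: str) -> bool:
    """Toy text moderation step: flag if any blocklisted word appears."""
    words = {word.strip(".,!?").lower() for word in text.split()}
    return not words.isdisjoint(BLOCKLIST)


def moderate_audio(
    audio: bytes, transcribe: Callable[[bytes], str]
) -> Dict[str, Union[str, bool]]:
    """Stage 1: audio to text. Stage 2: text to moderation decision."""
    transcript = transcribe(audio)
    return {"transcript": transcript, "flagged": flag_text(transcript)}


def fake_transcribe(audio: bytes) -> str:
    # Stand-in for speech-to-text: pretends the audio bytes are the words spoken.
    return audio.decode("utf-8")


result = moderate_audio(b"You are a slur_a!", fake_transcribe)
```

Note that the text stage only ever sees what the transcription stage hands it. If the transcriber mishears a word, the moderation tool has no way to recover the original speech.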

More Than Just Words

The process of automated transcription runs into difficulties, some mirroring the linguistic issues that plague efforts to use AI technology to detect hate and harassment on online platforms. Meaning in speech is more than just words: volume, pitch, and other non-textual cues affect how statements are understood. Voice-based conversations are less linear, and speakers sometimes overlap in a way that participants in text-based conversations cannot. When audio recordings of speech are transcribed, these cues and nuances are flattened, and the resulting transcript often contains only the words spoken. This can lead to harmful mistakes when content moderation systems are trained to treat textual content as the whole of a comment’s intended meaning.

Additionally, the problem of recognizing cultural context that exists in AI content moderation systems is also present in commercially available transcription software. Both content moderation systems and transcription services are built by recognizing patterns in existing content. Traditionally, the content these systems are built to recognize comes from specific cultural contexts and may reflect particular regional dialects of speech, for example, patterns in English as spoken in certain parts of the United States. Expecting such a product to perform well on English as spoken in other parts of the world, or in unrepresented American communities, when it was not built to recognize those patterns could result in harmful, unintended errors.

More broadly, this extra level of processing before human review provides more opportunities for dangerous errors in automated assessment to creep in because the distance between the user and content moderation is much greater. Instead of running on textual content created by a user, AI content moderation systems run on text created by an automated transcription product that was in turn based on words spoken by a user.
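
A back-of-the-envelope calculation shows why an added processing stage matters. The sketch below assumes, as a simplification, that each stage’s errors are independent and that a mistake at any stage spoils the final result; the accuracy figures are illustrative only and are not measurements of any real product.

```python
from math import prod
from typing import Sequence


def chain_accuracy(stage_accuracies: Sequence[float]) -> float:
    """Probability that every stage is right, assuming independent errors."""
    return prod(stage_accuracies)


# Illustrative numbers only, not measurements of any real system.
text_only = chain_accuracy([0.90])          # classifier reading user-written text
audio_chain = chain_accuracy([0.90, 0.90])  # transcription, then the same classifier

print(f"text only: {text_only:.2f}, audio chain: {audio_chain:.2f}")
```

Under these assumptions, a classifier that is right nine times out of ten on user-written text is right only about eight times out of ten once an equally accurate transcription step is placed in front of it.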

This challenge grows considerably, and the process becomes more prone to error, when considering livestreaming audio content moderation. Instead of recording static audio from a digital space and reviewing it later, content moderation could happen continuously and in real time in live audio spaces. Human content moderators could monitor audio spaces constantly and flag violative content as they hear it. Another possibility is technology that applies transcription software and AI content moderation tools to audio content continuously and in real time: transcribing spoken words into text, running that text through AI tools that detect various kinds of hate and harassment, and identifying the offending content. Both of these options seem technically daunting, ethically questionable when considering the potential for wrongful enforcement, and invasive for users in an audio-focused digital space. At the same time, these kinds of all-encompassing content moderation efforts are already the norm in text-based spaces.
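
The automated real-time option could look roughly like the sketch below. It is a simplified illustration under stated assumptions, not a description of any platform’s system: `monitor_stream`, the `transcribe` and `is_violation` callables, and the three-chunk context window are all hypothetical.

```python
from collections import deque
from typing import Callable, Deque, Iterable, Iterator, Tuple


def monitor_stream(
    chunks: Iterable[bytes],
    transcribe: Callable[[bytes], str],
    is_violation: Callable[[str], bool],
    window: int = 3,
) -> Iterator[Tuple[int, str]]:
    """Yield (chunk index, transcript context) whenever recent speech is flagged."""
    recent: Deque[str] = deque(maxlen=window)  # rolling window of recent transcripts
    for index, chunk in enumerate(chunks):
        recent.append(transcribe(chunk))
        context = " ".join(recent)
        if is_violation(context):
            yield index, context
            recent.clear()  # avoid flagging the same words again on the next chunk


# Stand-ins for real transcription and classification products.
def fake_transcribe(chunk: bytes) -> str:
    return chunk.decode("utf-8")


def fake_classifier(text: str) -> bool:
    return "bad phrase" in text


live_audio = [b"hello", b"bad", b"phrase", b"bye"]
flags = list(monitor_stream(live_audio, fake_transcribe, fake_classifier))
```

The rolling window exists because speech does not arrive in tidy units: here the flagged phrase is split across two audio chunks and would be missed if each chunk were checked in isolation. A longer window catches more, but it also means more of a conversation is held and analyzed at any moment, which is part of what makes this approach feel invasive.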

In February, Facebook announced that 97.1% of the hate speech removed from the platform in Q4 was detected proactively—before anyone reported it—using its AI content moderation systems. While this metric does not say anything meaningful about the prevalence of hate on Facebook, it shows that proactive, automated detection of text content on social media platforms is an established practice and tools exist to do it. How these tools develop—or don’t—for audio-focused platforms in ways that help create respectful, inclusive spaces and improve the experience of vulnerable and marginalized communities will be critical as these spaces expand in size and prominence.

As with other digital social spaces, it is essential that tech companies creating these spaces center the experiences of marginalized communities when building out their platforms and content moderation practices. At every stage in the creation and management of a digital social platform, from creating policy to designing and building the product, tech company staff and leadership should be asking how their choices will affect the experience of the vulnerable communities who are most likely to experience harm there. Without this centering of marginalized communities, audio-focused digital social platforms are likely to repeat many of the mistakes of social media’s past that have made current platforms such havens for hate, harassment, and even extremism.

Part three of this series, “Cautionary Tales and Current Innovations,” will explore the impact of the lack of moderation in audio spaces on players and norms in online games. It will also look at current innovations in audio content moderation related to the growth of audio-focused spaces.