Summary

The United States midterm electoral campaigns are in full force as candidates prepare advertisements and voter outreach. Social media is an integral part of their campaigning as more than half of all Americans get news from a social media platform.

But false or misleading information (including misinformation, spread without malice or coordination, and disinformation, purposely created to manipulate or cause harm) runs rampant on platforms, subverting democracy and leading to deadly consequences. One prominent example of where platforms have stumbled is the outbreak of war in Ukraine. False and misleading information spread by the Russian government became viral, amassing millions of comments, likes, and shares. Rewriting policies on the fly or thinking of misinformation as a problem only to be dealt with during election cycles guarantees misinformation will be treated as a short-term issue. Are social media platforms ready to combat misinformation related to the 2022 midterms? Overwhelmingly, we find they are not.

ADL analyzed eight major platforms’ policies (Twitter, Facebook, Instagram, Reddit, Discord, TikTok, Twitch, and YouTube) regarding elections and misinformation. For this report, we decided to use the term “misinformation” to encompass all misleading information, regardless of intent, because it is the term that tech platforms use in their policy guidelines.

In reviewing major platforms’ policies, we found:

- No platform bans election misinformation completely.

- No platform has a distinct disinformation policy of any kind.

- No platform allows full access to independent third parties to audit its claims of increased security and improved misinformation detection.

- Platforms’ policy rules and updates are difficult to find, scattered across pages of announcements, blog posts, and community guidelines.

The impact that false narratives have on our democracy is sobering. Lies spread online about the integrity of the 2020 presidential election, the most secure in history, culminated in the attack against the U.S. Capitol on January 6, 2021. The insurrection was planned on social media. Not only do lies about the 2020 election persist, but in the past two years, at least 19 states have also enacted laws making voting harder.

Misinformation is an ever-present threat and comes in many forms. But platforms’ policies do not distinguish between misinformation and disinformation. The difference between the two is not always straightforward, and it does not help that platforms conflate them. For example, Twitter defines misinformation as “claims that have been confirmed to be false by external, subject-matter experts or include information that is shared in a deceptive or confusing manner.” But the company’s definition is better suited to describing disinformation, which highlights that platforms discuss misinformation but do not explicitly deal with disinformation. Information that is spread purposefully to confuse and sow chaos surrounding the election is qualitatively different from information that may be mistaken but not malicious. No platform makes this distinction or even uses the word “disinformation” in its community guidelines.

Social media platforms must better prepare for the midterms and future election cycles by clarifying and strengthening their misinformation policies and enacting policies specific to disinformation. They cannot wait for crises like the January 6 insurrection but must be proactive to avoid treating false and misleading information as short-term issues. Otherwise, policymaking after the fact allows such content to thrive. Platforms need to take greater responsibility for their role in spreading misinformation; that they haven’t should concern all Americans.

Twitter

Policy: On August 11, 2022, Twitter announced a comprehensive approach to the 2022 midterms and how it intends to combat election misinformation through an information hub, tools to flag misinformation, and increasing protections for election officials and candidates.

What works:

- A platform-wide misinformation policy

- A coherent plan for the 2022 midterms

- An election information hub

- Flagging/labeling misinformation when posted

- Increased protections for election officials and candidates

- Sitewide ban on political ads

- High transparency for political ads

What to fix:

- Stricter misinformation rules are not year-round

- No specific disinformation policy

- Harmful misinformation is banned in only particular cases

- Policy rules and updates are not centrally located and easy to find

- Does not allow third parties to audit its research or site

Twitter implements its "Civic Integrity Policy" during an election cycle. Violations of this policy include:

- Misleading information on how to participate in elections

- Suppression or intimidation of participation

- Misleading information about outcomes

- False or misleading affiliations

Not all political misinformation is banned under this policy. Inaccurate statements about officials, candidates, and parties are allowed, as are biased or polarizing content, and parody. Violating policy can result in punishments of increasing severity, culminating in a permanent account lock. In addition to the Civic Integrity Policy, Twitter says it is also rolling out other changes. It will create U.S. state-specific event hubs where users can receive reliable information on voting in their locality; advanced labels for misinformation, such as candidate labels to identify who is running for office; and better protections for officials, candidates, journalists, and others. These protections include reminders to use a strong password, detections and alerts to respond to suspicious activity, and increased login defenses.

Twitter has proactively protected users and provided them with tools to access what they deem trustworthy information, provided in part through collaborations with the Associated Press and Reuters. Twitter previously created an ad transparency center, which has since been archived after the company removed political and issue ads in 2019.

ADL still has serious concerns about Twitter's policy, however.

The Civic Integrity Policy is only used during elections and not year-round. Otherwise, Twitter is not as strict in moderating political misinformation. While it defines misinformation as “claims that have been confirmed to be false by external, subject-matter experts or include information that is shared in a deceptive or confusing manner,” it only takes action against misinformation in particular situations. These include:

- Crisis situations: armed conflict, public health emergencies, large-scale natural disasters

- False or misleading information on COVID-19 that may lead to harm

- Misleading/synthetic media: media that has been significantly altered, shared in a deceptive manner or with false context, or that will likely result in public confusion or harm, such as deepfakes

- Civic integrity: manipulating or interfering in elections or civic processes

Beyond these circumstances, there is no indication that Twitter will enforce its strike system, reduce visibility or perform any of its policy violation actions on other forms of harmful misinformation. For example, Twitter will take action against misinformation on the efficacy of COVID vaccines, but not against similar misinformation on the polio vaccine despite comparable harm.

Twitter claims it conducted a study in the U.S. and Brazil to prevent misleading Tweets from being recommended through notifications. The company contends that impressions on misleading information dropped by 1.6 million per month because of its experiment, but there is no way for the public to access the study. Twitter must be more transparent so third-party organizations can audit its research.

Facebook (owned by Meta)

Policy: Facebook has two informational hubs with resources for the 2022 midterms. These hubs are both available on Meta’s Government, Politics, and Advocacy page. However, most of the company’s policy is the same as the one used for the 2020 elections.

What works:

- A plan for the 2022 midterms

- A platform-wide misinformation policy

- Election information hub, with resources relevant to every kind of user

- Flagging/labeling misinformation when posted

- Increased protections for election officials and candidates

- High transparency for political ads

- Ability to turn political ads off

What to fix:

- Harmful misinformation is banned in only particular cases

- Policy rules and updates are not centrally located and easy to find

- No specific disinformation policy

- Few policy changes post-2020

- Does not allow third parties to audit its research or site

Facebook’s first hub, “Election Hub: United States Midterm,” focuses on candidates, officials, and election organizers. The hub includes direct links for resources and election integrity and material on how to launch a campaign, reach and engage voters, and get out the vote. While helpful to some, this information is not helpful for the average Facebook user who has no intention of running a campaign. The “Top Resources” page shows users how to categorize ads or create a page for a government agency or non-profit organization. There are also resources available for further protection of election officials and candidates. Of the five items Facebook lists, only one enables users to learn more about the platform’s commitment to transparency.

Facebook’s “Election Hub: The United States Midterm.”

The second hub, “Preparing for Elections,” describes Facebook’s general plan for elections, but also includes a quick fact sheet on the company’s process for the 2022 midterms. This hub is more helpful for average users than the first since they may be interested in learning how to turn political ads on or off, how misinformation labels work, and why content may be removed.

Facebook’s “Preparing for Elections” page.

Although the second hub provides a wealth of material on Facebook’s general election policies, updates for the 2022 midterms are relegated to the fact sheet, which describes either work that the company has completed or policies that have been in place since 2020. There is also a post available on Meta’s Newsroom, but it reiterates much of the same information from the fact sheet.

Facebook will implement voting notifications in English and Spanish. Additionally, the company is investing $5 million in new fact-checking and media literacy initiatives, including college campus training for first-time voters. But these are scant improvements; Facebook should explain what it has done since 2020 to protect election integrity, given that misinformation garnered six times more clicks than credible news during the 2020 election cycle on the platform. Facebook claims it has removed 150 coordinated inauthentic behavior networks since 2017, but offers no evidence on whether its efforts were successful aside from its word.

Unlike Twitter, Facebook has barely updated its misinformation policy for the 2022 midterms. Its Election Hub contains a link on combating misinformation that directs users to its misinformation strategy. This strategy, called “remove, reduce and inform,” has been in place since 2016 and was updated in 2019. It takes a three-pronged approach to combat misinformation: Facebook removes content that potentially violates its policy, reduces the ability of users to find misleading content, and then provides users with credible information.

Facebook’s “Remove, Reduce, and Inform” news page.

The policies described in Facebook’s 2019 misinformation strategy are outdated and unclear. For example, Facebook claims it ensures users see less low-quality and irrelevant content in their news feeds. The platform introduced a policy allowing users to override political ads, but only if requested by the user. Users can change their ad preferences directly on a social or political ad by clicking the information tab on that ad or by going to their settings and changing their ad preferences. But it is a bit disingenuous for Facebook to say users can “turn off” these ads, since in our research, the only options are “no preference” or “see less.”

At the same time, Facebook tells advertisers in the Election Hub that they can “boost” posts directly from their pages, targeting users via age, location, and gender. We cannot verify whether Facebook’s strategies to de-amplify content are comprehensive or successful because Facebook limits third-party research. So even though it may have an ad transparency center, where one can access all ads across Facebook platforms, that does not necessarily mean Facebook provides adequate research transparency. Facebook should make its research data available so that third-party organizations can verify its claims.

Facebook’s “Helping to Protect the 2020 U.S. Elections” news page.

Instagram (owned by Meta)

Policy: Instagram shares many of the same policies as Facebook.

What works:

- A plan for the 2022 midterms

- A platform-wide misinformation policy

- An election information hub

- Flagging/labeling misinformation when posted

- A voting information center

- Ability to turn political ads off

- Increased protections for election officials and candidates

- High transparency for political ads

What to fix:

- Policy rules and updates are not centrally located and easy to find

- No specific disinformation policy

- Harmful misinformation is banned in only particular cases

- Does not allow third parties to audit its research or site

- Few policy changes post-2020

- Difficult to determine which policies and resources are available for Instagram versus Facebook

Many of Instagram's features, such as Stories, are integrated into Facebook's features. Facebook's policy updates often describe changes the company makes in terms of features rather than the changes on specific platforms. For example, Facebook states, "We’re continuing to connect people with details about voter registration and the election from their state election officials through feed notifications and our Voting Information Center." "Feed notifications" may refer to Facebook’s news feed or Instagram’s feed. Facebook and Instagram also use the ad library to provide transparency around political ads. Instagram, like Facebook, provides a way to see fewer political ads.

It is difficult to delineate which changes affect Facebook, Instagram, or both. Which policy shifts will be instituted for which site, and for what feature? For example, where will the Voting Information Center be located? Will there be links to it on the other site? It was only within a Facebook news article that we discovered the location of the Voting Information Center; it will be available at the top of both Facebook and Instagram users’ feeds.

Moreover, Meta’s Instagram-specific policy for determining violative misinformation is unclear. The page for Meta's misinformation policy is titled "Meta's Transparency Center", but the link to its policy is in "Facebook Community Standards." Instagram’s Community Guidelines often provide links to the Facebook Community Standards for certain topics, like threats of violence, but there are no links to the misinformation policy on the page.

Meta’s “Misinformation” page under “Facebook Community Standards.”

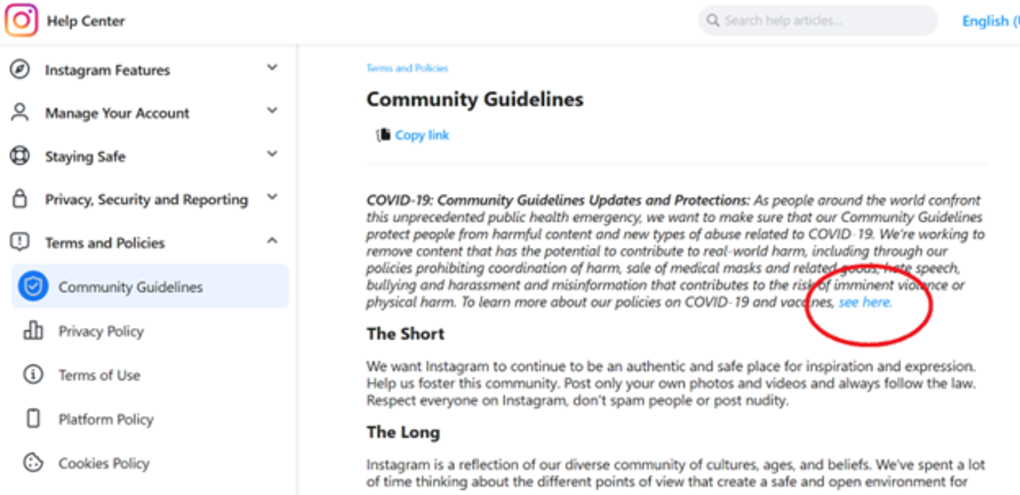

The only clear information on Instagram’s misinformation policies is a page hidden in the help center. This page cannot be accessed through the Community Guidelines page directly. A user must either search for it in the search bar or find the link on the “COVID-19 and Vaccine Policy Updates and Protections” page. Instagram, and its parent company Meta, should standardize and refine their information and help centers so that information can be easily accessed.

Instagram's “Community Guidelines” page. The link to its Covid-19 Community Guidelines is within the red circle. There is no way to access the complete misinformation policy from this page.

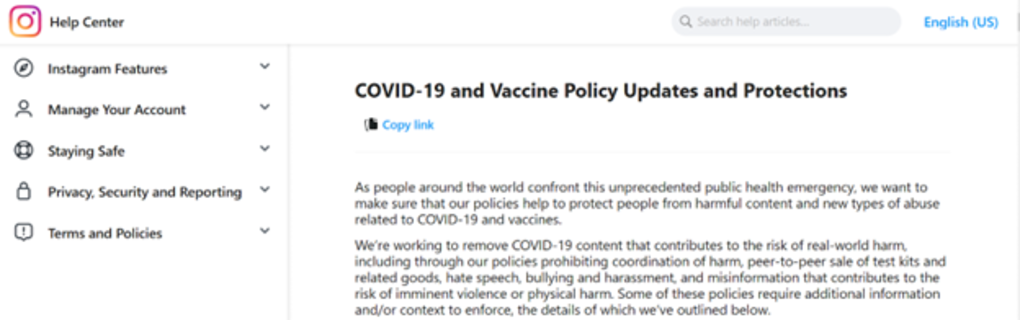

Instagram’s “Covid-19 and Vaccine Policy Updates and Protections” page.

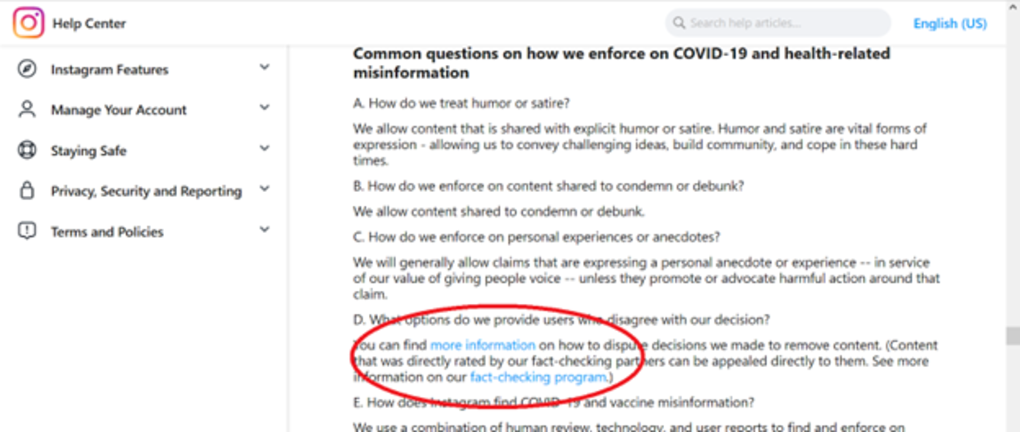

The links provided to access Instagram’s misinformation policy.

Instagram’s misinformation policy page, after clicking “fact-checking program.”

TikTok

Policy: TikTok echoes many of Meta and Twitter’s policy decisions, including having an election center where users can access credible information on how and where to vote, who is on the ballot, resources for deaf users, and other election-related information. TikTok is also rolling out a series of specialized hashtags and labels for government officials, politicians, and political parties.

What works:

- A coherent plan for the 2022 midterms

- A platform-wide misinformation policy

- An election information hub

- Flagging/labeling misinformation when posted

- Sitewide ban on political ads

What to fix:

- Policy rules and updates are neither centrally located nor easy to find

- No specific disinformation policy

- Harmful misinformation is banned in only particular cases

TikTok has a detailed set of categories of banned misinformation. TikTok limits the following:

- Misinformation that incites hate or prejudice

- Misinformation related to emergencies that incites panic

- Medical misinformation that can cause harm to an individual’s physical health

- Content that misleads community members about elections or other civic processes

- Conspiratorial content including content that attacks a specific person or a protected group, includes a violent call to action, or denies a violent or tragic event occurred

- Digital forgeries (synthetic media or manipulated media) that mislead users by distorting the truth of events, and coordinated inauthentic behavior, such as the use of multiple accounts to exert influence and sway public opinion

TikTok’s categories of banned misinformation are comprehensive. It is also one of two platforms reviewed (Twitch being the other) that ban misinformation targeting a protected group. This is important because of the rising violence vulnerable groups face. Yet TikTok’s well-designed policy does not deter misinformation, as reports show.

Like Facebook, Instagram, and Twitter, TikTok flags misinformation as it is posted. The four platforms have also stated they will try to reduce the amplification of misinformation. TikTok states on its election integrity page that content, such as unverified polling information, will either be ineligible for recommendation on a user’s feed or users will be redirected to verifiable information. Content that undergoes fact-checking or cannot be fact-checked will not be recommended in users’ feeds.

However, TikTok’s policy rules and updates are difficult to find, scattered across pages of announcements, blogs, and community guidelines instead of gathered in a centralized hub. We found that this was a recurring problem across many of the platforms. It could be solved by investing the resources to create a centralized hub and continually updating that hub as new blog posts or policy changes are published.

Reddit

Policy: Reddit has no general misinformation policy, but allows individual subreddits and community moderators to determine their own guidelines. It only bans health misinformation as a company-wide policy, and this ban is categorized as “violence” rather than misinformation.

What works:

- An election information hub with resources

- High transparency for political ads

What to fix:

- No platform-wide misinformation policy

- Low enforcement of transparency measures for political ads

Reddit is a decentralized platform comprising thousands of subreddits, user-created boards focused on specific topics or interests and monitored by moderators who are either company employees or volunteers. Each subreddit determines whether misinformation is allowed or not and, if not, what kinds are banned and what the punishment should be. Who makes the rules and how strictly they are enforced depends on the culture of the subreddit; some are more egalitarian than others, relying on member input when deciding rules. Different subreddits on similar topics can have different rules. Take health misinformation on subreddits for medical professionals: the r/nursing subreddit states no “anti-science rubbish” is allowed, while the subreddit r/Residency has no such rule and no rules on misinformation at all.

Reddit does have an election hub for the 2022 midterms. However, unlike either Meta or Twitter, it does not detail policies or efforts to combat misinformation. Reddit has announced partnerships with organizations like VoteRiders and the League of Women Voters to increase voter education. It will also host an AMA (Ask Me Anything, subreddits for question-and-answer interactive interviews) series called “The 2022 Midterms On Reddit,” where communities will host discussions with experts on the voting process. These efforts are modest at best.

Reddit allows political advertising. It bans deceptive or untrue statements, as determined by the Reddit sales team rather than a third party. Reddit says it is dedicated to transparency and requires all political ad campaigns to be posted on the r/RedditPoliticalAds subreddit. Political ads must also have comments enabled for at least 24 hours. However, while comments are permitted, that does not mean users receive any actionable feedback from advertisers. Reddit “strongly encourages” political advertisers to engage with users in ad comments but does not require them to do so, leaving advertisers free to ignore the suggestion. As a consequence of Reddit’s hands-off approach, users lack information. Reddit should, at minimum, have a sitewide misinformation policy that all subreddits must follow.

Discord

Policy: Discord has no 2022 midterm misinformation policy; the policy it has is vague and unhelpful.

What works:

- A platform-wide misinformation policy

- Sitewide ban on political ads

What to fix:

- No 2022 midterm policy

- No specific disinformation policy

- Harmful misinformation is banned in only particular cases

- Does not allow third parties to audit its research or site

- Few policy changes post-2020

- No flagging/labeling of misinformation when posted

- Policy rules and updates are not centrally located and easy to find

Discord, like Reddit, is relatively decentralized. The platform is separated into thousands of unique channels covering topics as varied as homework help and gaming tips and tricks. Each channel, like a subreddit, has its own moderators and guidelines that are unique to that channel, in addition to the sitewide guidelines.

Discord's misinformation policy is as stated: "Content that is false, misleading, and can lead to significant risk of physical or societal harm may not be shared on Discord. We may remove content if we reasonably believe its spread could result in damage to physical infrastructure, injury of others, obstruction of participation in civic processes, or the endangerment of public health." It is short, vague, and lacks many of the characteristics of the other misinformation policies reviewed. Discord fails to define “societal harm.” The platform does not explain its review process when potentially objectionable content is found.

Discord has not updated its policy or made any policy announcements for the 2022 midterms, although it provided resources for the 2020 elections comprising a blog post listing links to third-party websites where users could gather voter education materials and learn how to register to vote. Unlike Reddit, Discord bans misinformation sitewide. Though lacking the specificity of Meta or Twitter, Discord similarly states that certain instances of false content will be taken down or moderated. Also like Meta and Twitter, these categories cover obstruction of civic processes such as voting, endangerment of public health, and physical damage to property or people. Finally, Discord is one of the four platforms reviewed (Twitter, TikTok, and Twitch are the others) that bans political ads on its site, by virtue of all advertising being banned from Discord.

Discord’s policy is not detailed and lacks relevant information, with details scattered across blog posts or announcements. Discord bans “endangerment of public health” but fails to clarify its meaning: Does this apply to vaccine misinformation broadly or Covid-19 misinformation specifically? Users must navigate to a different page to find Discord’s explanation of its health misinformation policy, which bans health misinformation likely to cause harm, including all anti-vaccination content. This information is neither readily available in Discord’s community guidelines nor is there a direct link. Like Meta, Discord should create a centralized hub where all community guidelines and policies are listed and explained.

Twitch

Policy: Twitch has no specific plans or policy proposals for the 2022 midterms, although its misinformation policy is robust.

What works:

- A platform-wide misinformation policy

- Sitewide ban on political ads

What to fix:

- No 2022 midterms policy

- Harmful misinformation is banned in only particular cases

- No specific disinformation policy

- Does not allow third parties to audit its research or site

- Policy rules and updates are not centrally located and easy to find

- No flagging/labeling of misinformation when posted

Twitch shares many structural similarities with Discord and Reddit. It also allows users to create their own channels with unique guidelines and moderators. However, unlike those two platforms, Twitch has a thorough misinformation policy, one more in line with that of Twitter or TikTok. Like TikTok, Twitch also bans political advertising on its platform.

Like all of the platforms reviewed so far that have misinformation policies, Twitch only restricts select categories of misinformation:

- Misinformation that targets protected identities, based on characteristics such as race, sexual orientation and gender identity

- Harmful health misinformation and conspiracy theories related to dangerous treatments

- Misinformation promoted by conspiracy networks tied to violence/promoting violence

- Civic misinformation that undermines the integrity of a civic or political process

- Public emergencies that impact public safety

Of the eight platforms reviewed, only Twitch and TikTok ban misinformation targeting protected identity groups and ban misinformation from “conspiracy networks.” This level of specificity is welcome given the vague nature of Meta and Twitter's categories, although Twitch faces the potential problem of more specific bans leading to narrower policies.

Twitch bans conspiracy networks tied to violence, but what if the conspiracy network is not promoting violence? What if a group is not a “conspiracy network”? The platform would need increasingly specific bans to cover the wide range of content associated with misinformation. Twitch should ban misinformation from conspiracy networks altogether, rather than constantly qualifying which kinds of misinformation a network is allowed to spread.

Another solution is to separate its policy into misinformation and disinformation. Conspiracy networks, by their nature, maliciously spread misleading information. By definition, this is disinformation. By further defining disinformation, Twitch could save itself the trouble of specifying every possible form of misleading content.

YouTube

Policy: YouTube’s misinformation policy is well-designed and accessible, but its midterm policy is not significantly different from the other platforms that have one. This includes a voting information panel, redirection to trustworthy sources when searching for midterm content, and a media literacy campaign.

What works:

- A platform-wide misinformation policy

- A coherent plan for the 2022 midterms

- A voting information panel

- A well-designed community guidelines page

What to fix:

- No flagging/labeling of misinformation when posted

- Harmful misinformation is banned in only particular cases

- Does not allow third parties to audit its research or site

- No specific disinformation policy

YouTube makes its community guidelines on misinformation available in an accessible, intuitive way. Its misinformation policy directs users to detailed pages related to election and vaccine misinformation. The Covid-19 medical misinformation page describes what kinds of content are not allowed and lists 28 examples, from “Denial that Covid-19 exists” to “Claims that people are immune to the virus based on their race.” Some pages, like the elections misinformation page and vaccine misinformation page, provide videos to further clarify YouTube’s policy on misinformation. The consequences of posting misinformation are also linked from each of the pages. YouTube also has a clear policy on political advertising and information, with links to its transparency reporting on political ads.

But YouTube’s policy varies little from the seven other platforms reviewed. Like the others, it only bans specific categories of misinformation:

- Suppression of census participation

- Manipulated content

- Misattributed content

- Promoting dangerous remedies, cures, or substances

- Contradicting expert consensus on certain safe medical practices

These five categories are in addition to the three categories that receive their own pages: election misinformation, Covid-19 medical misinformation, and vaccine misinformation. It is unclear why some categories receive separate pages, and others do not. YouTube’s categories are fairly comprehensive but are still lacking. For example, it does not ban misinformation that targets a protected group or identity, like TikTok and Twitch.

YouTube’s plan for the 2022 midterms is available in a blog post separate from its election misinformation page. Like many of the other platforms, YouTube’s plan is a mixture of providing information and redirection, along with de-amplification. At the top of the platform headline, an “information panel” will be provided, where users can click to gather more information directly from Google (YouTube’s parent company) on “how to vote” or “how to register to vote.” On Election Day, this panel will also provide voting information and live election updates provided by organizations YouTube designates as “authoritative sources.” Searching for federal candidates will also reveal a panel similar to the information panel. This panel will allow users to see key information about the candidate, including their party affiliation.

YouTube claims it has started removing videos and enforcing its guidelines against channels that spread misinformation about the midterms and the 2020 U.S. presidential election. But we cannot know whether these efforts work because third-party organizations have not audited them.

YouTube’s misinformation policy on its community guidelines page.

Conclusion

Platforms are reactive rather than proactive when they craft misinformation policies, especially those on elections. The 2016 elections were replete with bad actors and foreign interference, yet no platform distinguishes between misinformation and disinformation. The latter word is missing from the policies of the eight platforms analyzed in this report. Until social media platforms treat misleading information systematically rather than as a game of content moderation whack-a-mole, it will continue to spread unchecked. This will mean more polarization and more unrest in our society. According to experts, including ADL’s own, we are already at greater risk of political violence and threats to democratic processes and institutions. Social media platforms must act decisively to stem the tide of election misinformation and all forms of misleading content.

Recommendations

- Establish a disinformation policy. Every platform conflated misinformation with disinformation within its policies. No platform distinguished the two in its terms of service or content policy guidelines. For example, a tweet that states, “I think voting starts on Sunday,” differs from one that instructs individuals to vote on Sunday so that they miss an election. Misinformation and disinformation also require different moderation approaches. Redirection and education would combat the former; the latter should require removal. By not delineating between these two types of misleading information and painting misleading information with a broad brush, platforms weaken the efficacy of their policies. Platforms’ policies need to cover misinformation and disinformation separately.

- Maintain election misinformation policies and resources throughout the year. Twitter only applies its Civic Integrity Policy during elections. Meta is instituting new protections and candidate pages for the 2022 midterms. But platforms should enforce these policies throughout the year; federal and state gubernatorial elections are not the only civic events in American political life that generate surges of significant misinformation. For example, misleading content labels and candidate profiles used by Twitter would also be helpful for school board elections or city council elections. Additionally, as we saw with the insurrection, election misinformation can have serious ramifications months after an election takes place.

- Keep a centralized hub of updated community guidelines and include them in transparency reports. Every platform has pages devoted to its community guidelines or trust and safety, but some were not updated or lacked the context to understand the guidelines posted. Even YouTube’s well-organized community guidelines page did not contain its blog posts. Every blog post, update, or community policy change should be on a single webpage, as well as in transparency reports, so that users can easily access this information. Platforms should also periodically remind users through notifications or messages of the existence of these resources.

- Make internal research data available to third-party organizations. Nearly every platform claimed it improved its misinformation tools, but there is no way to determine whether they work. Allowing third-party researchers to audit platforms’ studies would lend more credence to claims of decreased misinformation and could improve the public’s trust in platforms’ integrity.