New Challenges Ahead for the Next Generation of Social Media

Introduction: ADL and New Technologies

The timeless mission of the Anti-Defamation League (ADL) is to stop the defamation of the Jewish people and secure justice and fair treatment to all. The mission of ADL’s Center for Technology and Society is to ask the question “How do we secure justice and fair treatment for all in a digital environment?”

Since 1985, when it published its report on “Electronic Bulletin Boards of Hate,” ADL has been at the forefront of evaluating how new technologies can be used for good and misused to promote hate and harassment in society. At the same time, ADL has worked with technology companies to show them how they can address these issues and work to make the communities their products foster more respectful and inclusive.

We continue this work today alongside Silicon Valley giants such as Facebook, Twitter, Google, Microsoft, Reddit, and others.

This report represents a forward-looking review of the hopes and issues raised by the newest ecosystem of community-fostering technology products: social virtual reality, or social VR.

Social VR is an emerging application of virtual reality technology that brings human users together in virtual spaces with other human users for the primary purpose of engaging in social interaction. Users interact in virtual spaces using “avatars”, or forms (often, but not necessarily, human-like forms) that users can control to move around in space and interact with environments and other users. While virtual worlds are by no means new, the unique affordances of virtual reality (VR) create a feeling of “really being there” for many users. This sense of reality can make interactions more impactful — in both positive and negative ways.

As in the early days of social media platforms, there is enthusiasm around the idea that Social VR will foster cross-cultural understanding and motivate positive social change. However, there are also research findings and real-world examples of hate, bias, and harassment in Social VR. This report identifies key examples of hate reported in Social VR to date and points out opportunities and cautionary tales for the Social VR industry. This forward-looking analysis is contextualized in prior findings about bias and harassment in video games and online social networking platforms.

Even though the Social VR industry is new, high-profile incidents of bias, hate, and harassment have already been reported. But because the industry is new, there is also a prime opportunity to design platforms and tools that will reduce and prevent harassment in the future. This report lays the groundwork for future analysis of tools and strategies to address hate in Social VR.

The Social VR industry is growing rapidly. This is due in part to the recent availability of mass-produced consumer VR equipment, such as high-end headsets for use with personal computers (HTC Vive, Oculus Rift), affordable headsets for use with smartphones (Samsung Gear, Google Cardboard), and stand-alone headsets available for less than the price of a personal computer or video game console (Oculus Go, Lenovo Mirage Solo). Social networking platforms like Facebook, tech giants like Google and HTC, and numerous VR start-up companies have developed new VR-focused social networks, content distribution platforms, and easy-to-use tools for software developers. With the creation and distribution of VR content easier than ever, hundreds of companies are developing VR experiences for use in social networking, gaming (both multiplayer and solo), architecture, design, advertising, healthcare, workforce training, and education.

The industry is predicted to boom over the next few years. Estimates for the entire VR market in 2016 were between $1 billion[i] and $2 billion[ii], while estimates for VR hardware sales alone are as high as $1.8 billion in 2018[iii]. In five years, the market for VR and semi-immersive augmented reality (AR) technology and content is expected to reach between $9 billion and $15 billion[iv]. This follows several years of rapid innovation but slow market growth, which some have called a “trough of disillusionment”[v]. Games are already a popular use of VR, but social VR, enterprise applications, health and wellness, and other new verticals are expected to grow and drive adoption of the technology. While 2016 and 2017 saw greater affordability of headsets and growing user numbers[vi], more high-quality content, less expensive stand-alone headsets, willing investors, and an expansion of applications across several industries are expected to drive growth in 2018[vii].

To date, at least 16 platforms primarily designed to enable social interactions in VR exist. These platforms vary in terms of the interactions they allow. While all allow some level of interaction between individual users, many also offer social activities for users to pursue together, from watching concerts to playing games to collaboratively building worlds to gambling.

Major Platforms:

Platforms recognized as early entrants in Social VR and/or supported by significant research and development resources.

Facebook Spaces

Facebook Spaces is a virtual reality interactive environment for socializing with Facebook contacts. Facebook photos are used to create the user’s avatar.

Oculus Rooms

Oculus Rooms is a Social VR platform for socializing with other users. Users can play games, watch shows and movies, listen to music, and more in groups with friends.

Oculus is owned by Facebook.

Oculus Venues

Oculus Venues is a Social VR platform focused on entertainment, including concerts, sporting events, and other live events.

Oculus is owned by Facebook.

Rec Room

Rec Room is a Social VR platform focused on playing social games. Users can play pre-designed games or create their own.

VRChat

VRChat is a Social VR platform where users create custom avatars, build worlds, and interact with other users.

AltspaceVR

AltspaceVR is a Social VR platform where users can interact with other people, play games, and attend free live events.

AltspaceVR is owned by Microsoft.

vTime

vTime is a Social VR platform for users to socialize with family and friends using a smartphone or VR headset. Users interact in detailed, artistic environments.

Mozilla Hubs

Mozilla Hubs is a mixed reality social networking platform. The platform runs on WebVR, an open-source, easy-to-use development kit that can be designed and accessed through computer browsers as well as with VR headsets.

Mozilla Hubs is developed by Mozilla.

Minor Platforms:

Newer entrants into Social VR and platforms with niche audiences.

Sansar

Sansar is a Social VR platform created by Linden Lab, the creators of Second Life. Users in Sansar can socialize, host live events, create personalized spaces, and create and sell items in the world.

BeanVR

BeanVR is a social VR app where users can engage in a variety of activities including games, education, debates, and presentations. Users in VR can speak with users with 2D cameras.

High Fidelity

High Fidelity connects people from around the world to create and meet in virtual worlds within a platform called The Metaverse.

TheWaveVR

TheWaveVR is a music virtual reality platform where users can view, host, and attend shows, concerts, and festivals.

JanusVR

JanusVR turns 2D web pages into 3D spaces. Users can interact with each other in the virtual 3D spaces.

SlotsMillionVR

SlotsMillionVR is an online casino app that allows the user to play for real money in private casino rooms.

SVVR

SVVR’s Multiverse initiative provides developer tools for creating virtual worlds and interactive connections between virtual worlds and the physical world.

What Makes Social VR Different Than Other Technologies

VR technology has several unique affordances that set it apart from other gaming and social networking technologies. Presence (a psychological feeling that you are truly interacting with a virtual environment), immersion (an effect of reality created by the technology displaying the virtual world)[viii], and embodiment[ix] (feeling like your virtual body is your real body) together contribute to an immersive, lifelike experience. Technology-enabled sensory experiences, like 360-degree vision and three-dimensional audio, spatial mobility in several directions (left/right, up/down, and forward/backward), high-quality storytelling, and easy-to-use interfaces help to produce the feeling that you are “really there”. In psychological research, users often report feeling that they are fully immersed in virtual experiences.

These affordances heighten both the promise and the threat of hate in Social VR. As one recent headline put it, “when virtual reality feels real, so does the sexual harassment.”[x] In addition, patterns of hate in social media and long-standing industry norms of biased representation of women, people of color, and others in video games create a precedent for tolerating harassment in Social VR. Indeed, recent news reports and research show that Social VR will become a hotbed of hate, bias, and harassment if preventive measures are not taken soon.

Hopeful Futures: Equity, Justice, and VR

While problems with hate, bias, and harassment are emerging in Social VR, there are several positive theories, indicators, and potential applications of VR that seem poised to advance justice and equity in society. Many of the positive applications of VR discussed so far go beyond Social VR and include the effects of embodying a person different from oneself in virtual worlds and using VR for educational purposes. Such research and uses of VR might open up future routes of development for Social VR. User experience in single-user VR content may translate to the experience of social VR, too. Plus, educational content and empathy training could be combined with multi-user social elements to create scalable or more social experiences.

Empathy

Filmmaker Chris Milk famously dubbed VR an “empathy machine.” He believes VR will make people more compassionate, empathetic, and connected, fostering greater understanding and cooperation among individuals in societies[xi]. The empathy machine idea has motivated global organizations like the United Nations to adopt VR and immersive filmmaking techniques. Milk’s 2015 short immersive film Clouds Over Sidra[xii], for example, was used to seek international funding and support for the UN’s management of the Syrian refugee crisis and it yielded tangible results[xiii].

These hopes for VR echo the hope surrounding the development of the Internet and of social media platforms during the first decade of their existence in mainstream culture. Consumer uses of the Internet developed alongside the utopian ambitions of early Silicon Valley entrepreneurs, many of whom came of age in progressive communities seeking to change the world for the better with the help of technology in the 1960s and 1970s[xiv]. As the Internet became common in homes, platforms like bulletin board systems (BBS) in the 1990s helped people build community around shared cultural interests, like television shows[xv]. Since the turn of the century, platforms like Facebook and Twitter have given ordinary people the ability to connect with others around the world. Hopes that the internet would act as a lever for increased political engagement were borne out in real-world events. Twitter and Facebook, for example, were powerful forces behind the organization of real-world protests against the Egyptian government in Tahrir Square[xvi] in 2011 and of other “Arab Spring” protests in Libya and Tunisia. Enthusiasm for the internet’s democratizing impact has been tempered somewhat in recent years by rising numbers of hate groups online[xvii][xviii] and ongoing reflections, including from Facebook itself[xix], about the role that social media may have played in allowing U.S. adversaries to influence the 2016 presidential election[xx].

Research has now been undertaken to determine whether the empathy applications of VR will deliver. There are indications from social psychology research that VR may be able to increase empathy and willingness to help people different from oneself. The “walk a mile in another’s shoes” phenomenon, or “Proteus effect”[xxi][xxii], is based on the belief that people may feel less threatened by people of different races after inhabiting an avatar of a different race from their own in VR. Researchers and the tech press alike have hailed these findings as an indicator that VR has great potential as a tool, even a “virtual trick”[xxiii], for tackling racism. One possible real-world application of VR was demonstrated through a collaboration between legal scholars and psychologists. The team showed that VR immersion in a body similar to a defendant’s may prompt fairer evaluation of evidence, suggesting possible use to reduce bias in criminal sentencing[xxiv]. Another is the use of VR for sensitivity training for police departments in the hope that it will reduce bias and improve police-community relations[xxv]. However, care is needed: while putting people in avatars of a different race from their own may increase empathy and reduce racist behaviors, other research has shown that racial biases could be heightened instead[xxvi].

Other research has suggested that embodying an elderly person in VR might also reduce bias towards the elderly[xxvii]. Researchers have even found that embodying a “superhero” in a virtual world makes people more willing to help another person in the real world[xxviii].

Fighting Anti-Semitism

VR is also being used to reduce anti-Semitism and fight Holocaust denial. Several experiences to date have dramatized stories from Jewish people affected by the Holocaust, for example. The University of Southern California’s Shoah Foundation iWitness 360 program released the short immersive film Lala in 2017[xxix]. In this film, a Holocaust survivor narrates a small piece of his family’s experience in Poland during World War II. The family is forced to move to a ghetto, and their family dog, Lala, follows them after they leave her behind. Every night, Lala finds the family, and every day, Lala leaves the ghetto to care for a litter of puppies. The narrator concludes from this story that “love is stronger than hate”. The film switches between placing the viewer in the narrator’s home and in an immersive, animated world, connecting memories of the past with the present day.

In 2018, the Anne Frank House, in collaboration with Force Field, released the experience Anne Frank House VR[xxx]. In this experience, the user can take an immersive tour of a virtual reproduction of the Amsterdam house in which the Jewish teenager Anne Frank hid with her family from the Nazi occupation of the Netherlands from 1942 until their capture by the Nazis in 1944. The tour guides the user through the house, featuring quotes from her published diary and objects that she wrote about throughout.

VR is even being used to prosecute former Nazi officers. In Germany, the German Public Prosecution Service commissioned the creation of a VR rendering of Auschwitz that helped lead to the conviction of former SS guard Reinhold Hanning in 2016[xxxi]. In 2017, Mel Films released a short documentary film called “Nazi VR” about the VR model and the case[xxxii].

More work along these lines is to come. The United States Holocaust Memorial Museum is exploring ways to use VR to deliver educational content that is engaging for young people. The expansion into VR contributes to the museum’s new efforts to educate the public about the “why” — not just the “what” and “how” — of the Holocaust[xxxiii].

Sexual Harassment and Gender Diversity

Some companies are even exploring the use of VR for workplace harassment training. For example, the start-up company Vantage Point creates immersive experiences based on the narratives of victims of sexual harassment and assault[xxxiv][xxxv]. These situations are used to foster empathy, spot sexual harassment, identify stigma and bias, and learn bystander intervention techniques in workplaces and on college campuses. The company argues that the immersive nature of VR can trigger “state dependent learning,” which they describe as “a psychological phenomena where employee response accuracy and memory is at its highest when the training environment is able to most closely replicate the stimuli of the real-world environment.”[xxxvi]

A positive aspect of the VR industry overall is a high level of gender diversity and a demonstrated interest in fostering diversity and inclusion for women as the industry matures. Gender diversity in the industry has drawn significant media attention, with news outlets highlighting women creators and executives in industry reports and lists[xxxvii][xxxviii]. Furthermore, there are several industry groups devoted to supporting women workers in the VR industry, including the international Women in VR networking group[xxxix], which has more than 9,000 members in its Facebook group[xl]. The New York Women in VR Meetup group has nearly 900 members[xli]. With a high representation of women decision makers, addressing persistent problems in social networking platforms, like sexism and harassment of women, can become a priority for the VR industry.

There is a great opportunity to make VR a force for good in the world. The industry is in a relatively early stage and the problems observed to date in Social VR can still be contained. Many researchers and industry players are interested in tackling real-world bias using VR and are actively exploring how to do so. The industry itself seems open to talking about and supporting diversity within its ranks. In addition to finding ways to use VR to reduce bias, hate, and harassment, now is a prime moment for industry actors and researchers to figure out how to prevent the spread of bias, hate, and harassment in Social VR platforms and VR experiences with significant social interaction, like games.

While Social VR is a rapidly growing industry with many potentially positive social benefits, problems with hate, bias, and harassment in other kinds of virtual worlds and online communities are well documented. Like social media platforms and multiplayer games, Social VR is also a technological platform that allows users to interact with people who are not in the same physical location. What has been learned from those fields should therefore be considered when assessing whether and how Social VR will enable socially problematic behavior in the future. Findings from research on video games and social media offer a roadmap for anticipating and addressing emerging problems with hate, bias, and harassment in social VR.

Furthermore, though video games and social media often seem disconnected from the “real life” of the physical world, researchers have long identified a feedback loop between real-world values and biases and the values and biases portrayed in video games or in online communities. The same sort of feedback loop exists between today’s increasingly sophisticated Social VR worlds and the physical world. Video games and social media provide cautionary tales for what could happen in Social VR if hate, bias, and harassment are not addressed early in the technology’s development.

Video Games and Virtual Worlds

Some of the earliest research on virtual worlds sounded the alarm about how biased visual representation of women characters and people of color in video games could affect general cultural attitudes. These concerns were rooted in an understanding that what happens in video games can affect the values that inform how people live their lives in the physical world, and vice versa. As Christine Ward Gailey argued in 1993[xlii]:

Games played in a society embody the values of the dominant culture; they are ways of reinforcing through play the behaviors and models of order rewarded or punished in the society… Games, then, particularly commercially successful ones, are apt to replicate in their structure the values and activities associated with the dominant ideology.

How different groups in society are portrayed in the “virtual” worlds of video games reflects and reinforces pre-existing biases held by members of society in the “real world,” such as racism and sexism. For example, research from the early 1990s onward has extensively documented the gender stereotyping of video game characters’ appearances. Women are usually thin and scantily clad with accentuated breasts (a trend exemplified in Tomb Raider protagonist Lara Croft) and men have large muscles and aggressive attitudes[xliii][xliv]. Psychological research among adolescents and college students shows that viewing these stereotypical portrayals can make real-world cases of sexism seem less shocking[xlv][xlvi] and promote the acceptance of “rape myths”[xlvii], like the belief that women who are victims of sexual assault deserve what happens to them.

Sexism in video games is not limited to professionally generated content. Research and news reporting on multiplayer online games have widely observed that women are regularly subjected to harassment from other players[xlviii][xlix]. Women players have developed coping mechanisms to guard against such behavior[l].

Sexual harassment in video games famously spilled over into real-world harassment during the Gamergate scandal. In 2014, in one high-profile moment of an ongoing series of episodes in which women in the gaming community were harassed for their views, gaming journalist Anita Sarkeesian was awarded the Ambassador Award at the Game Developers Choice Awards for her web series Tropes vs. Women in Video Games. The series explained sexist stereotypes in video gaming based on her long experience reporting on the industry. Following the announcement of her award, Sarkeesian and event organizers received bomb threats and death threats. The perpetrators justified their actions by alleging that Sarkeesian had undisclosed financial ties with other critics of the games industry[li][lii][liii].

The episode was one of several that were collectively referred to as #gamergate on social networks like Twitter. The name itself reflects an attempt to connect Sarkeesian’s alleged (and since debunked) industry ties to the 1970s Watergate political corruption scandal, as well as the general scandalous mood surrounding the harassment of other high-profile women in the games industry. Gamergate, as the episode is now called, showed that sexism in video games is not limited to the content. Video game fans defend, sometimes with threats of violence, the production and distribution of sexist content in video games. The sexist culture surrounding video games poses real-world threats of harassment, injury, and fear for women involved in the industry.

Racist imagery has also been identified as a problem in video games going back to the 1990s. For example, the popular 1990s 3D Realms Software games Duke Nukem 3D and Shadow Warrior are classic examples of how troubling stereotypes about race drive storytelling in video games[liv]. In Duke Nukem 3D, the game action is premised on eugenic panic about race mixing between invading aliens and white women in a mono-ethnic future Los Angeles. The main character is on a quest to stop the alien invaders in order to save the genetic purity of the human species. In Shadow Warrior, the main character’s stereotyped and generic “Asian” identity is accompanied by a skill set that is portrayed as biologically based and by extensive jokes about the character’s deficient masculinity.

Other researchers have identified similarly racist assumptions at work in the racialization of skills and the civilizational stakes of warfare that underwrite many popular fantasy role-playing games in the 2000s and 2010s, such as the Elder Scrolls, with roots in classic fantasy literature[lv]. Racial stereotypes and racialized violence are simulated in more realistic games as well, notably in the Grand Theft Auto series[lvi] and sports games[lvii].

As with sexist representations in video games, racist ideas seem to bleed through into the real world. Psychological research suggests, for example, that playing violent video games increases ethnocentrism and triggers heightened aggression when a player is presented with someone who is different from themselves[lviii]. Despite this, and despite activism within the industry from individuals like Anita Sarkeesian, hate in video games has become expected, potentially paving the way for the normalization of hate in other virtual worlds.

Social Media

Social media platforms like Facebook, Reddit, and Twitter have proven ripe for the spread of racist, sexist, and anti-Semitic content.

The research institute Data & Society has documented the development and impact of hate groups and the loosely organized communities that spread hateful ideas online, including men’s rights activists, the alt-right, and so-called “Gamergaters.” In a 2017 report[lix], the group identified the affordances of social media platforms as one factor contributing to these groups’ success, arguing that hate groups online have learned how to “leverage both the techniques of participatory culture and the affordances of social media to spread their various beliefs.” These groups, Data & Society said, amplify their impact by creating newsworthy content that is easy for time-strapped journalists to cover, and thus amplify. “Taking advantage of the opportunity the internet presents for collaboration, communication, and peer production, these groups target vulnerabilities in the news media ecosystem to increase the visibility of and audience for their messages,” it said.

Examples of hateful speech online are plentiful. Sexual harassment and hate speech against women make news when prominent women delete their social media presences. Culture writer Lindy West, for example, cited sexual harassment as her reason for abandoning her large Twitter following in January 2017[lx]. More recently, Star Wars: The Last Jedi actress Kelly Marie Tran deleted her Instagram posts to stop experiencing sexual and racially motivated harassment online[lxi].

Sometimes harassment against women online can be career- and life-threatening, as in the case of Chinese technology video blogger Naomi Wu. In her case, sexual harassment from online followers increased following reporting in early 2018 by United States media outlet Vice[lxii]. In their coverage, Vice released identifying information about Wu that could be used by both internet trolls and Chinese authorities to target her in the real world[lxiii]. She claims that she now fears for her freedom from government persecution, her physical safety, and her livelihood[lxiv].

Anti-Semitic hate speech and harassment have been persistent problems in social media as well. In the summer of 2016, for example, some Twitter users, including neo-Nazis[lxv], began using “triple parentheses,” written ((( ))), around the names of journalists and others whom they believed to be Jewish. This was meant as a threatening gesture and used to undermine the expertise and credibility of the targeted individuals[lxvi]. [Some Jewish Twitter users, however, now use the triple parentheses as a way to publicly claim their Jewish identity and reclaim power from such hateful actors.]

Also in 2016, Pepe the Frog[lxvii], originally a cartoon character with no racist or political implications, became identified with the so-called alt-right. Displaying Pepe the Frog allowed anti-Semitic social media users to find other like-minded individuals. The character became a fixture at real-world hate rallies in the United States as well, such as the Unite the Right rally in Charlottesville, Virginia in August 2017[lxviii]. The first phase of the Anti-Defamation League’s Online Hate Index provides additional documentation of anti-Semitic, sexist, and racist hate speech found on social media platforms[lxix].

Recognizing the links between internet hate and hate in the real world, some governments are beginning to act against online hate speech. For example, in January 2018, Germany began enforcing the Netzwerkdurchsetzungsgesetz (NetzDG), the first law of its kind in Europe. This new law requires social media platforms to remove most content that violates Germany’s strict anti-hate speech laws within 24 hours or face fines of up to €50 million ($58 million)[lxx]. Prohibited content includes pro-Nazi material. However, the law is still a work in progress. An amendment has been proposed that would allow users to review and respond to content removal in response to concerns that some material has been inappropriately deleted[lxxi].

Social media provides many examples of why it is important to track and fight hate, bias, and harassment in online communities. While social media has facilitated connections between people around the world, it has also become a hotbed of bias, hate, and harassment. Troublingly, these occurrences are not isolated or “only online.” Symbols that begin online can become symbols of real-world hate, and online harassment can lead to real-world harassment and even government persecution.

Lessons Learned

Numerous lessons emerge from these findings and controversies about hate, bias, and harassment in video games and social media, such as:

- Real-world bias and hate are often mirrored in video games and on digital social media platforms, and virtual bias can perpetuate real-world hate and harassment. Therefore, tackling real-world bias and hate requires tackling prejudice in social networks and in the video games industry, and vice versa.

- In online social networks and multiplayer games, users do not always uphold generally accepted social norms of civility and respect. Racist and sexist content may be present.

- Unmoderated social networking spaces can serve the purposes of hate groups.

- Moderation of online social spaces may be necessary to make digital social worlds inviting and bias-free. But because automated systems are influenced by human designers’ biases, moderation needs to be thoughtfully designed and executed by diverse teams trained to understand and guard against bias and hate.

Multiple forms of hate, bias, and harassment are emerging as problems in Social VR, including sexism, racism, and anti-Semitism. Some of these problems bear a strong resemblance to prior problems with hate, bias, and harassment in social media and video games. Given this strong resemblance to hate in other digital media contexts, it’s fair to say that this problem is likely to get worse without real, hands-on intervention from Social VR companies. One challenge: the scope of the problem is not clear. While it is unfortunate that hate is emerging in a new medium at all, the unique affordances of VR, such as immersion and presence, may also heighten the effect of hate upon those who are targeted. At the same time, there is nothing inherently hateful or harmful about the technology in and of itself, and it will be up to the Social VR community, both users and companies, to determine the way forward for this new and exciting medium.

The next section summarizes key incidents of hate, bias, and harassment that have been researched and reported to date in the new medium of Social VR, in an effort to highlight the real harms and threats that users are experiencing as this medium evolves. The section focuses on behavior that can be explicitly perceived externally as sexist, racist, anti-Semitic, and otherwise offensive. It is worth noting, however, that content that does not appear to fall explicitly into these categories can still be experienced by users as offensive in these ways and others, and that the examples mentioned in this section are only the most obvious and egregious examples of this behavior that have been reported to date.

Sexism and Sexual Harassment in Social VR

Since 2016, several high-profile episodes and studies of sexual harassment in Social VR have been reported. The affordances of the various platforms offer different opportunities for sexual harassment, and many platforms have seen problems with such behavior to date. While research done in collaboration with Oculus, a VR headset manufacturer now owned by Facebook, reports little evidence of sexual harassment in VR[lxxii], several high-profile controversies and other research provide a multifaceted account of harassment in virtual worlds. From pornographic images to sexually explicit drawings to simulated physical assault, Social VR can be highly threatening for women or anyone else who presents as feminine in virtual worlds.

VR Groping in QuiVR

One of the most infamous incidents occurred in the VR game QuiVR in October 2016. QuiVR is an archery game in which players are immersed in a virtual world with other human players. Players can accomplish game objectives on their own or as part of a team of players in the virtual game world at the same time. Other players are visible around each individual in the form of a pair of hands holding a bow and arrow and a head wearing a helmet.

Player Jordan Belmaire wrote about being “virtually groped” while playing the game in October 2016 with her husband and brother-in-law watching[lxxiii]. The only indication of her gender was her voice. As she wrote in a Medium post following the incident:

In between a wave of zombies and demons to shoot down, I was hanging out next to BigBro442, waiting for our next attack. Suddenly, BigBro442’s disembodied helmet faced me dead-on. His floating hand approached my body, and he started to virtually rub my chest.

“Stop!” I cried. I must have laughed from the embarrassment and the ridiculousness of the situation. Women, after all, are supposed to be cool, and take any form of sexual harassment with a laugh. But I still told him to stop.

This goaded him on, and even when I turned away from him, he chased me around, making grabbing and pinching motions near my chest. Emboldened, he even shoved his hand toward my virtual crotch and began rubbing.

There I was, being virtually groped in a snowy fortress with my brother-in-law and husband watching.

Belmaire responded by shouting at BigBro442 to stop. She became angrier and attempted to run away. The player followed her and continued to grope her until she left the virtual world. Belmaire described unexpectedly feeling violated during the incident. At the end of the post, she reflected that while women were allowed in VR spaces like the QuiVR world, they might not want to return if they faced such behavior.

The company’s CEO, Aaron Stanton, responded with a public statement[lxxiv], voicing his disappointment and vowing to take corrective action. Stanton said the company would explore how to “offer the tools to re-empower the player as it [abuse] happens.” Soon after, QuiVR updated its Personal Bubble “superpower” feature, which originally was designed to preserve the quality of the gameplay if players were hit by malicious bow and arrow shots from other players or if another player tried to obstruct their view. With the updates, when users enter a person’s bubble, their hands disappear from the view of the bubble user and they cannot interact with the person who activated the power[lxxv]. AltspaceVR includes a similar bubble feature that limits how close other players can get to a person’s avatar[lxxvi]. These features give individuals control over how close others can get to the “personal space” of their avatar, thereby preventing virtual groping and assault.

Personal space features may also play a more general role in promoting player comfort. Experimental psychology research has found that the ideal distance between users in virtual space is between 2 meters and 2.5 meters[lxxvii].
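The bubble behavior described above can be illustrated with a minimal sketch. This is not QuiVR’s or AltspaceVR’s actual implementation; the `Avatar` class, the function names, and the 2.0-meter default radius (borrowed from the lower end of the comfort range cited above) are all assumptions made for illustration.

```python
import math
from dataclasses import dataclass

# Hypothetical default radius in meters; a real platform would tune this value.
DEFAULT_BUBBLE_RADIUS_M = 2.0


@dataclass
class Avatar:
    """Illustrative avatar record: a name, a position, and bubble settings."""
    name: str
    x: float
    y: float
    z: float
    bubble_on: bool = False
    bubble_radius_m: float = DEFAULT_BUBBLE_RADIUS_M


def distance_m(a: Avatar, b: Avatar) -> float:
    """Straight-line distance between two avatar positions, in meters."""
    return math.dist((a.x, a.y, a.z), (b.x, b.y, b.z))


def inside_bubble(owner: Avatar, other: Avatar) -> bool:
    """True when `other` is within `owner`'s active personal bubble."""
    return owner.bubble_on and distance_m(owner, other) < owner.bubble_radius_m


def hands_visible_to(owner: Avatar, other: Avatar) -> bool:
    """Whether `other`'s hands should be rendered in `owner`'s view."""
    return not inside_bubble(owner, other)


def can_interact(owner: Avatar, other: Avatar) -> bool:
    """Whether `other` may touch or otherwise interact with `owner`."""
    return not inside_bubble(owner, other)
```

In this sketch, the decision is made from the bubble owner’s point of view: once the owner switches the bubble on, anyone who comes closer than the radius loses both visible hands and the ability to interact, and nothing changes for users who stay outside it.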

Sexual Harassment in VRChat

The ongoing issues in the platform VRChat provide additional examples of sexual harassment in Social VR. VRChat is an open social world in which the primary objective is for players to interact with each other, just like in a chatroom or public social media forum. Each user is represented by a full-body avatar designed by the player, and many users create avatars that imitate the physical human form. Players can share content, such as images or videos, with other players in the vicinity and alter their avatars during gameplay.

These features have been used to sexually harass people, often those identified by other users as women. For example, Twitch streamer Glacey made gamer news in March 2018 after being approached by a 10-foot-tall avatar altered to show male genitalia at the level of her avatar’s face[lxxviii]. Other users report that the content sharing features have been used to force other players to view sexually explicit photos, videos, and drawings by strangers[lxxix]. After receiving many complaints from users, in 2018 the company created a “panic button” whereby users can “mute” interactions and content from other users in their immediate area[lxxx].

To some observers of the industry, even these new systems are imperfect because they are reactive measures that still expose users to harassment. Plus, the solution requires the victims of harassment to protect themselves after exposure to hateful interactions or distasteful content, rather than pre-empting hate and harassment[lxxxi].

Harassment in To Be With Hamlet

To Be With Hamlet was a performance based on the William Shakespeare play, staged in the Social VR platform M3diate as part of the 2017 TriBeCa Film Festival’s Virtual Arcade. This play immersed viewers in a virtual world for a 12-minute performance. Performers acted out their parts off-site in a studio where their movement was recorded and relayed to the virtual world. Avatars in the world, replete with special effects like flames emerging from the feet of the ghost of Hamlet’s father, moved in correspondence with the actors’ movement. The actors could not see or hear what was happening around their virtual avatars and were completely focused on their performances. Viewers could see one another in the virtual performance space as a set of hands and a head.

However, as with Jordan Belmaire’s QuiVR experience, the limited visual representation of users in the space did not stop some individuals from harassing the actors’ avatars. During one performance, Javier Molina, a member of the creative team, reported seeing a virtual groping incident[lxxxii]:

The first time I noticed something unusual was when one of those blue avatars made a sexual innuendo of grabbing Hamlet’s genitalia and joking about it. Since I was not close to that avatar, I did not hear very clearly what was the context in which the joke was made, but the gestures I observed were sexual and to be considered as Virtual reality sexual harassment. The actor performing at the other end of the communication did not hear the sounds, so his dialog was not disturbed.

Molina noted that the technical affordances of the performance and anonymity of the spectators did not lessen the impact of witnessing such activity in a virtual space:

In this occasion, the inability of the actor to perceived the sexual harassment does not excuse the behavior perpetrated towards the actor. Unwanted sexual solicitation is a standard feature in cyberspaces where men and women can navigate anonymously, and VR was not going to be the exception.

At another performance later in the day, Molina also witnessed a spectator virtually choking and “killing” the character of Hamlet. While this violence was not explicitly sexual, it contributed to dampening the spirits of the team behind the piece. Following this incident, the M3diate team was spurred to consider the best moderation tools to address harassment and hate in their platform.

Research from The Extended Mind

The research firm The Extended Mind has conducted two studies of women and sexism in Social VR that begin to quantify the extent of harassment. In a 2018 survey of 600 regular VR users, researchers found that 49% of women and 36% of men reported experiencing sexual harassment. Harassment included everything from being shown lewd images to virtual groping and virtual assault[lxxxiii].

These findings corroborate a smaller 2017 study by the same researchers in which 13 women used Social VR for the first time in a laboratory setting. Participants reported that they received sexual comments and saw drawings of male genitalia in shared public spaces[lxxxiv]. For example, participants saw drawings of genitalia in shared spaces in AltspaceVR on two different occasions. In describing one incident, a woman user downplayed the shock value, chalking it up to an expected consequence of a space “ripe for parody”:

Someone had drawn a giant dick in the alt space drawing app. I was looking for landmarks to try and find [person] and I turned around and there was a giant green dick just drawn in space. And so I just said the first thing that came to me which is ‘oh, that’s a dick.’ And I think I heard someone else in the space too — not [person] — also say that. ‘Oh. There’s the dick.’ Like someone had drawn it and was looking for it, they had lost the giant dick... That space is ripe for parody, and for weird, funny shit to happen.

In response to sexual harassment, women users and others who choose to use feminine avatars use a variety of coping skills. For example, users may remain at the margins of public social spaces, avoid talking to other users, and mention romantic partners (real or fictitious) to prevent sexual advances[lxxxv]. Such behaviors mirror recent findings about how women navigate physical-world social spaces to avoid sexual harassment[lxxxvi]. Just as virtual harassment follows patterns similar to those in the physical world, so too do coping techniques.

Race, Racism, and Anti-Semitism

Racism, racist harassment, and anti-Semitic imagery are also a reality today in Social VR platforms. Precedents from game studies and recent news reports provide evidence of the potential and reality of racism and racist harassment in Social VR. In particular, games represent one way that racist ideas maintain a foothold in society. Racialized avatars in Social VR and characters in VR video games with social elements may perpetuate stereotypes about the links between ideas about race, appearance, and race-linked capacities.

By contrast, several psychology experts have argued, based on their experimental studies, that VR may instead provide a way to reduce racism. Nonetheless, the fact that the medium shows the potential to diminish racism in experiments does not erase incidents of hate that have been reported or remove the need to combat hate where it exists today among real users of Social VR platforms.

Social Psychology Research

Social psychology researchers at Stanford University and the University of Barcelona have conducted experiments that they argue demonstrate that VR helps reduce racism. The majority of these experiments involve putting individuals (predominantly or exclusively white individuals) into solo or social VR experiences where they use an avatar of the same or a different race. Most often, white individuals are placed in non-white avatars. This design is used to assess variables like implicit bias and outgroup threat assessment (the degree to which someone associates people different from themselves with danger and unfavorable stereotypes).

Early research of this kind found that putting people in avatars of races different from their own increases responses that are correlated with racist beliefs and behaviors[lxxxvii]. However, more recent research has found that letting participants embody avatars of a different race can sometimes reduce indicators of racism. Yee and Bailenson have called this the “walk a mile in digital shoes” phenomenon[lxxxviii], while other researchers refer to this as “the Proteus effect”[lxxxix]. These findings, however, have been inconsistent even within individual experiments and papers, with some assessment tools finding reductions of racism and others finding no difference[xc][xci][xcii]. This research may be salient for the design of Social VR because using or interacting with avatars of a different race than one’s own may have real-world consequences for how individuals respond to people similar to and different than themselves.

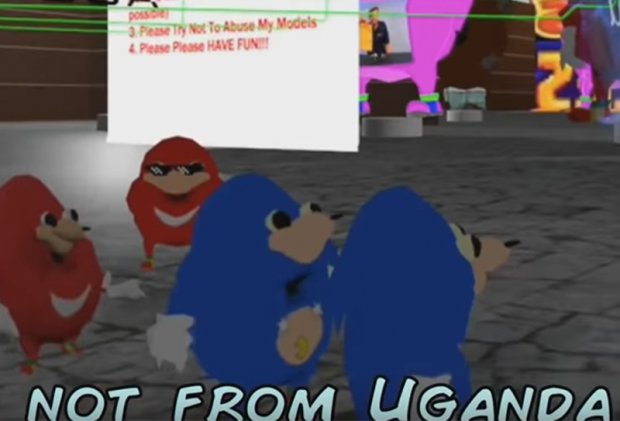

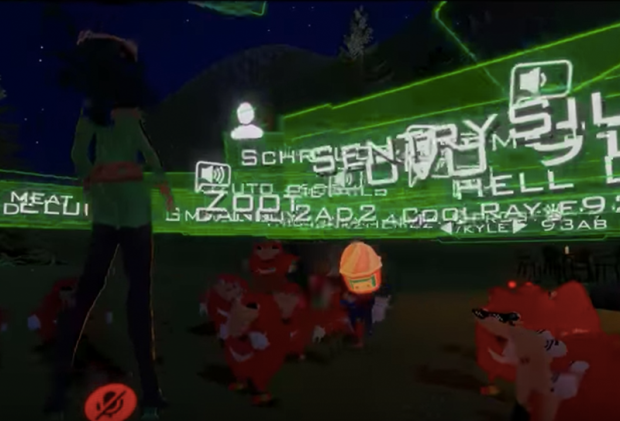

Ugandan Knuckles in VRChat

Among real-life users of Social VR, there have been numerous reports of racist imagery. The most famous example is Ugandan Knuckles[xciii][xciv]. Ugandan Knuckles is a character that has been extensively used in VRChat. Originally inspired by the Knuckles character in the Sonic the Hedgehog video game series, in VRChat “Ugandan Knuckles” users imitate a fake, stereotyped “Ugandan” accent and syntax and make clicking noises in imitation of Khoisan languages. Groups of users amass in public spaces in coordinated attacks, overwhelming other users and activities with their large numbers, making statements that mock African cultures and the intelligence of Black people, and conducting fake “Ugandan” rituals to find “the way.” Ugandan Knuckles attacks often target feminine avatars.

Though the image itself, like Pepe the Frog, has inoffensive origins, its use in VRChat seems to be motivated by a desire to dominate shared social space, driving other people out through the use of racist tropes.

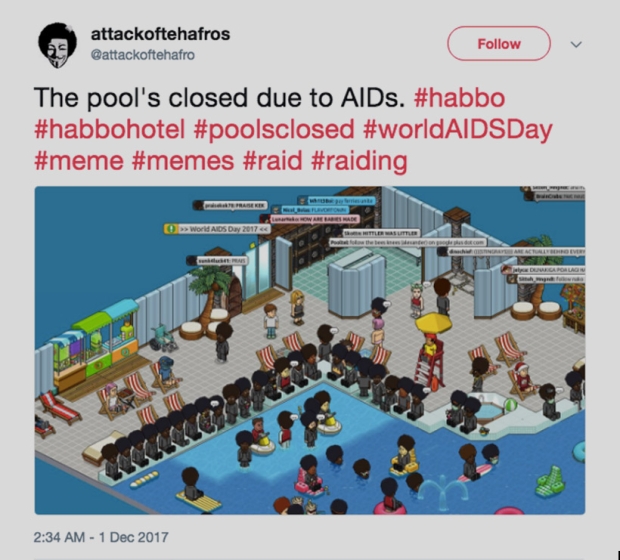

Ugandan Knuckles “raids” have been compared within the industry and among gamers to racist and anti-Semitic “pool’s closed” raids led by 4chan users in the Habbo Hotel two-dimensional virtual world starting in 2006[xcv]. Users would stand around the “pool” — typically using Black avatars with stereotyped features — to block access to the pool and intimidate and keep out other users. Users would sometimes leave comments like “Pool’s closed due to AIDS,” implying a link between Black people and ill health. In some actions, users positioned the bodies of their avatars to form swastikas in public spaces[xcvi].

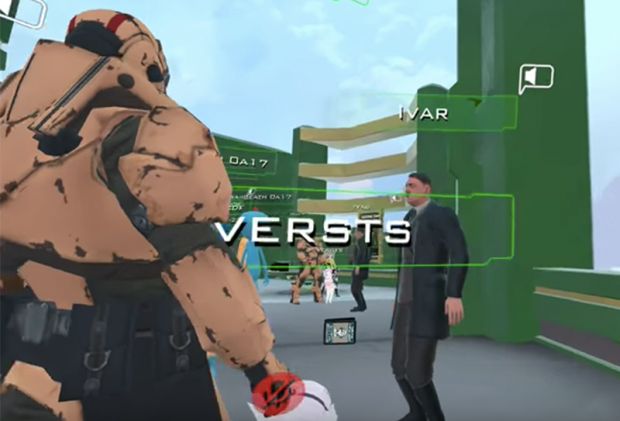

Nazi Imagery and Hitler Avatars

VRChat users are discussing the issue of Nazi imagery on the VRChat Steam community online discussion boards. Some users have expressed the belief that using Hitler avatars and other Nazi imagery should be allowed and should be regarded as humorous because it is already part of gaming culture[xcvii]. For example, one user posted a thread on December 8, 2017 that was titled “Offensive Avatars Allowed?” and wrote:

I didn't think these kinds of models would be allowed, but I've been seeing a pretty large amount of people running around like this, blasting nazi music.

Kind of seems strange when they warn people for saying "Begone thot" [a criticism that a user was being too politically correct] but don't punish genuine anti-semitism.

On January 8, another user replied:

"genuine"? how do you know they aren't just RPing as a character, which is actually a nazi, then what? punish them for making a character like that? that'd be like punishing anime because the japanese used to work with the nazis.

On January 10, a third user chimed in:

A day or two ago, I went on a chill lounge world, and then Christ on the cross floated by. Then became Hitler, complete with a custom heil emote. Another guy turned into Trump, clearly thinking "my moment has come", and then Hitler responded by turned into Kim Jong Un, and the two had a danceoff while the crowd egged them both on.

Probably the highlight of my experience on VRchat in the short time I've been on.

I say leave it be, but do give people the ability to self moderate and such. kick votes and ♥♥♥♥ exist for a reason, and room owners can have their own rules. But trying to enforce global bans on things is just kind of anti-fun.

The apparent tolerance for Nazi music and imagery in these messages suggests that anti-Semitic and otherwise hateful and offensive content is acceptable to some users. Videos and screenshots of VRChat interactions provide further evidence that Nazi imagery and music are used and tolerated by some users of the platform. In VRChat, users have the option to design a custom avatar, a process which is extensively documented and can take just a few minutes[xcviii].

As the conversation above suggests, several users have used this feature to design avatars that look like Hitler, allowing them to use the body of Hitler as their own and to appear as Hitler to other users. While using Hitler avatars, some users even broadcast Nazi songs or behave in ways that seem to be motivated by racism or sexism.

Research conducted by The Extended Mind corroborates the presence of racism and anti-Semitism in Social VR. In their 2018 survey of 600 VR users, 28% of men, 17% of women, and 29% of people with other gender identities reported receiving racist or homophobic comments in Social VR settings[xcix]. For example, one user reported receiving “racist statements because I am black.” Another user reported seeing “Nazi imagery in a shared space” in an unnamed Social VR platform.

The mixed results so far in psychological research regarding VR and race suggest that the impacts of racist imagery in virtual spaces may be unpredictable and require ongoing, careful study. The reports of racist imagery in VRChat that are already cropping up show that racism is becoming a problem in Social VR. And the links made by journalists and users to past anti-Semitic actions in virtual worlds, together with the evidence of tolerance for Nazi imagery in the VRChat community, suggest that it may only be a matter of time until this kind of offensive imagery is widespread in Social VR.

Future Issues for Social VR

Without intervention and appropriate preventive strategies, harassment, bias, and hate may grow and become the norm in Social VR spaces. Sexist imagery, harassment, and simulated assault may push women, LGBTQ people, and others out of these worlds, as they have on social media. This may discourage the very individuals who might be motivated to fix the problems of sexism, racism, and anti-Semitism from working in the industry. Without intervention, Social VR may become an environment in which hate, bias, and harassment of many kinds are accepted and perpetuated.

Just as troublingly for the current cultural moment, the full ramifications of the ability to embody people of different races, along with analogous opportunities to role-play a different gender, religion, culture, or ethnicity, are not yet clear. They may promote empathy and understanding, or they may instead strengthen bias. In cultural terms, role-playing another race or culture represents “cultural appropriation” and can lead to misrepresentation of certain communities and cultures[c]. In psychological terms, such role-playing may increase “outgroup threat assessment”[ci]. That is, groups that a user imitates, and that the user sees as different from themselves, can counterintuitively seem more threatening following racial, ethnic, or other forms of role-playing, not less. In other words, being embodied as another race (and other forms of cultural, racial, and ethnic imitation) may, in fact, heighten bias and hate. The potential for heightened levels of bias warrants careful consideration because VR is currently being positioned by some researchers and industry experts as a “solution” to racism[cii][ciii][civ].

Finally, the well-documented surge in anti-Semitism in online social networks and the ubiquitous presence of racist ideas in video games and gaming culture suggest that industry insiders need to be on guard against rising anti-Semitism in Social VR. Signs of this form of bias are already evident in Social VR discussion boards and warrant careful observation. These are multifaceted issues that the designers, storytellers, and engineers of virtual worlds will need to tackle in online communities where anti-Semitism is already evident or bubbling up.

What Comes Next for Social VR?

The future of Social VR is bright, but developing virtual communities that are welcoming spaces for a wide variety of people will require careful planning. On the positive side, early indications from psychological research suggest that the immersive qualities of VR may nudge people to become more empathetic, understanding, and helpful to those who are different. Further, the main draw of Social VR, like social media before it, is the potential it has to bring users together to form connection and community regardless of location and nationality and with the freedom to determine one’s self-presentation in virtual space. On the negative side, Social VR is maturing in the wake of decades of innovation and community growth in video games and social media. Some Social VR platforms seem to have inherited the racism, sexism, and anti-Semitism that have been documented in video games and social media. It is a hopeful sign that the Social VR companies affected have already begun implementing moderation tools intended to reverse these trends. The big question is: will the positives of VR outweigh the negatives to make Social VR a force for good in society?

The Social VR industry is growing in tandem with the development of other uses of VR, including games, workplace productivity tools, design and architecture applications, and VR for health. Innovation in these adjacent sectors of the VR industry may uncover more ways that virtual immersion can positively shape social interactions and further expand the tools available to develop and sustain enjoyable communities in Social VR.

Bias, hate, and harassment are not the only challenges facing the industry. Slow adoption of consumer headsets has created a limited demand for content, but new, lower-cost headsets may make the technology and content more appealing and accessible in 2018. For Social VR in particular, where interacting with other users is an integral component of the experience, broad adoption is a prerequisite for lively social worlds. Because this is a new industry with new technologies, talent development has also been identified as a factor that may slow the growth of both hardware and software companies[cv].

Another wildcard for the Social VR industry is the effect of the European Union’s General Data Protection Regulation (GDPR). This rule introduced new standards for data privacy when it went into full effect in May 2018, making it harder to share data between platforms and companies and limiting how collected data can be used and stored. The limits on how companies can use the data of EU citizens may impact advertising-driven business models now common in digital media. In VR, this may affect the kinds of body, gaze, and environment tracking data companies can collect and use[cvi]. Because of GDPR, Facebook-owned Oculus has updated its terms of service to limit how Oculus-collected data can be shared with Facebook for ad targeting[cvii]. In the coming months, the industry as a whole will undoubtedly consider what data is necessary to collect and how to target VR content to users.

Finally, it will be important to monitor the development of moderation techniques designed to deal with hate, bias, and harassment in Social VR. As social media platforms have grown, hate has become a normal part of many users’ experiences. Since we are still in the early days of Social VR, there is an opportunity to preempt the development of similarly toxic cultures in up-and-coming virtual worlds.

To date, a variety of moderation tools have been implemented or proposed, including:

- Personal space management features

- Blocking features

- Features that limit interaction with large groups of strangers

- User reporting of harassment and hate

- Active moderation by company representatives

- Machine learning-based techniques to automate the identification and removal of hateful content and harassers

- A combination of automated detection and human review of hateful content

ADL’s Center for Technology and Society will continue to monitor and research tools and features as Social VR develops. In particular, CTS will monitor the development of new policies to understand and assess:

- What tools industry leaders and observers predict will be effective in curbing hate, bias, and harassment in Social VR.

- What values will be prioritized in the development of moderation tools. For example, will moderation tools allow individuals to filter hate from their personal experience of Social VR, or will they remove hate from all shared social spaces? Will moderation tools be proactive (preventing hate) or reactive (removing hate once it is shared and reported)?

- Which groups in society (e.g. women, people of color, people with disabilities) will be considered in designing moderation tools.

- The efficacy of different approaches to prevention and moderation of hate, bias, and harassment in Social VR.

The Social VR industry has an exciting future ahead of it. With proactive development of tools and technologies to limit hate by a diverse community of technologists and other professionals, the new generation of social virtual worlds can be a welcoming, collaborative place for all.

Bibliography

[i] Grand View Research. "Virtual Reality (VR) Market Analysis By Device, By Technology, By Component, By Application (Aerospace & Defense, Commercial, Consumer Electronics, Industrial, & Medical), By Region, And Segment Forecasts, 2018 - 2025.” May 2017. https://www.grandviewresearch.com/industry-analysis/virtual-reality-vr-market.

[ii] Zion Market Research. "Global (VR) Virtual Reality Market Size Forecast to Reach USD 26.89 Billion by 2022." GlobeNewswire News Room. 1 May 2018. https://globenewswire.com/news-release/2018/05/01/1494026/0/en/Global-VR-Virtual-Reality-Market-Size-Forecast-to-Reach-USD-26-89-Billion-by-2022.html

[iii] McCaskill, Steve. "VR and AR Device Market to Reach $1.8bn in 2018." TechRadar. 10 April 2018. https://www.techradar.com/news/vr-and-ar-device-market-to-reach-dollar18bn-in-2018.

[iv] Digi-Capital. "Ubiquitous $90 Billion AR to Dominate Focused $15 Billion VR by 2022." Digi-Capital Blog, January 2018. https://www.digi-capital.com/news/2018/01/ubiquitous-90-billion-ar-to-dominate-focused-15-billion-vr-by-2022/#.WyFUHdMrI_V.

[v] Kahn, Jeremy. "Virtual Reality Companies Navigate 'The Trough of Disillusionment'." Bloomberg.com, 21 April 2017. https://www.bloomberg.com/news/articles/2017-04-21/virtual-reality-companies-navigate-the-trough-of-disillusionment.

[vi] Statista. “Number of active virtual reality users worldwide from 2014 to 2018 (in millions).” 2018. https://www.statista.com/statistics/426469/active-virtual-reality-users-worldwide/

[vii] Ffiske, Thomas. “The State of Immersive Reality in 2018.” VRFocus, 1 March 2018. https://www.vrfocus.com/2018/01/the-state-of-immersive-reality-in-2018/

[viii] Cummings, James J. and Jeremy N. Bailenson. “How Immersive Is Enough? A Meta-Analysis of the Effect of Immersive Technology on User Presence.” Media Psychology, 19:2 (2016): 272-309. DOI: 10.1080/15213269.2015.1015740

[ix] Ahn, Sun Joo, Amanda Minh Tran Le, and Jeremy Bailenson. “The Effect of Embodied Experiences on Self-Other Merging, Attitude, and Helping Behavior.” Media Psychology 16 (2013): 7-38. https://doi.org/10.1080/15213269.2012.755877

[x] Buchleitner, Jessica. “When Virtual Reality Feels Real, So Does the Sexual Harassment.” Salon, 21 April 2018. https://www.revealnews.org/article/when-virtual-reality-feels-real-so-does-the-sexual-harassment/

[xi] Milk, Chris. "How Virtual Reality Can Create the Ultimate Empathy Machine." TED: Ideas worth Spreading. March 2015. https://www.ted.com/talks/chris_milk_how_virtual_reality_can_create_the_ultimate_empathy_machine.

[xii] Arora, Gabo, and Chris Milk. "Clouds over Sidra." Here Be Dragons, 2015. https://www.dragons.org/clouds-over-sidra/

[xiii] Robertson, Adi. "The UN Wants to See How Far VR Empathy Will Go." The Verge. September 19, 2016. https://www.theverge.com/2016/9/19/12933874/unvr-clouds-over-sidra-film-app-launch.

[xiv] Turner, Fred. From Counterculture to Cyberculture: Stewart Brand, the Whole Earth Network, and the Rise of Digital Utopianism. University of Chicago Press: Chicago. 2006.

[xv] Baym, Nancy. Tune in, Log on: Soaps, Fandom, and Online Community. SAGE Publications, Inc. 2000. http://dx.doi.org/10.4135/9781452204710

[xvi] Tufekci, Zeynep. Twitter and Tear Gas: The Power and Fragility of Networked Protest. Yale University Press: New Haven, CT. 2017.

[xvii] Data & Society. Media Manipulation and Disinformation Online. New York, NY. 2017. https://datasociety.net/output/media-manipulation-and-disinfo-online/

[xviii] Anti-Defamation League. “The Online Hate Index.” January 2018. https://www.adl.org/resources/reports/the-online-hate-index

[xix] Chakrabarti, Samidh. “Hard Questions: What Effect Does Social Media Have on Democracy?” Facebook News, 22 January 2018. https://newsroom.fb.com/news/2018/01/effect-social-media-democracy/

[xx] Howard, Phillip. “Is Social Media Killing Democracy?” Oxford Internet Institute, 15 November 2016. https://www.oii.ox.ac.uk/blog/is-social-media-killing-democracy/

[xxi] Yee, Nick and Jeremy Bailenson. “Walk a Mile in Digital Shoes: The Impact of Embodied Perspective-Taking on the Reduction of Negative Stereotyping in Immersive Virtual Environments.” Proceedings of PRESENCE, 2006.

[xxii] Yee, Nick and Jeremy Bailenson. “The Proteus Effect: The Effect of Transformed Self-Representation on Behavior.” Human Communication Research 33:3 (2007): 271–290. https://doi.org/10.1111/j.1468-2958.2007.00299.x

[xxiii] Macknik, Stephen L. “A Virtual Trick to Remove Racial Bias.” Illusion Chasers: Scientific American Blog Network. 14 June 2017. https://blogs.scientificamerican.com/illusion-chasers/a-virtual-trick-to-remove-racial-bias/

[xxiv] Salmanowitz, Natalie. “The Impact of Virtual Reality on Implicit Racial Bias and Mock Legal Decisions.” Journal of Law and the Biosciences (2018): 174-203. https://doi.org/10.1093/jlb/lsy005

[xxv] Clark, Liat. “Could VR ‘Solve’ Racism? Headsets May Be Trialled as a Way to Undo Bias in the Police.” Wired 2016. http://www.wired.co.uk/article/alexandra-ivanovitch-simorga-virtual-reality

[xxvi] Groom, Victoria, Jeremy N. Bailenson, and Clifford Nass. “The Influence of Racial Embodiment on Racial Bias in Immersive Virtual Environments.” Social Influence (2009): 1-18. https://doi.org/10.1080/15534510802643750

[xxvii] Oh, Soo Youn, Jeremy Bailenson, Erika Weisz, and Jamil Zaki. “Virtually Old: Embodied Perspective Taking and the Reduction of Ageism under Threat.” Computers in Human Behavior 60 (2016): 398-410. http://doi.org/10.1016/j.chb.2016.02.007

[xxviii] Rosenberg, Robin S., Shawnee L. Baughman, and Jeremy N. Bailenson. “Virtual Superheroes: Using Superpowers in Virtual Reality to Encourage Prosocial Behavior.” PLoS ONE 8, no 1 (2013): e55003. https://doi.org/10.1371/journal.pone.0055003

[xxix] Shoah Foundation. “Lala (360).” University of Southern California: iWitness. 2017. http://iwitness.usc.edu/SFI/Sites/360/

[xxx] “Anne Frank House VR.” 2018. https://www.oculus.com/experiences/gear-vr/1596151970428159/

[xxxi] Hayden, Scott. “‘Nazi VR’ Documentary Shows How VR is Helping to Convict Nazi War Criminals.” Road to VR, 15 December 2017. https://www.roadtovr.com/nazi-vr-documentary-shows-vr-helping-convict-nazi-war-criminals/

[xxxii] Mel Films. "Nazi VR: The High-Tech Prosecution of a World War II War Criminal." MEL Magazine, 13 December 2017. https://melmagazine.com/nazi-vr-the-high-tech-prosecution-of-a-world-war-ii-war-criminal-e1d58a0d5964

[xxxiii] United States Holocaust Memorial Museum. “Holocaust Education in the Digital Age.” 19 April 2017. https://www.ushmm.org/information/about-the-museum/museum-publications/memory-and-action/holocaust-education-in-the-digital-age

[xxxiv] Hollender, Allison. “This Company is Fighting Sexual Harassment with VR.” VR Scout, 9 November 2017. https://vrscout.com/news/vr-stop-sexual-harassment/

[xxxv] Florentine, Sharon. “How VR Can Improve Anti-Harassment Training.” CIO, 9 February 2018. https://www.cio.com/article/3254188/it-skills-training/how-vr-can-improve-anti-harassment-training.html

[xxxvi] Vantage Point. “Our Product.” 2018. https://www.tryvantagepoint.com/vantage-point-sexual-harassment-training-our-product/

[xxxvii] Evans, Dayna. “In Virtual Reality, Women Run the World.” The Cut, September 2016. https://www.thecut.com/2016/09/virtual-reality-women-run-the-world-c-v-r.html

[xxxviii] Gajsek, Dejan. “Women of VR - 35 Ladies Who Are Killing It in Virtual Reality.” VIAR360, 9 June 2017. https://www.viar360.com/blog/women-of-vr/

[xxxix] Women in Virtual Reality. 2018. http://www.wivr.net/

[xl] Duong, Jenn. "Women in VR/AR." Facebook. 2015. https://www.facebook.com/groups/womeninvr/

[xli] Stevenson, Sarah. "NY Women in VR (New York, NY)." Meetup.com, 2018. https://www.meetup.com/NY-Women-In-VR/

[xlii] Gailey, Christine Ward. “Mediated Messages: Gender, Class, and Cosmos in Home Video Games.” The Journal of Popular Culture 27, no. 1 (Summer 1993): 81-98. https://doi.org/10.1111/j.0022-3840.1993.845217931.x

[xliii] Burgess, Melinda C. R., Steven Paul Stermer, and Stephen R. Burgess. “Sex, lies, and video games: The portrayal of male and female characters on video game covers.” Sex Roles 57:5-6 (2007): 419-433. http://dx.doi.org/10.1007/s11199-007-9250-0

[xliv] Dill, Karen E., Brian P. Brown, and Michael A. Collins. “Effects of Exposure to Sex-Stereotyped Video Game Characters on Tolerance of Sexual Harassment.” Journal of Experimental Social Psychology 44, no. 5 (September 2008): 1402-1408. https://doi.org/10.1016/j.jesp.2008.06.002

[xlv] Dill, Karen E. and Kathryn P. Thill. “Video Game Characters and the Socialization of Gender Roles: Young People’s Perceptions Mirror Sexist Media Depictions.” Sex Roles 57 (2007): 851–864. https://doi.org/10.1007/s11199-007-9278-1

[xlvi] Dill, Karen E., Brian P. Brown, and Michael A. Collins. “Effects of Exposure to Sex-Stereotyped Video Game Characters on Tolerance of Sexual Harassment.” Journal of Experimental Social Psychology 44, no. 5 (September 2008): 1402-1408. https://doi.org/10.1016/j.jesp.2008.06.002

[xlvii] Beck, Victoria Simpson, Stephanie Boys, Christopher Rose, Erick Beck. “Violence Against Women in Video Games: A Prequel or Sequel to Rape Myth Acceptance?” Journal of Interpersonal Violence 27, no. 15 (October 2012): 3016-3031. https://doi.org/10.1177/0886260512441078

[xlviii] O’Halloran, Kate. “‘Hey dude, do this’: the last resort for female gamers escaping online abuse.” The Guardian, 23 October 2017. https://www.theguardian.com/culture/2017/oct/24/hey-dude-do-this-the-last-resort-for-female-gamers-escaping-online-abuse

[xlix] Singal, Jesse. “What Sexual Harassment Does to Female Gamers.” The Cut, 23 March 2016. https://www.thecut.com/2016/03/what-sexual-harassment-does-to-female-gamers.html

[l] Cole, Amanda. “‘I Can Defend Myself’: Women’s Strategies for Coping with Harassment while Gaming Online.” Games and Culture 12, no. 2 (March 2017): 136-155. https://doi.org/10.1177/1555412015587603

[li] Liss-Schultz, Nina. “This Woman Was Threatened With Rape After Calling Out Sexist Video Games—and Then Something Inspiring Happened.” Mother Jones, 20 May 2014. https://www.motherjones.com/media/2014/05/pop-culture-anita-sarkeesian-video-games-sexism-tropes-online-harassement-feminist/

[lii] Parkin, Simon. “Gamergate: A Scandal Erupts in the Video-Game Community.” The New Yorker, 17 October 2014. https://www.newyorker.com/tech/elements/gamergate-scandal-erupts-video-game-community

[liii] Data & Society. Media Manipulation and Disinformation Online. New York, NY. 2017. https://datasociety.net/output/media-manipulation-and-disinfo-online/

[liv] Shiu, Anthony Sze-Fai. “What Yellowface Hides: Video Games, Whiteness, and the American Racial Order.” The Journal of Popular Culture 39, no. 1 (2006): 109-125. https://doi.org/10.1111/j.1540-5931.2006.00206.x

[lv] Poor, Nathaniel. “Digital Elves as a Racial Other in Video Games: Acknowledgement and Avoidance.” Games and Culture 7, no. 5 (September 2012): 375-396. https://doi.org/10.1177/1555412012454224

[lvi] Everett, Anna and S. Craig Watkins. “The Power of Play: The Portrayal and Performance of Race in Video Games.” In The Ecology of Games: Connecting Youth, Games, and Learning. Edited by Katie Salen. The John D. and Catherine T. MacArthur Foundation Series on Digital Media and Learning. Cambridge, MA: The MIT Press, 2008. 141-166.

[lvii] Chan, Dean. “Playing with Race: The Ethics of Racialized Representations in E-Games.” International Review of Information Ethics 4 (2005): 24-30.

[lviii] Greitemeyer, Tobias. “Playing Violent Video Games Increases Intergroup Bias.” Personality and Social Psychology Bulletin 40, no. 1 (January 2014): 70-78. https://doi.org/10.1177/0146167213505872

[lix] Data & Society. Media Manipulation and Disinformation Online. New York, NY. 2017. https://datasociety.net/output/media-manipulation-and-disinfo-online/

[lx] West, Lindy. “I’ve left Twitter. It is unusable for anyone but trolls, robots and dictators.” The Guardian, 3 January 2017. https://www.theguardian.com/commentisfree/2017/jan/03/ive-left-twitter-unusable-anyone-but-trolls-robots-dictators-lindy-west

[lxi] “Star Wars actress Kelly Marie Tran deletes Instagram posts after abuse.” BBC News, 6 June 2018. https://www.bbc.com/news/world-asia-44379473

[lxii] Emerson, Sarah. “Shenzhen’s Homegrown Cyborg.” Motherboard, 25 March 2018. https://motherboard.vice.com/en_us/article/3kjqdb/naomi-wu-sexy-cyborg-profile-shenzhen-maker-scene

[lxiii] Gaudette, Emily. “Meet Naomi Wu, Target of an American Tech Bro Witchhunt.” Newsweek, 7 November 2017. http://www.newsweek.com/naomi-wu-sexy-cyborg-misogyny-silicon-valley-704372

[lxiv] General, Ryan. “Why Vice’s Reporting on Naomi Wu Could Get Her Arrested in China.” Nextshark, April 2018. https://nextshark.com/naomi-wu-vice-controversy/

[lxv] Gunaratna, Shanika. “Neo-Nazis tag (((Jews))) on Twitter as hate speech, politics collide.” CBS News, 10 June 2016. https://www.cbsnews.com/news/neo-nazis-tag-jews-on-twitter-harassment-hate-speech-politics/

[lxvi] Waldman, Katy. “(((The Jewish Cowbell))): Unpacking a Gross New Meme From the Alt-Right.” Slate, 2 June 2016. http://www.slate.com/blogs/lexicon_valley/2016/06/02/the_jewish_cowbell_the_meaning_of_those_double_parentheses_beloved_by_trump.html

[lxvii] Anti-Defamation League Hate Symbols Database. “Pepe the Frog.” 2017. https://www.adl.org/education/references/hate-symbols/pepe-the-frog

[lxviii] Heim, Eric. “Recounting a day of rage, hate, violence and death.” The Washington Post, 14 August 2017. https://www.washingtonpost.com/graphics/2017/local/charlottesville-timeline/

[lxix] Anti-Defamation League. “The Online Hate Index.” January 2018. https://www.adl.org/resources/reports/the-online-hate-index

[lxx] BBC News. “Germany Starts Enforcing Hate Speech Law.” 1 January 2018. https://www.bbc.com/news/technology-42510868

[lxxi] Thomasson, Emma. “Germany looks to revise social media law as Europe watches.” Reuters, 8 March 2018. https://www.reuters.com/article/us-germany-hatespeech/germany-looks-to-revise-social-media-law-as-europe-watches-idUSKCN1GK1BN

[lxxii] Shriram, Ketaki and Raz Schwartz. “All Are Welcome: Using VR Ethnography to Explore Harassment Behavior In Immersive Social Virtual Reality.” IEEE VR. Los Angeles, USA: March 2017. https://doi.org/10.1109/VR.2017.7892258

[lxxiii] Belamire, Jordan. “My First Virtual Reality Groping.” Medium, 2016. https://medium.com/athena-talks/my-first-virtual-reality-sexual-assault-2330410b62ee

[lxxiv] Stanton, Aaron. “Dealing with Harassment in VR.” UploadVR, 25 October 2016. https://uploadvr.com/dealing-with-harassment-in-vr/

[lxxv] D'Anastasio, Cecilia. “VR Developers Add 'Superpower' To Their Game To Fight Harassment.” Kotaku, 26 October 2016. https://kotaku.com/vr-developers-add-personal-bubble-to-their-game-to-fi-1788237241

[lxxvi] AltspaceVR. “Introducing a Space Bubble.” 13 July 2016. https://altvr.com/introducing-space-bubble/

[lxxvii] Bönsch, Andrea, Sina Radke, Heiko Overath, Laura M. Asché, Jonathan Wendt, Tom Vierjahn, Ute Habel, and Torsten W. Kuhlen. “Social VR: How Personal Space is Affected by Virtual Agents’ Emotions.” Proceedings of the IEEE Virtual Reality Conference, 2018.

[lxxviii] Gault, Matthew. “VRChat Added a Panic Button to Deal with Porn Trolls.” Motherboard, 1 March 2018. https://motherboard.vice.com/en_us/article/xw5k5z/vrchat-added-panic-button-to-deal-with-porn-trolls

[lxxix] Outlaw, Jessica. “Virtual Harassment: The Social Experience of 600+ Regular Virtual Reality Users.” 2018. https://extendedmind.io/blog/2018/4/4/virtual-harassment-the-social-experience-of-600-regular-virtual-reality-vrusers

[lxxx] Gault, Matthew. “VRChat Added a Panic Button to Deal with Porn Trolls.” Motherboard, 1 March 2018. https://motherboard.vice.com/en_us/article/xw5k5z/vrchat-added-panic-button-to-deal-with-porn-trolls

[lxxxi] Personal communication, Jesse Damiani, VRScout. 2018.

[lxxxii] Molina, Javier. Personal communication. 11 June 2018.

[lxxxiii] Outlaw, Jessica. “Virtual Harassment: The Social Experience of 600+ Regular Virtual Reality Users.” 2018. https://extendedmind.io/blog/2018/4/4/virtual-harassment-the-social-experience-of-600-regular-virtual-reality-vrusers

[lxxxiv] Outlaw, Jessica and Beth Duckles. “Why Women Don’t Like Social Virtual Reality: A Study of Safety, Usability, and Self-Expression in Social VR.” 2017. https://static1.squarespace.com/static/56e315ede321404618e90757/t/59e4cb58fe54ef13dae231a1/1508166522784/The+Extended+Mind_Why+Women+Don%27t+Like+Social+VR_Oct+16+2017.pdf

[lxxxv] Outlaw, Jessica. “Virtual Harassment: The Social Experience of 600+ Regular Virtual Reality Users.” 2018. https://extendedmind.io/blog/2018/4/4/virtual-harassment-the-social-experience-of-600-regular-virtual-reality-vrusers

[lxxxvi] Hirst, Alison and Christina Schwabenland. “Doing Gender in the ‘New Office’.” Gender, Work & Organization 25:2 (March 2018): 159-176. https://doi.org/10.1111/gwao.12200

[lxxxvii] Groom, Victoria, Jeremy N. Bailenson, and Clifford Nass. “The Influence of Racial Embodiment on Racial Bias in Immersive Virtual Environments.” Social Influence (2009): 1-18. https://doi.org/10.1080/15534510802643750

[lxxxviii] Yee, Nick and Jeremy Bailenson. “Walk a Mile in Digital Shoes: The Impact of Embodied Perspective-Taking on the Reduction of Negative Stereotyping in Immersive Virtual Environments.” Proceedings of PRESENCE, 2006.

[lxxxix] Yee, Nick and Jeremy Bailenson. “The Proteus Effect: The Effect of Transformed Self-Representation on Behavior.” Human Communication Research 33:3 (2007): 271–290. https://doi.org/10.1111/j.1468-2958.2007.00299.x

[xc] Maister, Lara, Mel Slater, Maria V. Sanchez-Vives, and Manos Tsakiris. “Changing Bodies Changes Minds: Owning Another Body Affects Social Cognition.” Trends in Cognitive Sciences 19, no. 1 (January 2015): 6-12. https://doi.org/10.1016/j.tics.2014.11.001

[xci] Banakou, Domna, Parasuram D. Hanumanthu, and Mel Slater. “Virtual Embodiment of White People in a Black Virtual Body Leads to a Sustained Reduction in Their Implicit Racial Bias.” Frontiers in Human Neuroscience 10 (November 2016). https://doi.org/10.3389/fnhum.2016.00601

[xcii] Hasler, Béatrice S., Bernhard Spanlang, Mel Slater. “Virtual Race Transformation Reverses Racial In-Group Bias.” PLoS ONE 12, no. 4 (2017): e0174965. https://doi.org/10.1371/journal.pone.0174965

[xciii] Alexander, Julia. “Understanding Ugandan Knuckles in a post-Pepe the Frog World.” Polygon, 2 February 2018. https://www.polygon.com/2018/2/2/16951684/ugandan-knuckles-pepe-frog-meme-vrchat

[xciv] Alexander, Julia. “‘Ugandan Knuckles’ is overtaking VRChat.” Polygon. 8 January 2018. https://www.polygon.com/2018/1/8/16863932/ugandan-knuckles-meme-vrchat

[xcv] "Pool's Closed." Know Your Meme. June 03, 2018. http://knowyourmeme.com/memes/pools-closed.

[xcvi] "[Image - 148224] | Pool's Closed." Know Your Meme. February 22, 2018. http://knowyourmeme.com/photos/148224-pools-closed

[xcvii] "Offensive Avatars Allowed? :: VRChat General Discussions." Steam Community. December 18, 2017. https://steamcommunity.com/app/438100/discussions/0/2906376154328822986/.

[xcviii] VRChat. “Avatars.” 2018. https://docs.vrchat.com/docs/avatars

[xcix] Outlaw, Jessica. “Virtual Harassment: The Social Experience of 600+ Regular Virtual Reality Users.” Extended Mind, 2018. https://extendedmind.io/blog/2018/4/4/virtual-harassment-the-social-experience-of-600-regular-virtual-reality-vrusers

[c] Shiu, Anthony Sze-Fai. “What Yellowface Hides: Video Games, Whiteness, and the American Racial Order.” The Journal of Popular Culture 39, no. 1 (2006): 109-125. https://doi.org/10.1111/j.1540-5931.2006.00206.x

[ci] Groom, Victoria, Jeremy N. Bailenson, and Clifford Nass. “The Influence of Racial Embodiment on Racial Bias in Immersive Virtual Environments.” Social Influence (2009): 1-18. https://doi.org/10.1080/15534510802643750

[cii] Clark, Liat. “Could VR ‘Solve’ Racism? Headsets May Be Trialled as a Way to Undo Bias in the Police.” Wired 2016. http://www.wired.co.uk/article/alexandra-ivanovitch-simorga-virtual-reality

[ciii] Salmanowitz, Natalie. “The Impact of Virtual Reality on Implicit Racial Bias and Mock Legal Decisions.” Journal of Law and the Biosciences (2018): 174-203. https://doi.org/10.1093/jlb/lsy005

[civ] Macknik, Stephen L. “A Virtual Trick to Remove Racial Bias.” Illusion Chasers: Scientific American Blog Network. 14 June 2017. https://blogs.scientificamerican.com/illusion-chasers/a-virtual-trick-to-remove-racial-bias/

[cv] Hall, Stefan, and Ryo Takahashi. "Augmented and Virtual Reality: The Promise and Peril of Immersive Technologies." McKinsey & Company. October 2017. https://www.mckinsey.com/industries/media-and-entertainment/our-insights/augmented-and-virtual-reality-the-promise-and-peril-of-immersive-technologies.

[cvi] Addis, Maria Chiara and Maria Kutar. “The General Data Protection Regulation (GDPR), Emerging Technologies and UK Organisations: Awareness, Implementation and Readiness.” UKAIS Conference Proceedings (2018). https://www.ukais.org/resources/Documents/ukais%202018%20proceedings%20papers/paper_39.pdf

[cvii] Robertson, Adi. "Oculus Is Adding a 'Privacy Center' to Meet EU Data Collection Rules." The Verge. April 19, 2018. https://www.theverge.com/2018/4/19/17253706/oculus-gdpr-privacy-center-terms-of-service-update

Codes of Conduct