Meta CEO Mark Zuckerberg posted a video to Facebook in January 2025 announcing dramatic changes to the company’s content moderation policies.

In the clip, the 40-year-old executive shared his commitment to restoring free expression and to making sure that “people can share their beliefs and experiences” on Meta platforms.

In reality, our research has shown that these policy changes have resulted in a dramatic increase in antisemitism and extremism on Meta’s social media platforms, at least as it relates to certain populations. Malicious actors have capitalized on this more permissive operating environment, exploiting platform features to promote hateful and antisemitic rhetoric, foment material support for foreign terrorist organizations, and sell merchandise that incorporates explicit hate symbols.

According to a 2025 Pew Research study, 80% of American adults under 30 use Instagram, and a 2024 Pew study found that 50% of kids between the ages of 13-17 use Instagram daily, with 12% reporting that they use it “almost constantly.”

Meta’s content moderation choices risk turning Instagram into a hub for hate and antisemitism that puts offline communities at risk. The hate that Instagram users consume online does not stay there. A lack of strong enforcement and protections against extremist and antisemitic rhetoric imperils offline communities by bolstering the ranks of extremist movements, spreading foreign terrorist messaging, and financing extremist activities.

Nick Fuentes, the Groypers, and Collaborative Posts

In May 2025, white supremacist Nick Fuentes lamented on X that he had been banned from Instagram since 2021, complaining of how he had been “blacklisted, slandered, and suppressed,” which has significantly impacted his career. Despite this ban, Fuentes noted that his content continues to spread on Instagram, writing on Telegram that Instagram “relaxed its content moderation” and had “stopped taking my clips down.”

Fuentes claimed that his “team” and other Groypers were posting his content on the platform, resulting in him becoming “Instagram famous, getting stopped in public by people who saw the clips that were getting millions of views.”

While one should not generally take white supremacists at their word, an investigation by the ADL Center on Extremism (COE) supports Fuentes’s contention: Despite his claim of being personally banned from creating an Instagram account, his content still floods the platform, amplified by a network of accounts that boost each other and his hateful rhetoric. This allows Fuentes to reach a larger audience than he could on his own and exposes his message to people who likely would not otherwise encounter it.

COE has identified at least 105 Instagram accounts affiliated with Fuentes’s Groyper movement, which combined had over 1.4 million followers as of January 2026. Their posts, which are published with collaborators, garner hundreds to thousands of likes and up to millions of views.

In order to elevate their profiles and maximize their content’s reach, Groyper accounts capitalize on the basic functions of Instagram. Groypers will often post to their profile grids in collaboration with other Groyper accounts, piggybacking on accounts with greater reach to help elevate their profiles out of obscurity and into more people’s feeds. It is common to find posts sharing Fuentes content that have both larger accounts and smaller accounts tagged to boost the smaller accounts’ exposure and reach on the platform.

Posts from Groyper accounts frequently include antisemitic, white supremacist, anti-LGBTQ+, and anti-immigrant messages. This content is sourced from Fuentes’s livestream show, America First with Nicholas J. Fuentes, or his other online appearances and collaborations. These clipping accounts generally source their clips from Fuentes’s most recent livestreams, but will also circulate old clips of him, especially if he is saying something that is charged, controversial or bigoted.

In a November 2025 collaborative post, six Groyper accounts, with follower counts ranging from several thousand to tens of thousands, posted a reel of Fuentes joking that he wants Hitler to run the United States. As of February 20, the post had received over 2.7 million views and 172,000 likes.

In a post from November 2025, another group of six Groyper accounts posted as collaborators a reel clipped from Fuentes’s livestream show in which he baselessly argues that Jews are “against Christianity” and that they have “subversive” morals because he believes they “run the porn industry.” As of February 20, the post had received at least 311,000 views and over 27,000 likes.

In another post from September 2025, a different group of four Groyper accounts posted as collaborators a reel edit of Fuentes stating on his show that he believes Israel and the “organized Jewish community” have been involved in “many of the greater conspiracies of the past 200 years.” Fuentes went on to reference the assassination of President John F. Kennedy, the mistaken Israeli strike against the U.S.S. Liberty, and the 9/11 terrorist attacks as examples of these conspiracies. The reel prominently features the number 271,000, which is a coded hate term for Holocaust denial that trivializes the widely accepted figure of 6 million Jewish deaths resulting from the Holocaust. As of February 20, the post had received at least 1 million views and over 140,000 likes.

(Screenshot/Instagram)

Antisemitic conspiracy theory Instagram reel by Groyper accounts.

Groyper accounts have adapted to the limited moderation that Instagram does employ: If they notice that the platform is taking down violative content, Groyper accounts will deactivate for a short period of time to avoid bans. Once they feel comfortable, they reactivate and continue spreading their hate on the platform for their audiences to consume.

Foreign Terrorist Organizations and Supporters on Instagram

According to Meta’s Community Standards, the company does not allow “organizations or individuals that proclaim a violent mission or are engaged in violence to have a presence on our platforms.” The company asserts that it removes any glorification or support of designated Foreign Terrorist Organizations (FTOs), Specially Designated Global Terrorists (SDGTs), and any groups that organize or advocate for violence against civilians.

Despite this official policy, many accounts that glorify or support such organizations are active on Instagram and often share violative content. In recent years, Meta maintained a ban on content praising FTOs, like the Taliban, ISIS and Al-Qaida. But today, content glorifying and even originating from FTOs, SDGTs, and other similar organizations is rampant on the platform.

COE identified at least 23 accounts that spread Islamic State and Al-Qaida propaganda. These accounts will often post an image or video that glorifies organizations that meet Meta’s terrorism definition with caption text that is unrelated to the subject matter at hand — like a synopsis of Home Alone or gardening advice — potentially in an effort to avoid detection. These pages will also cross-post with other accounts that support terrorists/terrorism and violence against civilians.

In one post shared in December 2025, footage from the Bondi Beach massacre is overlaid with music from a well-known Islamic State song that includes the lyrics, “we will march to the gates of paradise where our maidens await / we are men that love death just as you love your life / we are soldiers that fight in the day and the night.” The accompanying Arabic text on the post includes calls for the killing of Jews around the world, especially in the streets and synagogues. The post also uses the hashtags “foryou” and “fyp.”

In February 2026, one account supporting the Islamic State shared a video of Islamic State soldiers executing people in a field for refusing to accept Islam. Next to the video was anodyne text describing a clock tower in Mecca. The post, from a small account with fewer than 300 followers, was liked over 4,000 times and shared over 2,400 times in the first two weeks after it was posted to Instagram.

A separate account that month shared an Islamic State West Africa propaganda video, leveraging Instagram’s platform to propagate terrorist content.

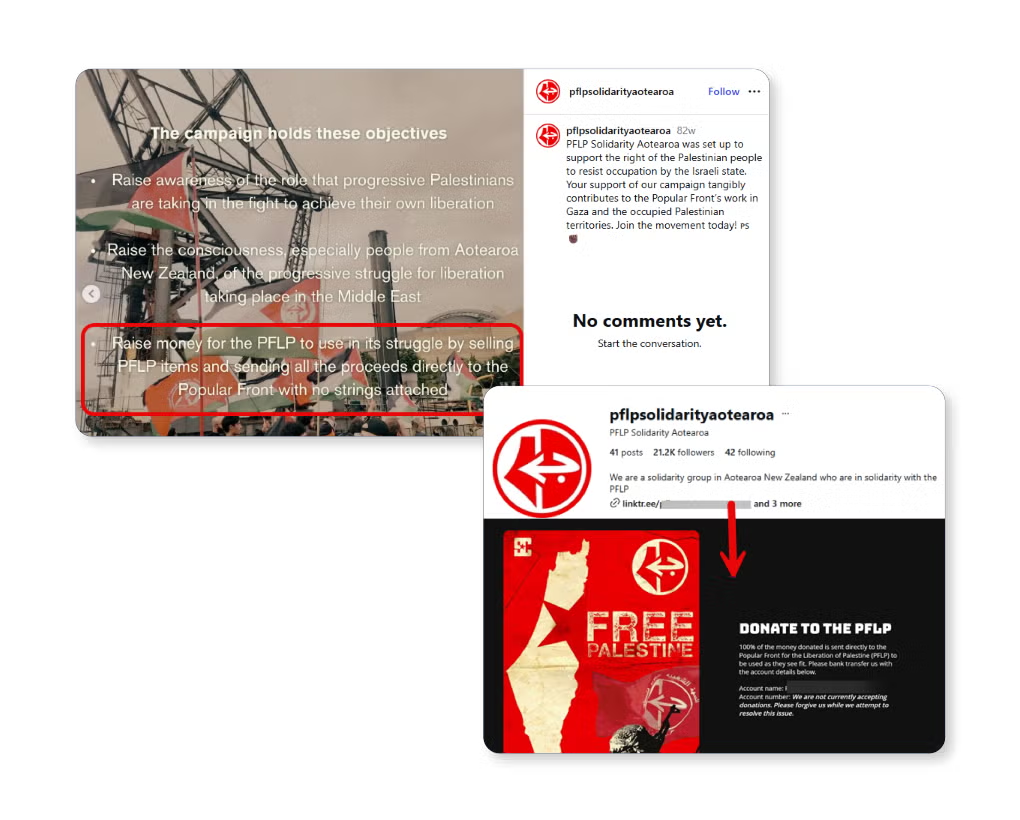

COE has identified 33 accounts that have direct or indirect connections to the Popular Front for the Liberation of Palestine (PFLP), or that have been sanctioned or dissolved by their own respective governments for inciting violence or hatred against Jews or expressing support for Hamas. Together, these accounts have more than 340,000 followers. PFLP took part in the Hamas-led October 7th terror attacks.

Examples of Instagram accounts dedicated to supporting PFLP abound, with at least one account that has fundraised for the terrorist organization. PFLP Solidarity Aotearoa, a New Zealand-based account with over 21,000 followers as of February 2026, explicitly posted that their campaign seeks to “raise money for the PFLP.” One link in the group’s Instagram bio directed users to material published by PFLP and to instructions on how to donate to the PFLP, though as of February 2026, its website states “We are not currently accepting donations. Please forgive us while we attempt to resolve this issue.” It appears the site accepted donations until sometime late in 2024. Archived versions of the site show an account number to which one could send funds in July 2024. By August 3, the account number was removed, and a revised message stated they were no longer accepting donations.

On March 30, 253 accounts and posts included in this report’s analysis were flagged directly to Meta by the ADL, including PFLP Solidarity Aotearoa’s account, in an effort to proactively engage with the platform. As of April 1, the account was no longer active on the platform.

Left image: Instagram post by @pflpsolidarityaotearoa guiding users to donate to the PFLP (Source: Instagram/Screenshot)

Top right image: Instagram bio of @pflpsolidarityaotearoa, including a LinkTree (Source: Instagram/Screenshot)

Bottom right image: Website of PFLP Solidarity Aotearoa, which was linked from the LinkTree, providing directions on how to donate to the PFLP (Source: Website/Screenshot)

COE analysts additionally identified chapters of Samidoun, a transnational anti-Zionist group sanctioned by the U.S. and Canadian governments as a "sham charity that serves as an international fundraiser for The Popular Front for The Liberation of Palestine," operating on the platform. The presence of these accounts on Instagram is particularly troubling because Meta has previously removed Samidoun accounts.

The France-based group Urgence Palestine, which the French government began to dissolve in 2025 for “inciting violent acts against people” and advocating for “a terrorist organization like Hamas,” has at least 18 national and chapter accounts on Instagram. The group often uses its Instagram account to post images of the inverted red triangle, a symbol used by Hamas in its propaganda videos to mark Israeli targets. It has also attempted to justify the Hamas-led terror attack on October 7, 2023.

Weak enforcement of Meta’s policies banning the glorification, support, and representation of terrorist organizations allows groups free rein to amplify and promote terrorist organizations and causes on the platform.

Curb Stomp MFG and Extremist Merchandising

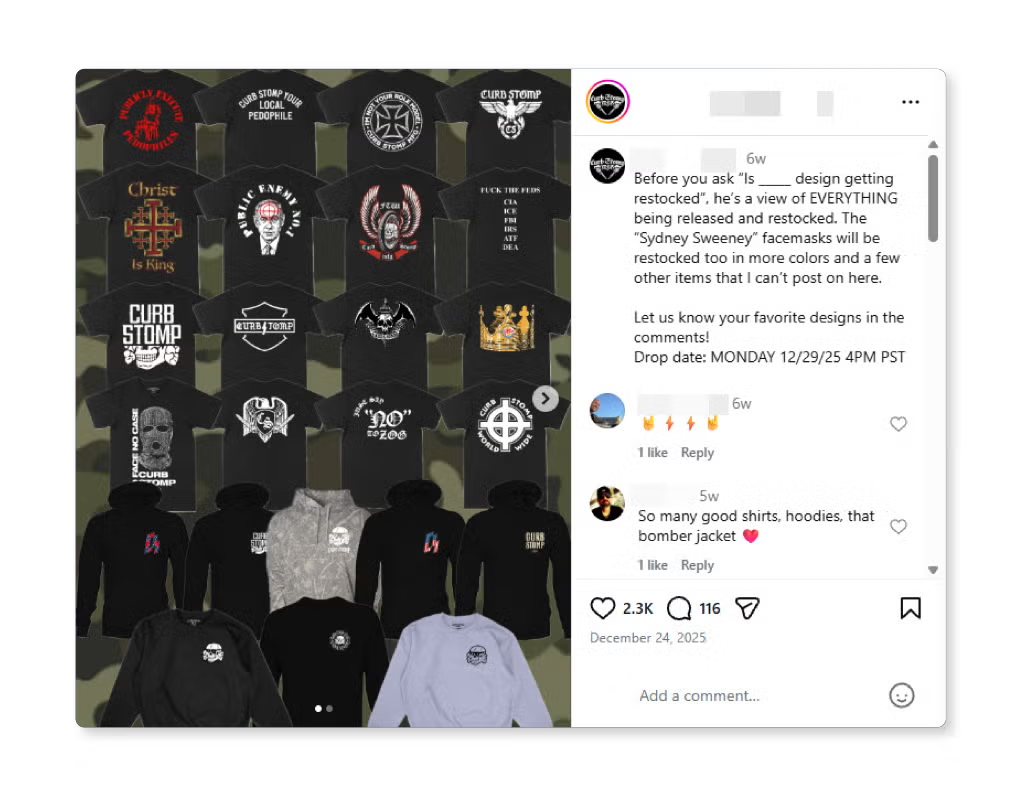

Despite hate symbols being explicitly banned, products that incorporate these symbols, including sonnenrads, Totenkopfs, and SS bolts, are routinely sold on Instagram.

Curb Stomp MFG, a Southern California-based merchandising company owned by Joshua Arbues, takes full advantage of Instagram’s weak enforcement. Arbues has over 154,000 followers on his personal account, which he often uses to promote his company. The company’s newest Instagram profile, created in March 2026, has grown to over 11,000 followers and remains active as of this report’s publication. An alternative account, created in October 2025, grew to over 20,000 followers by March 2026 before being removed from the platform. Another alternate account for Curb Stomp MFG, created in May 2025, grew to over 43,000 followers before being removed from the platform in February 2026.

Curb Stomp MFG uses its Instagram page to direct customers to its web shop, where it sells numerous items with white supremacist messaging. Its accounts have also encouraged people to sign up for its newsletter for “uncensored” merchandise it can’t show on social media. The business has repeatedly advised customers to watch its Instagram closely for monthly merchandise “drops,” claiming its products sell out quickly. The company has stated that the best way to contact it is through Instagram DMs and not via email.

Curb Stomp MFG takes its name from curb stomping, the violent act of stomping on a person’s head after forcing the victim’s face against the edge of a street curb. The name and act were made infamous in the 1998 film American History X, in which a racist skinhead curb stomps a Black man to death. On February 23, Curb Stomp MFG posted this scene from the movie to its now-removed Instagram account to promote its merchandise drop for later that month. In January, Curb Stomp MFG also posted a reel from another removed Instagram page, which had gained over 484,000 views, citing the film as explicit inspiration for the founding of the brand.

(Screenshot/Instagram)

Instagram post by Curb Stomp MFG promoting their merchandise drop.

The company sells apparel, motorcycle accessories, stickers, flags, and knives, most of which are emblazoned with Nazi symbols. For example, in December 2025, Curb Stomp MFG posted to a now-removed Instagram account a compilation image of T-shirts it is selling that feature Totenkopfs, SS lightning bolts, and Reichsadler without the swastika. One shirt also includes the image of Israeli Prime Minister Benjamin Netanyahu with a target on his head with the message “Public Enemy No.1.”

On March 3, Curb Stomp MFG posted to a now-removed Instagram page that Shopify identified its merchandise sold on the platform as “promoting hateful content, violence, gore profanity or offensive content.” Shopify actioned the store by suspending its payment processing and hiding the webstore from the platform’s Shop channel.

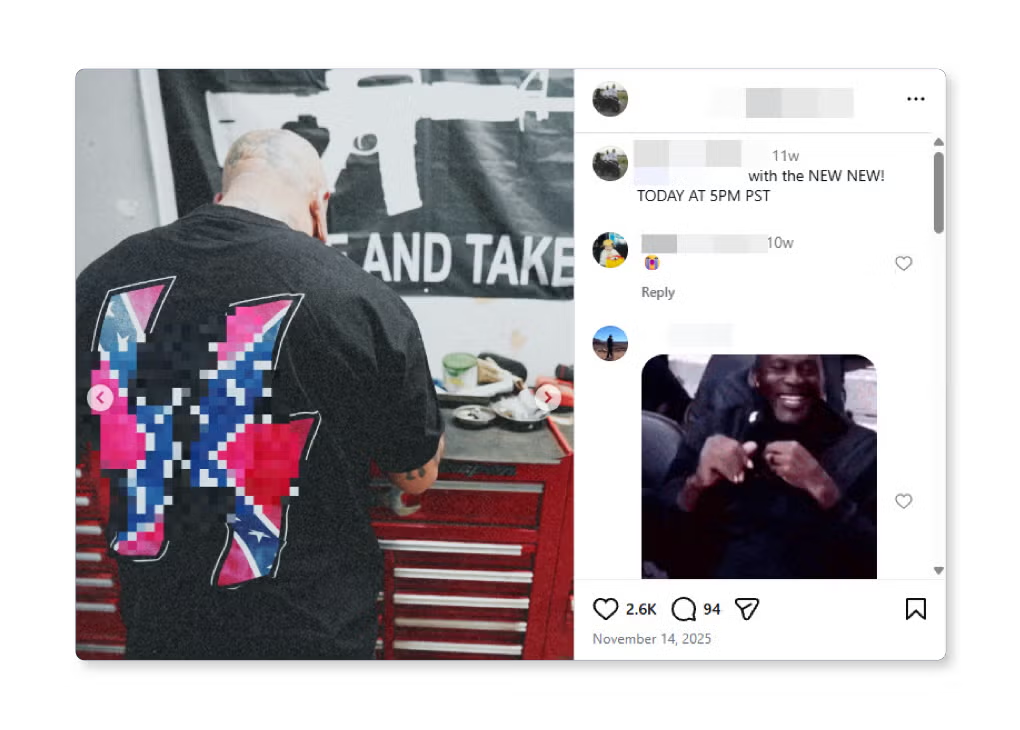

(Screenshot/Instagram)

Instagram post by Curb Stomp MFG promoting shirts for sale.

In what appears to be an effort to avoid moderation, Curb Stomp MFG will blur portions of their posts, though not enough that the viewer won’t recognize what is being obscured. For example, in November 2025, the page shared a now-removed picture of one of their T-shirts featuring SS bolts but blurred the symbol to avoid content moderation.

(Screenshot/Instagram)

Instagram post from Curb Stomp MFG’s account featuring their obscured SS bolts shirt with the Confederate flag.

Curb Stomp MFG and Arbues also openly share white supremacist and antisemitic content. The accounts regularly share reels that feature antisemitic, pro-Hitler, Islamophobic, and racist messaging. As of early March, this hateful content garnered over 3.2 million views on Instagram.

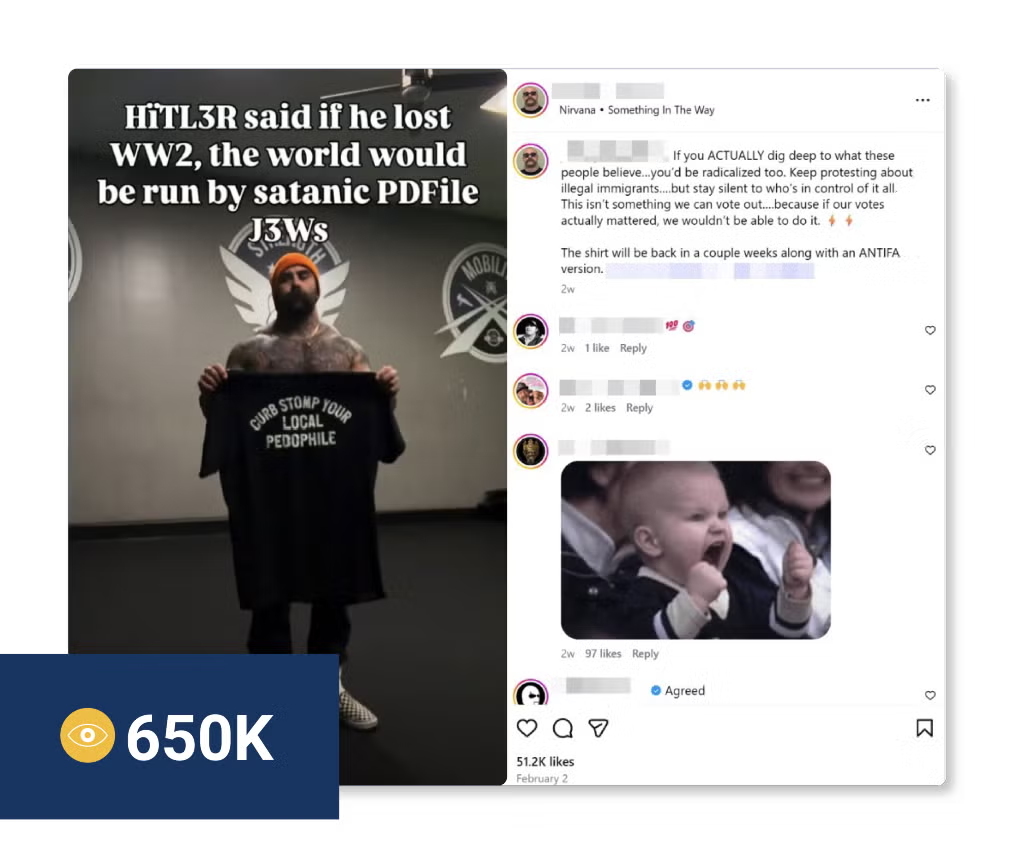

On February 2, Arbues posted to his Instagram profile promoting the sale of a shirt with the message “HïTL3R said if he lost WW2, the world would be run by satanic PDFile J3Ws.”

The post promotes antisemitic tropes to falsely make broad, malicious claims that Jews are satanic and abuse children. The post’s caption refers to Jews as “these people” and promotes the sale of Curb Stomp MFG’s shirt with the message, “CURB STOMP YOUR LOCAL PEDOPHILE.” The caption also cites researching Judaism as a point for “radicalization” and makes the antisemitic claim that Jews have too much power in society. As of March 3, the reel has gained over 650,000 views and over 51,200 likes.

(Screenshot/Instagram)

Instagram reel posted by Arbues promoting antisemitic tropes.

A now-removed Curb Stomp MFG account also published a reel of Arbues in December 2025 with the message, “When you’re spending Christmas with family and someone asks your opinion on Israel,” which then cuts to a generative AI (GAI) video of Adolf Hitler dancing. As of February 20, and before removal, the post had gained over 226,000 views and included the hashtags #adolfhitler #adolf #thejuice #extemist.

Instagram and the Failure to Moderate

Between January 14 and February 17, COE conducted a content moderation test of Instagram by anonymously reporting accounts and posts linked to the Groyper movement, FTO supporters, and extremist merchandisers. These accounts and posts were reported for violating Instagram’s policies against violence, hate, or exploitation.

After waiting two weeks for each flag to give Instagram time to respond, COE recorded the results of those reports. All told, Instagram removed 7% of the 253 accounts and posts flagged.

Of the 150 accounts that COE reported, Instagram:

- Removed 11 of these accounts, accounting for 7% of cases.

- Acknowledged the report, but permitted the account to remain on the platform in 139 cases, accounting for 93%.

- Did not respond at all to the flag in 20 cases, accounting for 13%.

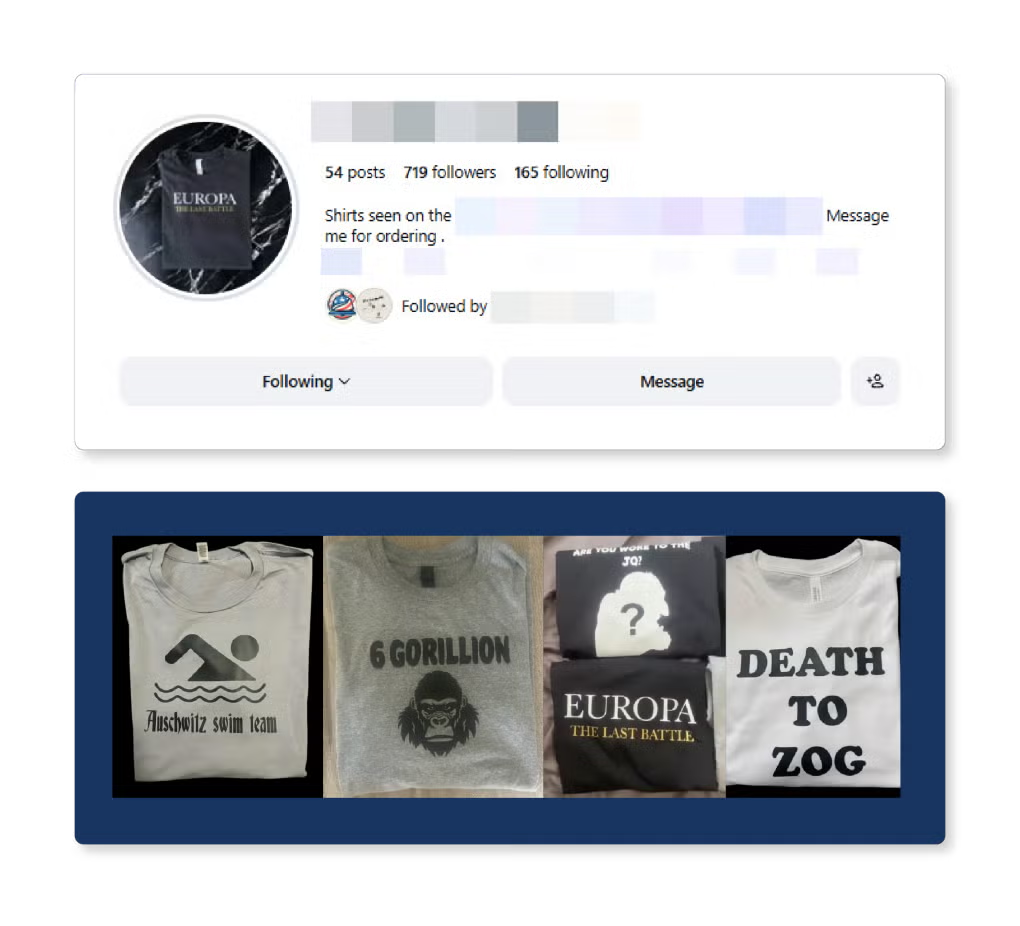

Instagram found that one extremist merchandising account, the apparel page for The Berm Pit, did not go against its Community Standards. The Berm Pit is a Pittsburgh-based far-right, antisemitic podcast that often features far-right influencers and promotes antisemitic conspiracy theories like The Great Replacement theory, Holocaust denial, tropes about Jewish control and praise for Nazi Germany. The Berm Pit’s apparel page sells T-shirts with antisemitic imagery, promotion of white supremacist films, and anti-Israel slogans. These shirts are prominently featured in the account’s posts, which ask followers to direct message the account to order.

(Screenshot/Instagram)

The Berm Pit’s Instagram apparel page features images of its T-shirts for sale.

Of the 103 posts that COE reported, Instagram:

- Removed 8 of these posts, accounting for 8%. Additionally, three posts were “hidden from teens.”

- Acknowledged the report, but permitted 95 posts to remain on the platform, accounting for 92%.

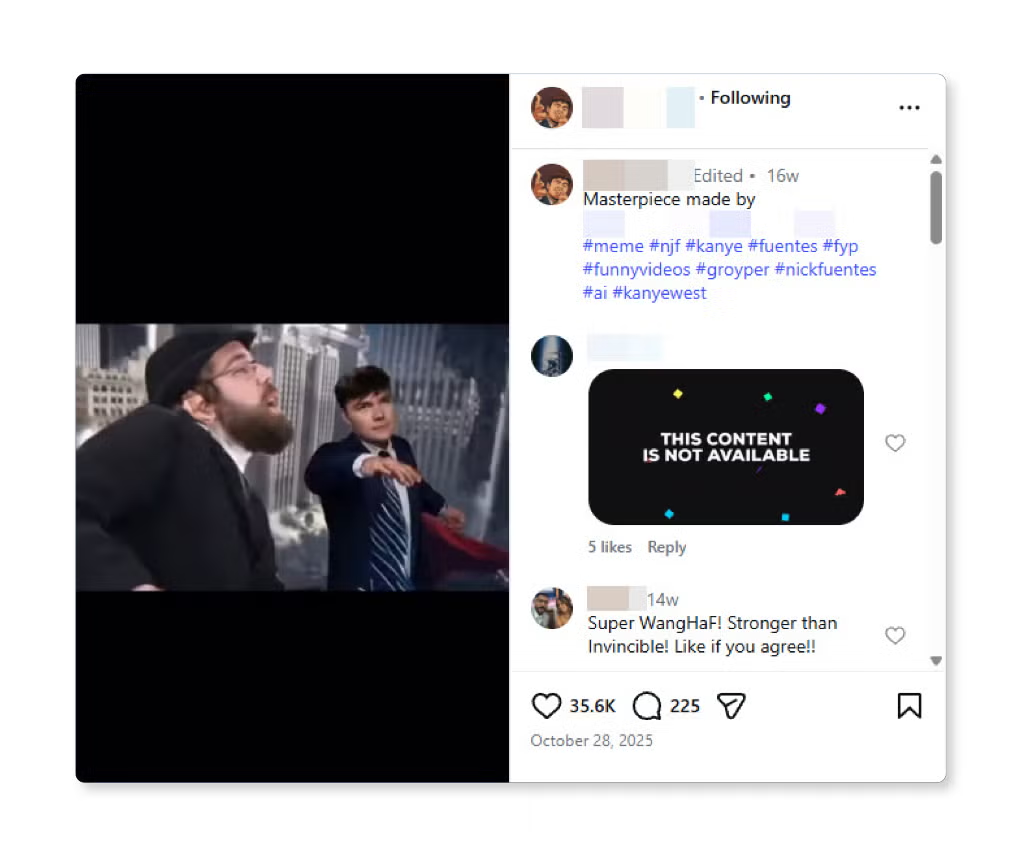
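For readers who want to check the arithmetic behind these removal rates, the figures above can be tallied with a short Python sketch. This is only an illustration built from the counts stated in this section (150 accounts with 11 removed, 103 posts with 8 removed); the `Tally` class and its `removal_rate` property are hypothetical names introduced here and are not part of COE's methodology.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tally:
    """Hypothetical container for one category of reported items."""
    reported: int
    removed: int

    @property
    def removal_rate(self) -> float:
        # Share of reported items that were removed, as a fraction of 1.
        return self.removed / self.reported


# Counts taken directly from the figures stated in this section.
accounts = Tally(reported=150, removed=11)
posts = Tally(reported=103, removed=8)

# Combined tally across both categories (253 flags in total).
combined = Tally(
    reported=accounts.reported + posts.reported,
    removed=accounts.removed + posts.removed,
)

for label, tally in (("accounts", accounts), ("posts", posts), ("combined", combined)):
    print(f"{label}: {tally.removed}/{tally.reported} removed ({tally.removal_rate:.1%})")
```

Under these counts, the account and post removal rates round to 7% and 8% respectively, and the combined rate falls between the two.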

One of the posts that Instagram found to be consistent with its Community Standards is a generative AI (GAI) reel that depicts Fuentes as a Superman-like figure physically fighting a group of Orthodox Jewish men. As of February 20, the post had received over 401,000 views and over 35,600 likes.

(Screenshot/Instagram)

Groyper GAI reel showing Fuentes fighting a group of Orthodox Jewish men.

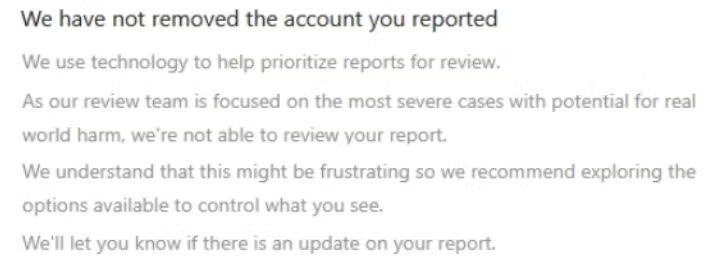

Of note, in response to 20 accounts reported during this process, Instagram responded that they did not have the bandwidth to review the report, explaining: “We use technology to help prioritize reports for review. As our review team is focused on the most severe cases with potential for real world harm, we’re not able to review your report.” As of March 5, 17 of these accounts remained active and continued to spread hateful content.

(Screenshot/Instagram)

Response from Instagram citing an inability to review a reported account.

In at least three cases, Instagram claimed that accounts had been removed, but subsequent review by COE on March 5 found that they remained active on the platform. After escalating these profiles to Meta on March 30, only one account remains active as of this publication. Separately, in one case, an account appeared to have temporarily deactivated amid the initial flagging process, and at the time of writing remains active on the platform.

Though this flagging exercise covered only a sample, the overwhelming percentage of cases in which Instagram did not remove the offending content, either explicitly or through non-response, further illustrates that the platform is failing to protect its users from hateful and extremist content.

Of particular concern are the complete lack of response to some flags and the messages stating that certain pieces of content would not be reviewed at all. This suggests that it is not a matter of a difference of opinion as to the application of the terms to the content, but rather that Instagram is abdicating its responsibility to protect users from extremist content and allowing malicious actors to use its platform as a microphone for hate.

Recommendations

The results of this report indicate that concerns about hate and extremism on Meta platforms are warranted. The absence of content moderation practices, or the failure to enforce them, only allows harmful content to proliferate on mainstream platforms like Instagram.

ADL raised alarm about these decisions last year, arguing that if Meta wanted to rely primarily on user reporting, then the process of doing so must be reformed. Yet findings from the Instagram enforcement testing conducted for this report show that many user reports are not leading to the removal of harmful content and, in some cases, are being ignored.

In light of these findings, we recommend that Meta:

- Work with ADL experts on hate and extremism to better identify violative users and content

- By connecting with experts who have deep knowledge of how extremists and promoters of hate navigate online spaces, trust and safety teams can better address violative and illegal content at the source, rather than relying solely on user reports.

- Provide researchers with adequate data access

- Having adequate access to platform data enables researchers, including academic and civil society members, to more efficiently identify patterns and trends in violative content, without having to make manual assessments. Methods could include reinstating tools like CrowdTangle, for example, or creating a special API that allows experts to research Meta platforms.

- Reinstate proactive measures against violative content

- When platforms are equipped with proactive safeguards against violative content, it’s harder for bad actors to exploit moderation gaps, which lessens both the surge of hate on the platform and the reliance on user reports. These surges may be mitigated if Meta brings back more proactive moderation efforts, like those in place before its January 2025 announcement, in which Mark Zuckerberg stated that the company would narrow the focus of its automated filtering tools to illegal and high severity violations while relying on user reports to identify lower severity violations. As Zuckerberg said at the time, by “dialing [the filters] back, we're going to dramatically reduce the amount of censorship on our platforms,” but Meta is also “going to catch less bad stuff.”

- Review all user reports

- Social media users deserve online communities in which they feel welcome and heard, and where they don’t have to share space with extremist or hateful users. If companies like Meta choose to prioritize user reporting for content moderation, then they must be able to do so at scale. Without the proper measures, they break the trust of their users by promising a service that is more performative than it is effective.

Previous research from ADL found that Meta responded to exactly zero cases of antisemitic content when reported by average users. Meta should conduct an audit of automated systems responsible for both proactive moderation and the review of user reports, as well as their guidance for any remaining human moderators.

Policymakers must also take action to ensure terrorists and foreign extremists are stopped from exploiting social media platforms, like Instagram, to sow division and drive hate. We urge lawmakers to support and pass the bipartisan Stopping Terrorists Online Presence and Holding Accountable Tech Entities (STOP HATE) Act (H.R. 5681). This legislation provides essential oversight to ensure companies enforce their own policies and take responsibility for the content they host.

This critical legislation would:

- Require social media platforms to publicly disclose their policies on how they address content generated by Foreign Terrorist Organizations (FTOs) and Specially Designated Global Terrorists (SDGTs), ensuring transparency and accountability;

- Mandate that the Intelligence Community assess the national security threat posed by FTO usage of social media platforms, providing essential oversight of how terrorist organizations exploit these digital spaces; and

- Hold tech platforms accountable for hosting terrorist and extremist content, often in violation of their own terms of service.

Congress should work to pass the STOP HATE Act without delay.