
May 13, 2021

This blog post is the first in a series of three articles that will explore the unique challenges audio content moderation poses for platforms creating audio-focused digital spaces. This first article focuses on the choices around recording audio, and includes recommendations regarding Clubhouse’s current practices around recording. The second will discuss reviewing audio. The third will be on current approaches and models of audio content moderation that have been publicly announced.

“Hitler did what he had to do for the people.”

“You can’t even prove to me that that [the Holocaust] happened.”

Over the weekend of April 17 and 18, ADL was notified of several incidents of antisemitic harassment on the social media platform Clubhouse that involved statements such as those above. Antisemitic harassment on the platform was first reported last September, and problems of safety and inclusion have plagued Clubhouse since it launched in beta in March 2020 without a means of reporting hate and harassment. The platform later added a reporting feature and has been somewhat responsive to antisemitic incidents once they are reported.

ADL’s Center for Technology and Society has been tracking abuse on Clubhouse closely. The platform’s rise has been meteoric: it grew from 600,000 users in December 2020 to over 10 million as of April 2021. But as interest in Clubhouse and other audio-focused digital social spaces grows, how prepared are tech companies for the distinct challenges of moderating audio content to fight hate and harassment?

Each type of digital space poses its own content moderation challenges. Still, many of the problems of audio content moderation are the same whether the space is an online game, part of an existing service like Twitter or Telegram, or part of a new platform like Clubhouse. When deciding how to moderate audio content, each tech company must first decide whether to record that content in some form. Recording, in this context, means that the social platform captures the audio in the social space and stores it for some period of time. There are many reasons not to record audio content. These include concerns about users’ right to privacy, the data storage that audio content requires, the impact of reviewing audio on human moderators, and the resources needed to tackle the challenge. Then again, choosing not to record audio has implications for moderation against hate, harassment, and extremism. Without a record of the content in question, moderators must rely on witnesses’ accounts or take direct action themselves to evaluate an incident.

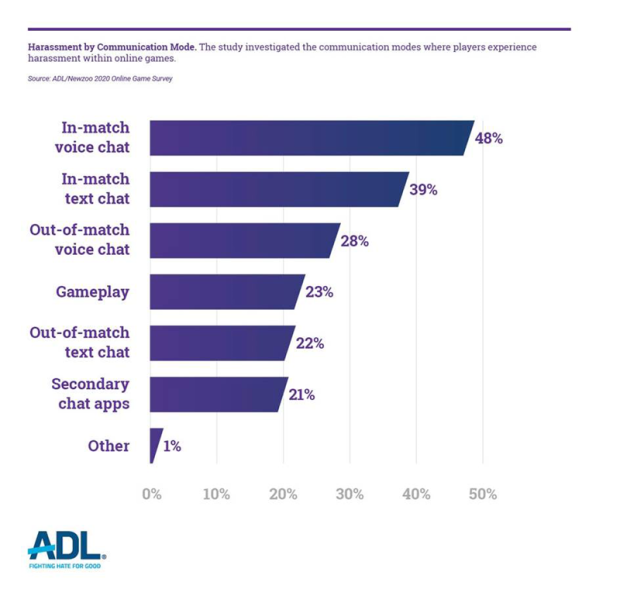

Although Clubhouse is in the spotlight at present, the problems highlighted are not new, and a historical perspective is useful to understand the consequences of these choices. The social platform Discord has had live voice chat since its first rounds of funding in 2016. Voice chat has been a part of communication and content moderation concerns in online multiplayer games since at least 2012, when games scholar Kishonna Gray wrote about women of color’s experiences of racism in voice chats on Xbox Live. The problems posed were underscored by the results of ADL’s 2020 survey of hate, harassment and positive social experiences in online games, which found that 48 percent of American adult players experience harassment in voice chat while playing games online.

After Clubhouse launched in March 2020, other tech companies announced their plans for audio-focused spaces. In December 2020, the private chat platform Telegram added a group voice chat feature, and Twitter rolled out a beta version of its Spaces voice product. Spotify announced the purchase of Betty Labs, the company behind the audio-only app Locker Room, while Slack’s CEO spoke of plans to build Clubhouse-like audio features into Slack. Facebook recently announced that its audio-focused product, Live Audio Rooms, would be rolled out to users by this summer.

To Record or Not to Record

In a 2019 study on community moderation in audio-focused digital spaces, researcher Aaron Jiang interviewed community moderators on the platform Discord who tried to investigate audio content abuse in their channels despite Discord’s practice of not recording audio content. The tactics moderators used included entering voice channels as soon as a report confirmed that rules were being broken and interviewing witnesses of abuse to get several versions of events. Jiang found:

“The affordances of voice-based online communities change what it means to moderate content and interactions. Not only are there new ways to break rules that moderators of text-based communities find unfamiliar, such as disruptive noise and voice raiding [joining an audio space en masse to engage in abuse], but acquiring evidence of rule-breaking behaviors is also more difficult due to the ephemerality of real-time voice. While moderators have developed new moderation strategies, these strategies are limited and often based on hearsay and first impressions, resulting in problems ranging from unsuccessful moderation to false accusations.”

Without recorded audio, interviewing witnesses and jumping into an audio space at the first sign of trouble are often the only tools moderators have. This is a very different model from the one typical of text-based platforms. There, a written record of the content makes it possible to combine comprehensive, proactive monitoring with review of content that users flag.

On the other hand, platforms that choose to record audio content have received public pushback. Last October, PlayStation announced that its soon-to-be-released PlayStation 5 video game console would allow users to record audio from their online games and submit it to PlayStation for moderator review. The announcement drew mixed reactions. Some users expressed trepidation over PlayStation staff listening to everything they say. They voiced these concerns despite reassurances that PlayStation would not actively moderate voice chat and would instead provide tools for players to record harassment they experience and report that audio. ADL’s 2019 survey of hate, harassment and positive social experiences in online games found that only a slight majority of adult gamers in the U.S. (55%) supported games with technology that actively monitored voice chat.

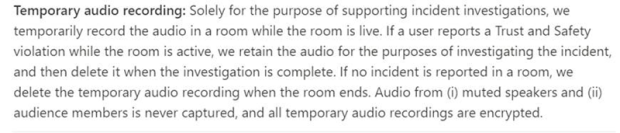

Clubhouse’s current guidelines state that it records audio in a room while the room is “live” (when people are actively talking). If a user reports an incident during this time, Clubhouse stores the audio and investigates the incident. If nothing is reported while the room is live, Clubhouse deletes the audio when the session ends.

Clubhouse policy re: recording audio content

Clubhouse’s approach of temporarily holding audio content offers different ways to moderate content than Discord’s non-recording model. From ADL’s perspective, however, the Clubhouse approach has significant problems. It requires the target, or those witnessing the hateful or harassing incident, to report the incident in the moment, when they may be in distress or still processing its impact. Clubhouse does allow users to report an incident after the live audio has ended. But because the audio evidence will no longer exist, any investigation runs into the same problem the Discord community moderators described in their interviews.

The policy currently in place for the Twitter Spaces audio product is a more equitable model. Twitter does not retain recordings indefinitely, but it holds each recording for 30 days for investigative purposes. If a complaint is filed during that period, Twitter states that it will extend retention to 90 days to allow for an appeal. This model gives individuals from the vulnerable and marginalized communities often targeted by hate and harassment online ample time to report incidents to the platform, without retaining the audio in perpetuity.

Every tech company building audio-focused spaces will have to weigh whether and how to record audio content as it decides the best path forward. The reasoning behind a company’s recording decisions must center the experiences of vulnerable and marginalized communities. Failing to do so may turn audio-focused social media platforms into hotbeds of hate and harassment, as is too often the case with online games.

Part two of this series, “Static vs. Livestreaming Audio Content Moderation,” will explore what choices a platform must make around content moderation if it chooses to record audio.