Generative Artificial Intelligence (GAI) has dominated news headlines for its potential to transform how we work, write, and play. For example, OpenAI’s ChatGPT allows people to ask questions, summarize a book, or write a new song in the style of their favorite musical artist. AI-enhanced search tools return summaries of online content rather than a list of links to different webpages. Other tools create realistic photos or pieces of artwork from a description. Some tools create audio of someone saying words they never spoke. Even in this nascent stage, users combine GAI tools to create new images, video, and audio. These outputs, called “deepfakes,” can be produced with very little skill or time. Though imperfect, they are often realistic enough that, without close attention, viewers cannot tell that they are AI-created and may be deceived. Like any new technology, GAI shows both great promise and potential dangers.

ADL Center for Technology and Society (CTS) has been monitoring the emergence of these GAI tools. Our analysis and questions for lawmakers and technologists urge them to consider the risks GAI poses in magnifying online hate and harassment and further destabilizing an already fragile information ecosystem.

To learn more about the public’s view of GAI, we conducted a survey of over 1,000 Americans that asked about their views of its potential benefits, possible harms, and options for interventions. These results bolster the need for the public to ask tough questions now to ensure that GAI is developed with user safety at its core.

Methodology

Using Qualtrics, a nationally representative survey platform, we polled 1,007 adults living in the United States between May 1 and May 5, 2023. Participants completed a 34-item survey after viewing a short prompt explaining generative AI tools and examples of their uses. The average survey completion time was approximately seven minutes.

After providing basic demographic information (10 questions), participants indicated their familiarity (“Not familiar at all” to “Very familiar”) with generative AI before taking the survey. Participants then selected from a list which potential uses of generative AI they were excited or hopeful about (e.g., “improving healthcare services”). Participants used a six-point scale ranging from “Strongly disagree” to “Strongly agree” to indicate their responses to eight statements about AI’s potential misuses (e.g., “[AI] tools will make extremism, hate, and/or antisemitism worse in America.”). Participants were also asked about various interventions and options for regulation. Respondents’ familiarity with GAI did not significantly affect their level of concern or desired interventions. Below are some of the main takeaways from the survey.

Key Results

While some people are hopeful about some of the potential uses of generative AI tools, the survey found overwhelming concern among Americans that these tools could make hate and harassment worse in society.

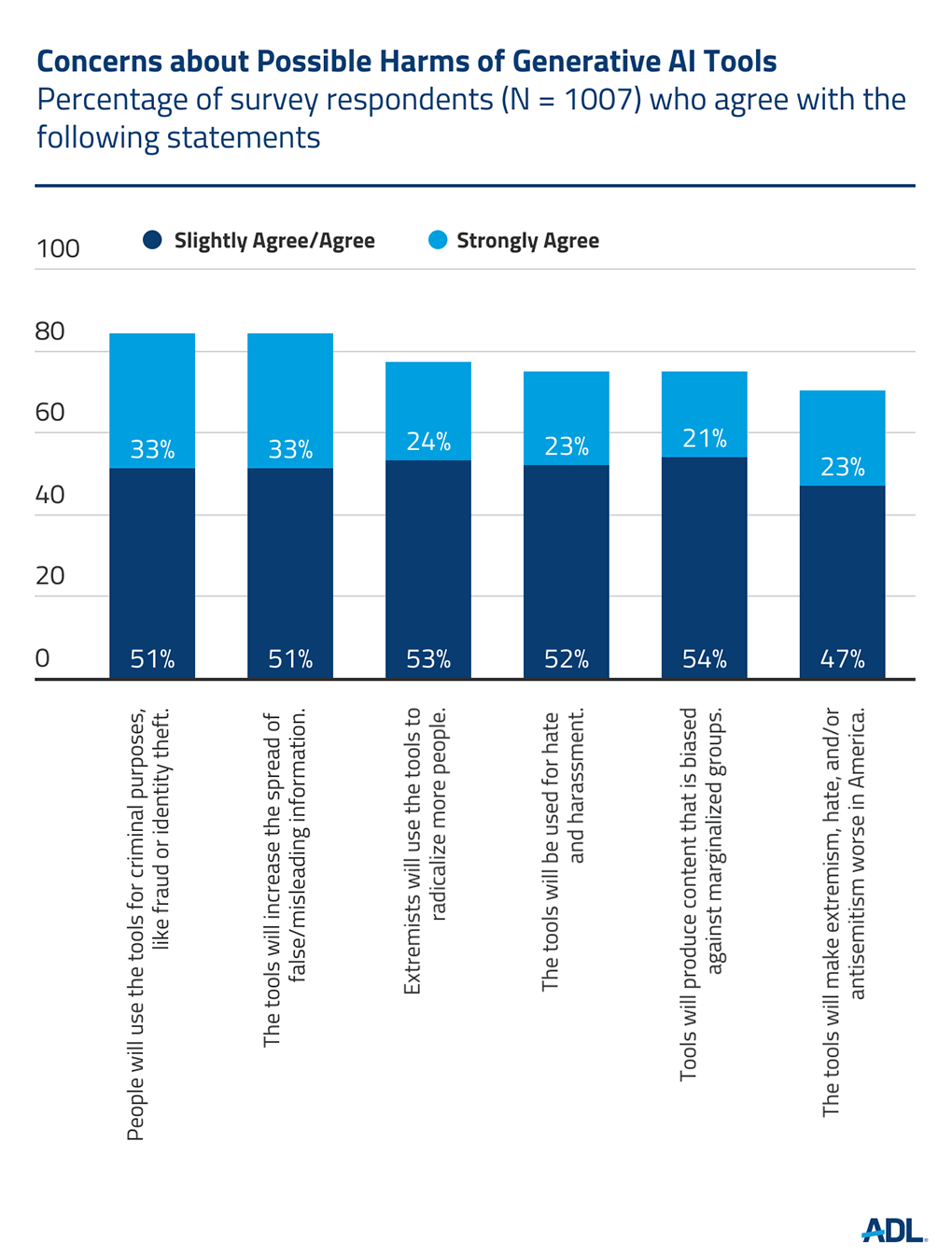

Substantial majorities of Americans are concerned that people will use the tools for criminal activity (84%), spreading false or misleading information (84%), radicalizing people to extremism (77%), and inciting hate and harassment (75%). Seventy-four percent of people think the tools will produce biased content, and 70% think the tools will make extremism, hate and/or antisemitism worse in America.

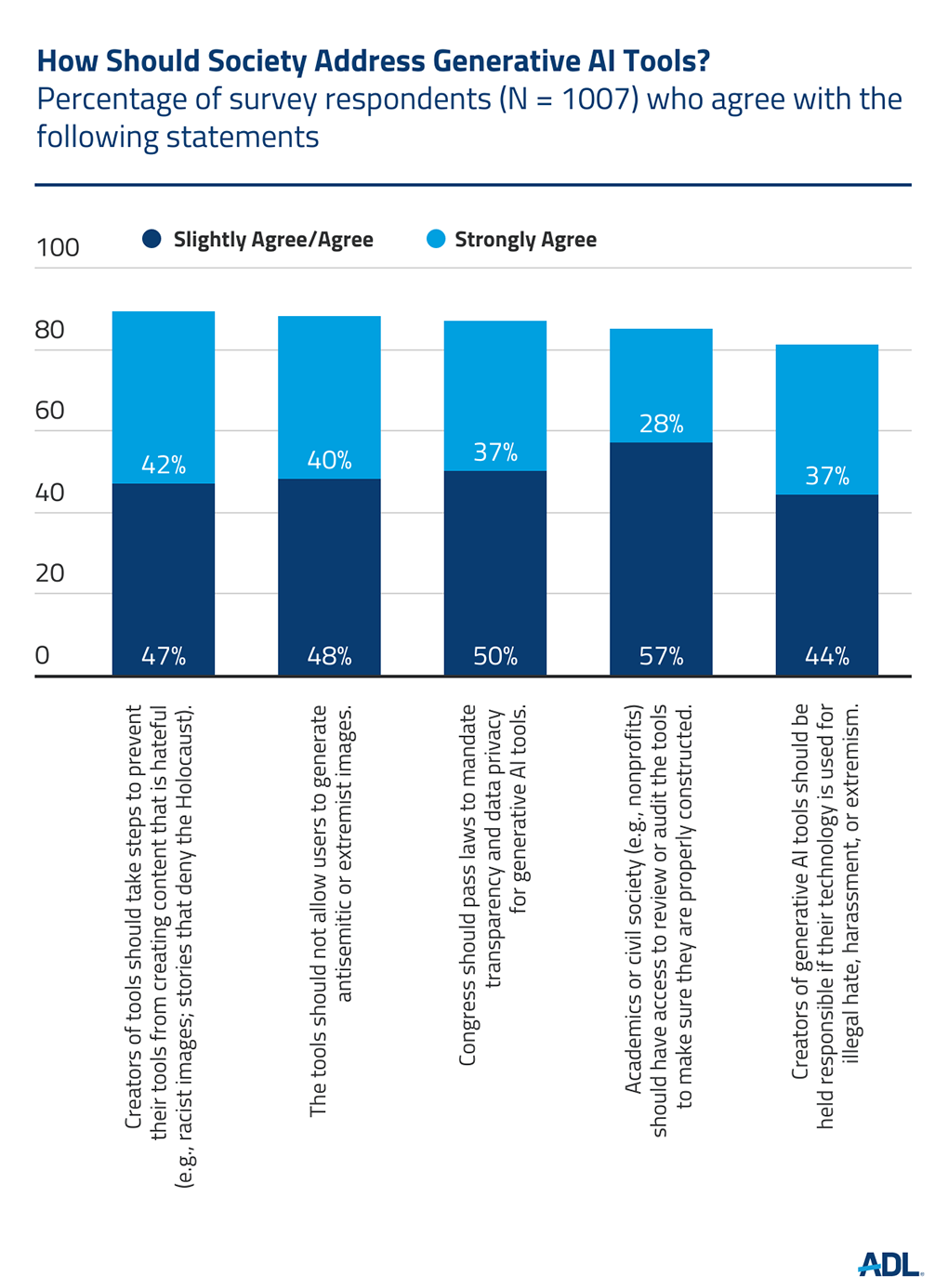

Americans overwhelmingly supported interventions to mitigate the risks posed by generative AI. Regarding what companies that create GAI tools should do, 89% of respondents believe that companies should take steps to prevent their tools from creating hateful content and should not allow users to generate antisemitic or extremist images. In terms of government or legal action, 87% of respondents supported congressional efforts to mandate transparency and privacy. Additionally, 81% of Americans believe that creators of GAI tools should be held responsible if their tools are used for illegal hate, harassment or extremism. Finally, 85% supported civil society having the ability to audit generative AI tools.

Conclusion

Americans are concerned that generative AI tools may exacerbate already high levels of hate, harassment and extremism. As a result, while conversation can and should continue about the possible benefits of GAI, all stakeholders—government, tech companies, social media users, and civil society organizations—should place these risks front and center and ask tough questions to ensure these new capabilities benefit society.