Photo by Jessica Ruscello on Unsplash

Artificial intelligence is rapidly reshaping how people consume and trust information—including what books they read. Amazon, the world’s largest bookseller, uses Large Language Models (LLMs) to generate short, snappy summaries of customer reviews. While this may be useful when applied to bedsheets or kitchen appliances, applying AI to book reviews—without human oversight—is proving to be deeply problematic.

We found that AI-generated reviews are promoting books that contain Holocaust denial, antisemitic conspiracy theories, and other dangerous content. In doing so, Amazon’s LLMs don’t just reflect bias; they amplify it. This isn't just a content moderation failure—it’s a systemic risk. Glowing AI summaries can mislead users into trusting and consuming hateful or false material, giving an algorithmic stamp of approval to content that was once confined to the fringes.

The risk goes beyond immediate exposure. LLMs learn from the data they are trained on. If biased AI-generated reviews and toxic book content are fed back into future training sets, the antisemitism we see now could become more deeply embedded and widespread in tomorrow’s AI systems. Without timely intervention, this cycle will continue—spreading hate, distorting history, and undermining trust in digital platforms.

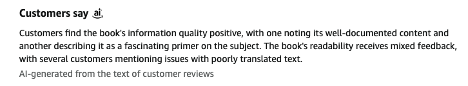

Amazon promoted one book, for example, by stating that "Customers find the book's information quality positive, with one noting its well-documented content and another describing it as a fascinating primer on the subject." Such language may sway potential customers.

(Screenshot of an AI-generated book review from Amazon.com: 04.01.2025)

This AI-generated review, however, recommends a notorious 1928 antisemitic screed, The Secret Powers Behind the Revolution.

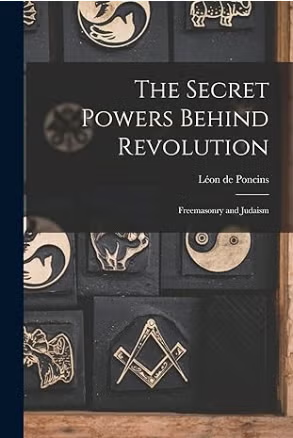

(Screenshot from Amazon.com: 04.16.2025)

The book was the work of Viscount Léon de Poncins, a French aristocrat and Traditionalist Catholic who actively promoted antisemitic and anti-Masonic conspiracy theories. These theories alleged that a secret coalition of Jews and Freemasons had, through wars, revolutions, and money manipulation, come to covertly control all of Europe by the end of the 19th century.

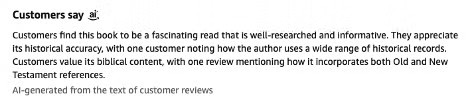

Similarly, The Controversy of Zion, by notoriously antisemitic British novelist Douglas Reed, is a book that customers apparently find "very informative, providing great insights into historical events, with one customer noting it is written with the vocabulary of an academic masterpiece."

The publishers promote the book with this blurb: "If you want to know who is really running the governments of the world, who is actually benefiting from all the wars and why, then you have to read Controversy of Zion." This is an antisemitic conspiracy theory on par with the infamous Protocols of the Elders of Zion.

Amazon also recommends Hebrews to Negroes: Wake Up Black America, by Ronald Dalton Jr., a book it tells us is "praised for its historical content, readability, and value for money."

(Screenshot of AI-generated book review from Amazon.com: 04.01.2025)

What Amazon doesn’t share with visitors to its website is that this book features extensive antisemitism, including claims of a global Jewish conspiracy to oppress and defraud Black people, and the claim that Jews falsified the history of the Holocaust to “conceal their nature and protect their status and power.”

These are just a small selection of the many enthusiastic and highly inappropriate AI-generated reviews that Amazon displays for notoriously antisemitic books, many of them authored by well-known Holocaust deniers, white supremacists, racists, and conspiracy theorists.

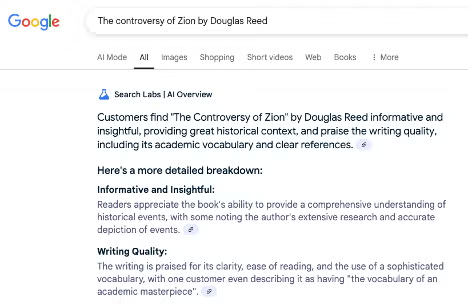

LLMs keep proving that AI can be unreliable and damaging on multiple levels. First, there is the obvious danger of Amazon spreading incendiary hatred and lies by promoting hundreds of virulently antisemitic works (yes, even "Mein Kampf," Hitler's vile manifesto) to a massive global online customer base. Second, harmful Amazon content is also being surfaced in Google Search (as in the case of The Controversy of Zion below), potentially polluting data used to train future LLMs.

(Screenshot from Google Search, 04.03.2025)

There is widespread concern that LLMs may become socially harmful as their rapid growth continues. More than a third of those surveyed for a new report from Elon University's Imagining the Digital Future Center say they have felt cheated, frustrated, or confused by these tools. A recent report from the ADL Center for Technology and Society (CTS), Generating Hate: Anti-Jewish and Anti-Israel Bias in Leading Large Language Models, shows that four leading LLMs, particularly Meta's Llama, display bias against Jews and Israel. All four models gave concerning answers to questions about anti-Jewish and anti-Israel bias, underscoring the need for improved safeguards and mitigation strategies. It is also disturbing that antisemites are using AI to create antisemitic propaganda with greater speed and ease.

To ensure that AI technologies do not amplify antisemitism, we must address the danger of LLM weaponization. These incidents underscore that companies using AI are responsible for implementing the guardrails (including human oversight) and ethical guidelines necessary for its safe use.