by Dr. Kat Schrier, Professor and Director of Games & Emerging Media at Marist University

Executive Summary

This report shows there was hate and harassment in about half of the online multiplayer game sessions that ADL asked a group of participants to play using a range of religious, ethnic, and national identities.

Previous research by ADL and others has repeatedly suggested that online games are spaces where hate and harassment are rampant; we set out to examine what happens when gamers express pride in different religious, ethnic, and national identities while playing online competitive multiplayer games. Are they targeted with hate and harassment? If so, what is the nature of this harassment? And is there any prosocial behavior in these games?

Yet despite a dramatic increase in antisemitism and a rise in hate crimes against other religious/ethnic/national groups in the United States in the past year, the participants in this study were surprised there was not even more hate and harassment in the games they played.

No member of a marginalized group should have to hide their identity in online or offline communities for fear of being targeted with hate and harassment. Hate and harassment in games are not inevitable and should never be normalized.

This study suggests that in the quest to significantly reduce hate-based harassment while enhancing prosocial play, the design of games and game communities matters.

Key Findings

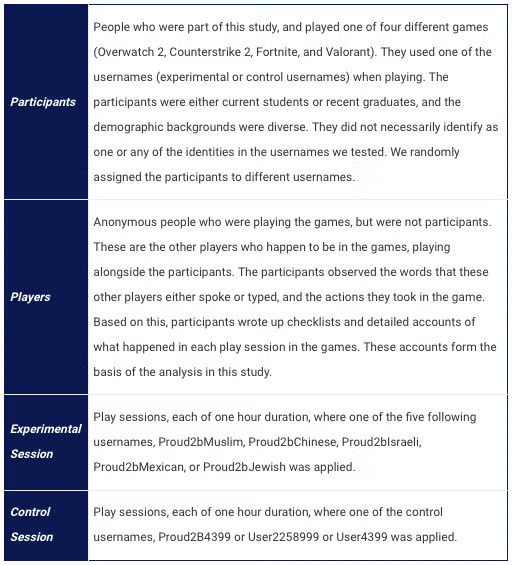

We asked 15 participants (university students, recent graduates, and young adults) to play four leading online games (Valorant, Counterstrike 2, Overwatch 2, and Fortnite) in one-hour increments with different identities (Jewish and Muslim religious and ethnic identities, as well as national identities such as Chinese, Mexican, and Israeli).

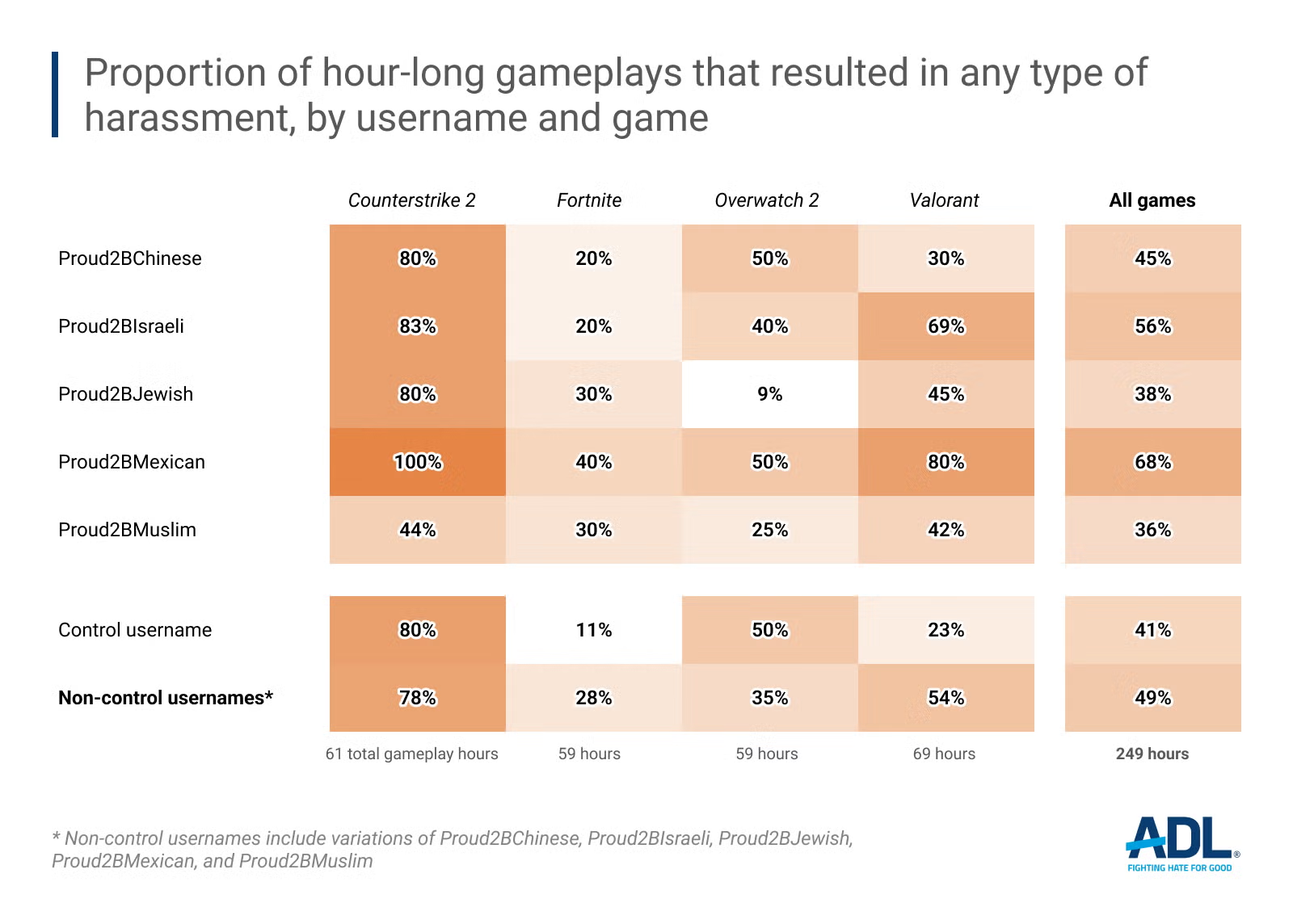

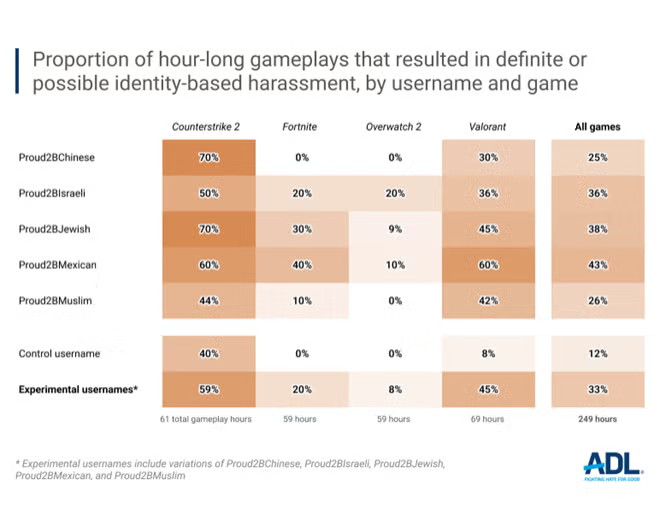

Our research across all four games shows that of the experimental game sessions played:

Almost half included some form of harassment, such as slurs, trash-talking, or disrupted play.1

One-third included identity-based harassment, such as “gas the Jews” or calling people the “n-word.”

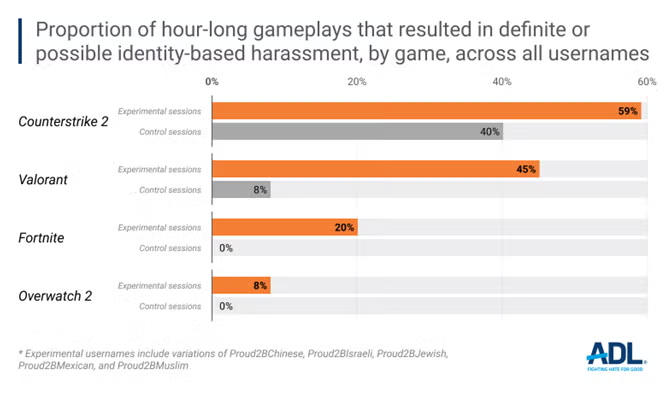

Valorant and Counterstrike 2 resulted in some type of harassment in about two-thirds of the game sessions; half had identity-based harassment.

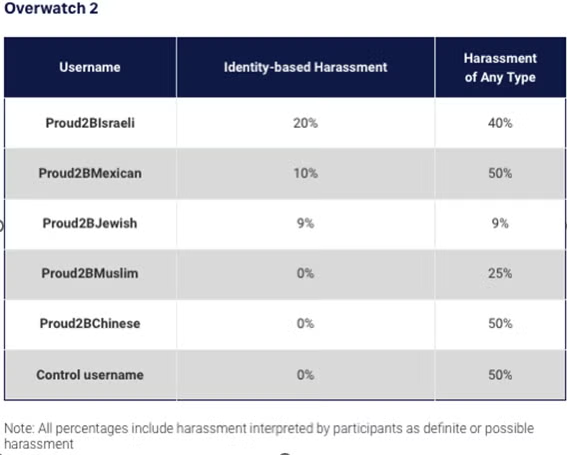

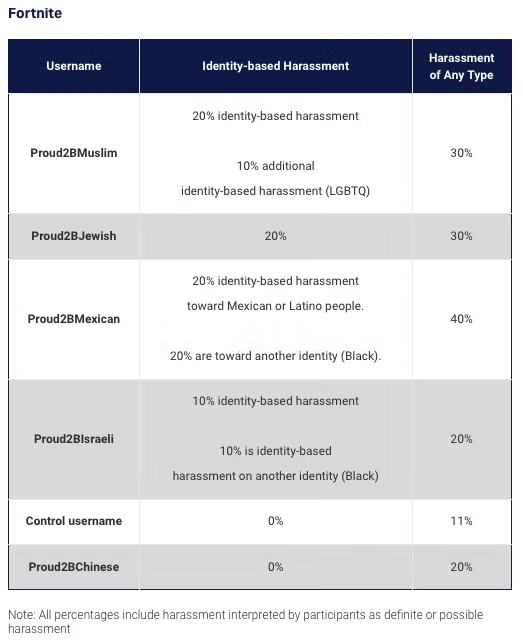

Overwatch 2 and Fortnite, in contrast, showed the least amount of identity-based harassment (8% for Overwatch 2 and 20% for Fortnite).

While there was some harassment in control play sessions, this was not due to the control username.

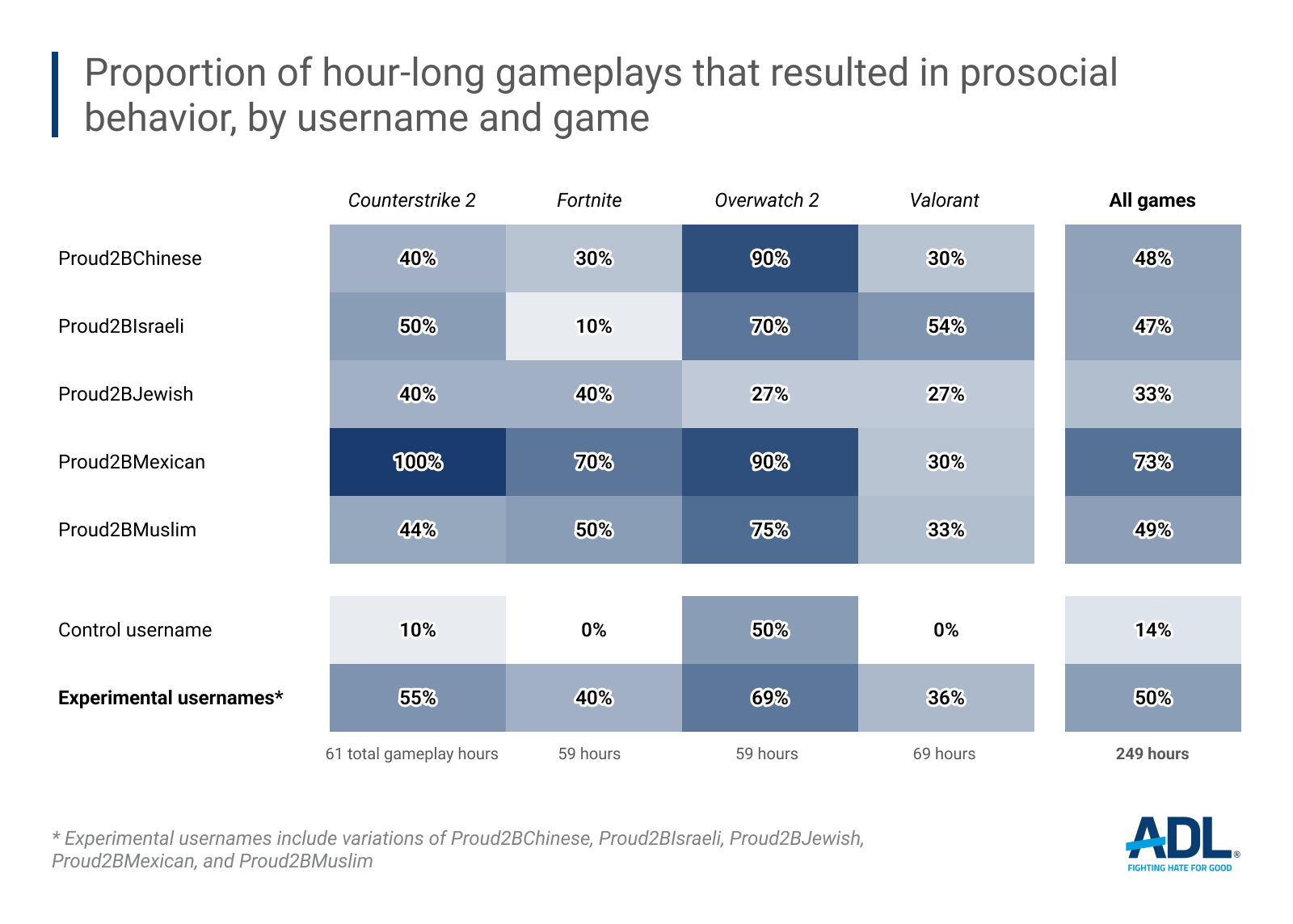

Prosocial behavior and positive play were also identified in these games: around half of all experimental play sessions resulted in positive play.

Conclusions

As increasing numbers of people engage in online gaming spaces, we need to find ways to minimize disruptive behavior, hate, and harassment.

Games should never accept disruptive or hateful behavior. Most players do not want to play in these kinds of environments, and harassment can cause many players to stop playing online games and become completely marginalized from gaming communities. Players may also respond by disrupting gameplay for others. This further spreads the notion that “games are toxic,” thereby continuing the cycle of disruptive behavior.2

The impact of this type of negative behavior extends beyond the game itself. Not only may the players themselves be directly affected, but also those watching via other platforms, such as Twitch or Discord.

Game design and community matter. This study’s investigation of four different games, with varying proportions of harassment and prosocial behavior, makes this evident.

Games can also facilitate prosocial outcomes and wellbeing. We should continue to advocate for games and gaming communities to be more compassionate rather than cruel.3

The Problem of Hate in Online Games

Online games are communities where people exchange ideas, socialize, and share opinions on current events.4 Six out of ten people (ages 5-90) in the U.S. play video games, according to the Entertainment Software Association,5 and there are over 3 billion players around the world.6 In games, people express their identities, engage in civics, and comment on a whole range of issues and events - much as they do on social media platforms such as Facebook, Instagram, X, and TikTok. Online games are also communities where people learn about themselves and shape their own opinions and behaviors, as well as ideas about others.7

ADL has been investigating hate and harassment—as well as prosocial behavior—in online multiplayer games since 2019. In 2023, ADL found that 76% of adult participants (aged 18-45) in online multiplayer games reported experiencing any harassment in online games. Types of harassment include name-calling, trolling and other disrupted play, and being excluded from a game. The same study found that 75% of teens (ages 10-17) had experienced some form of harassment in online multiplayer games.8

Other studies have also investigated toxicity, harassment, and dark participation in games or gaming-adjacent spaces (e.g., Twitch). In their 2024 study of 432 online game participants, Rachel Kowert and colleagues found that 82.3% reported experiencing toxicity and harassment first-hand, and 88.1% said they witnessed it happen to someone else. In addition, 31% of the respondents shared that they took part in harassing others.9

Games have also been sites of prosocial and positive behavior. A 2021 ADL report found that 99% of online multiplayer game players reported experiencing positive interactions in online games, such as mentorship, friendship, and discovering new interests.10

Most Americans see play in a positive light, notes the Entertainment Software Association (ESA). They view it as a way to experience joy and relieve stress, get mental stimulation, build community and connection, and help foster skills such as conflict resolution, teamwork, and problem-solving.11 Researchers, including former ADL Belfer Fellows Kat Schrier and Constance Steinkuehler, have written extensively about the use of games for learning, empathy, care, and compassion.12

The Research, introduced

A team of researchers tested identity-based usernames in four games: Overwatch 2, Counterstrike 2, Fortnite, and Valorant. Each session was approximately one hour, and participants played each game for a total of 59-69 hours (due to variations in gameplay).

The following are terms we will be using throughout this report:

Almost half (49%) of all experimental game sessions resulted in varying degrees of harassment when played with the Proud2bMuslim, Proud2bChinese, Proud2bIsraeli, Proud2bMexican, or Proud2bJewish usernames (not including control session usernames).

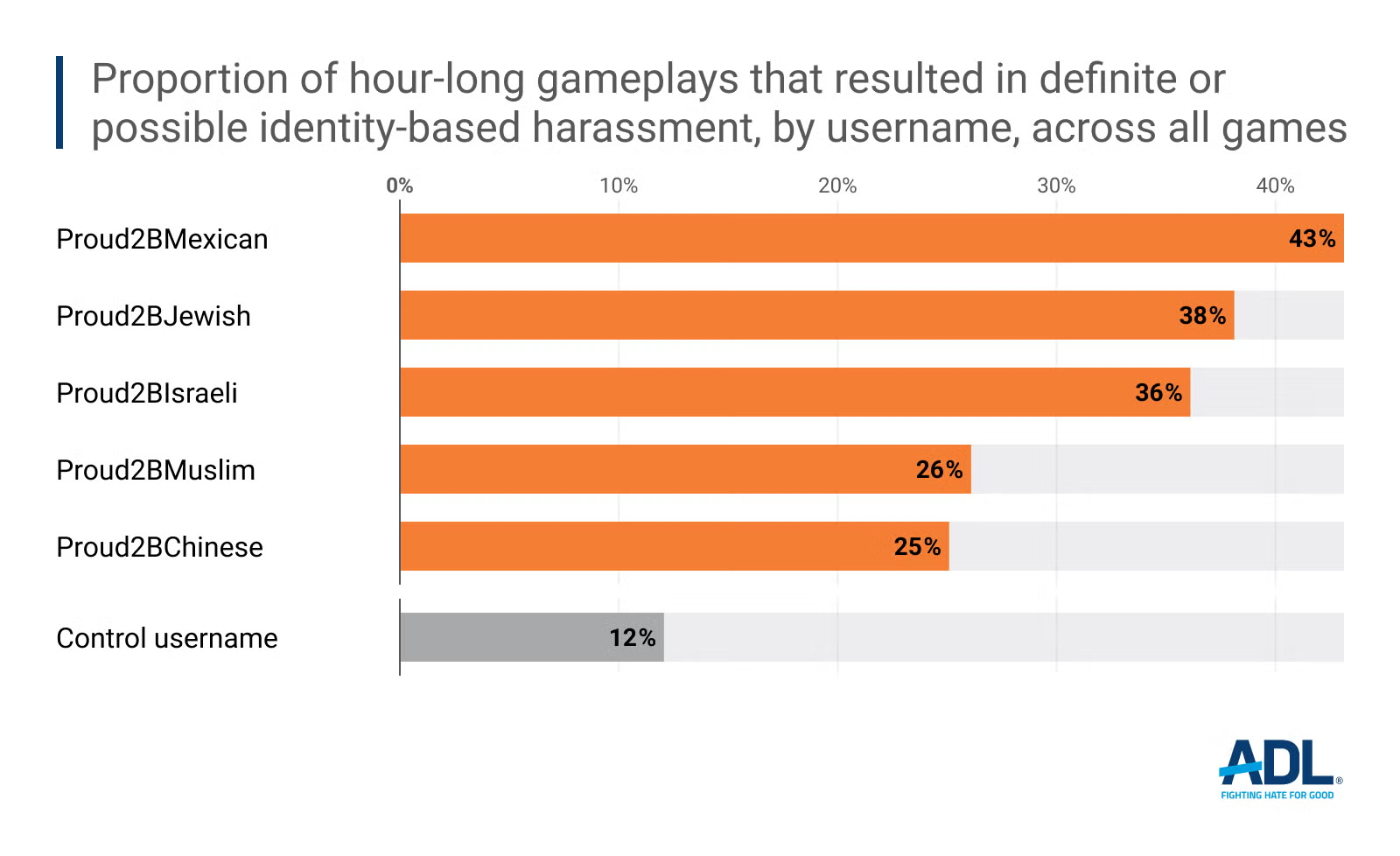

33% of experimental sessions included harassment that was definitely or possibly identity-based (not including the control sessions).

22% of all sessions across all games definitely resulted in harassment based on identity.

Harassment, by game

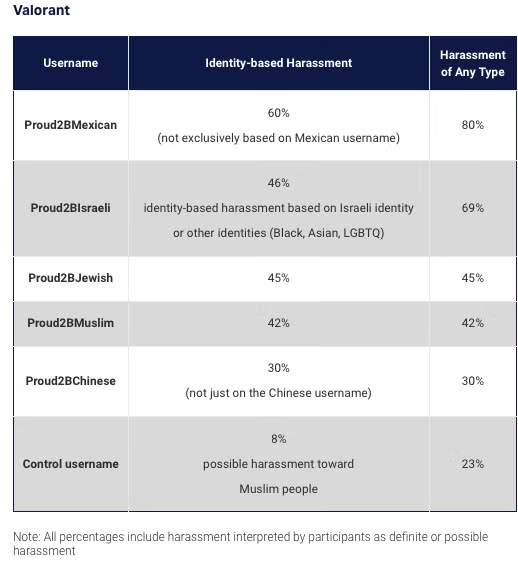

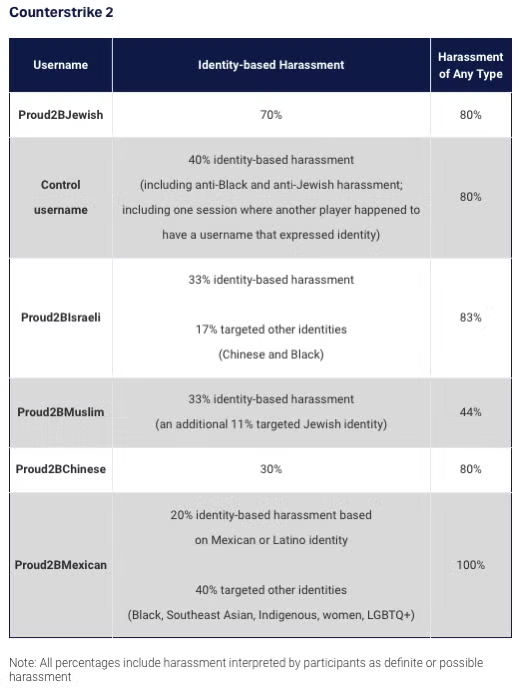

In the experimental play sessions, Valorant and Counterstrike 2 had more identity-based harassment (and general harassment) than Fortnite and Overwatch 2. The identity-based harassment was often related to the usernames (e.g., Proud2BJewish, Proud2BMexican). Sometimes it related to other identities (e.g., women, LGBTQ+, Black people). But none of the harassment in the control play sessions related to the control username.

In Valorant and Counterstrike 2 combined, over half of the play sessions included some form of identity-based harassment (45% in Valorant and 59% in Counterstrike 2). And in 65% of the sessions across these two games, players witnessed or experienced a broader range of harassment (e.g., name-calling, disrupted play).

In 5% of the sessions across all games, players were exposed to racial and identity-based bias not related to the usernames. This included racial slurs. For instance, a player said, “‘These Ni***rs suck’ and when asked who sucks by another player standing up for those not speaking, he repeated it and spelled it out.”

Of the control username gameplay experiences in Overwatch 2, Fortnite, or Valorant, in comparison, very few resulted in identity-based harassment. In Counterstrike 2, 30% of the control play sessions resulted in identity-based harassment and 80% included general harassment and disrupted play. This was mainly related to a player broadcasting religious sermons. One session, however, also included general identity-based harassment toward the LGBTQ+ community and people of color. One of the control play sessions in Counterstrike 2 may have included anti-Jewish harassment, as one of the players kept stating that the fan on their computer was shaped like a swastika. None of the control sessions included harassment related specifically to the control usernames.

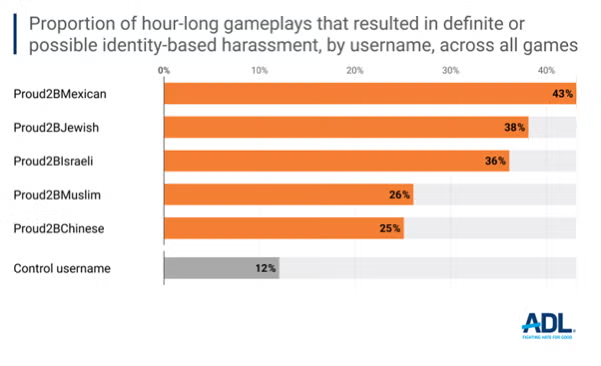

Harassment, by username

Experimental sessions with the usernames Proud2BMexican and Proud2BJewish had the highest overall identity-based harassment. This included harassment and bias based on Jewish, Mexican, and Latino identities, as well as Black, Indigenous, women, and LGBTQ+ identities. In some sessions, people were not just harassed based on their specific username, but there were instances of general identity-based harassment or harassment toward multiple identities. It is possible that seeing one username with a racial, ethnic, or national identity might, for example, spur someone to share hostility toward multiple identities. In one session in Valorant with the Proud2BMexican username, a player said, “You’re Mexican? Are you bad, like are you a girl? ‘Cuz if you’re a girl, like, y’know… Imma try to hit you up…”

Harassment of Participants with Mexican Username

25% of all play sessions with the Proud2bMexican username included some form of harassment toward Mexican or Latino people.

43% of all play sessions with the Proud2bMexican username included varying degrees of identity-based harassment - and not just toward Mexican people.

60% of the Valorant and Counterstrike 2 play sessions with the Proud2bMexican username included some level of identity-based harassment toward Mexican people and instances of identity-based harassment toward other identities, such as Black, Indigenous, women, and LGBTQ+.

Harassment of Participants with Jewish Username

Slightly more than a third (38%) of all play sessions with the Proud2bJewish username included some form of harassment toward Jewish people (this includes those interpreted as definitely and maybe identity-based harassment).

In Valorant and Counterstrike 2, over half (57%) of the play sessions with the Proud2bJewish username included some level of Jewish identity-based harassment.

Harassment included calling someone a “Jew” in a derogatory manner and participants getting shot at intentionally by teammates.

Harassment of Players with Muslim Username

Around 26% of all of the play sessions with the Proud2bMuslim username included some form of harassment toward Muslim or other identities, including Jewish people (20% of all sessions with identity-based harassment).

Harassment of Participants with Chinese Username

Around 25% of the play sessions with the Proud2bChinese username included some form of harassment toward Chinese people or others, including Jewish and Black people.

Bystander Behavior and Bans

Participants sometimes observed another player being reported, muted, or banned. This was typically due to general curses and disruptive gameplay behavior (purposely not playing or the previously mentioned Bible verses) rather than being related to slurs or identity-based harassment.

Only one of the participants initiated a report or voted to block another player specifically due to racism (in Counterstrike 2 with the Proud2BMexican username). They said, “[username] started out by calling me ‘lil Mexican (N-word)’ and he repeated it like two thousand times over the course of the match until he got bored and just started saying the n-word not related to me at all.” However, participants were told to just play the game and not interact otherwise, which may have affected their reporting frequency.

Similarly, there was only one instance of a collective vote to kick someone out that was initiated by a player other than a participant (this was for someone using racist slurs in a Proud2BJewish Counterstrike 2 game). In a few cases, the participant-players themselves were subjected to a vote to kick them out despite not doing anything inappropriate (one was actually voted out). Despite all the harassment observed in the experimental sessions, only one session involved a system-wide report where another player was removed due to their identity-based harassment.

There were only three instances (out of all 249 experimental sessions) where players verbally stood up to someone who was bullying or harassing another person based on their identity.13 One was when a Proud2BIsraeli participant in Valorant was called a “supporter of genocide and ‘israshit’ and someone…stood up for me in the game.” The second was when someone in a Proud2BMexican session in Counterstrike 2 was saying the n-word, and another player said, “I hope you’re black bro you cannot be saying that.” The participant interpreted this as possibly standing up to the racist behavior. Finally, in a Proud2BMuslim play session in Counterstrike 2, “A player of the opposing team said as they won the round, ‘These Ni***rs suck,’ and when asked who sucks by another player standing up for those not speaking, he repeated it and spelled it out.”

In other instances, two additional people stood up for players not doing well in the game, but this was unrelated to identity. In one experimental session, a player stood up to a (non-racist) aggressor, with the participant-player commenting, “You don’t often see that.” Otherwise, the participants noted that when they were harassed based on their username, no one else stood up for them. One participant said, “Other players were either…silent and ignored the general harassment I experienced, or… just as toxic/harassing as the other people.” Another participant noted that the harassment seemed contagious. They said, “The harassment actually spurred on other players to harass each other.”

It's Not All Bad: Prosocial Behavior

We also observed prosocial behavior, though most related to gameplay and not identity expression. Overwatch 2 (69%) and Counterstrike 2 (55%) had higher overall rates of positive interactions than Fortnite (40%) and Valorant (36%). Most of these were either “good game” (gg) sentiments in chat or endorsements (in the case of Overwatch 2) or, in the case of Counterstrike 2, votes to kick out players who were sharing Bible sermons over the voice chat (the sermons are seen as disruptive because they make it hard for players to hear each other strategize or concentrate on the gameplay).

Examples of prosocial behavior in Valorant, Counterstrike 2, Overwatch 2, and Fortnite:

Teamwork

There was teamwork and cooperation in many of the sessions. In a Fortnite session with the username Proud2bJewish, for instance, a participant said, “[Username’s] reboot card was trapped in a spinning blade trap and no one could get to it. All the teammates tried getting it despite that and couldn’t, and she was very understanding about it, which you never see in online games. She was extremely communicative throughout the whole game and even stayed after her reboot card expired to cheer on the team, and we were alive for like ten more minutes.…Her male friend/boyfriend asks what she’s doing, and she says, ‘I’m dead but I’m staying and supporting my squad because that’s what you do in Fortnite.’”

Some of the gameplay sessions included both positive and negative experiences. However, many displayed no notable antisocial or prosocial behavior at all: no speaking, no chats, and no disruptive or prosocial gameplay. There was just competitive gameplay.

Recommendations

Recommendations for industry

It is crucial that company executives, game developers, community managers, and other people involved in designing game environments do the following:

Implement industry-wide policy and design practices to better address how hate targets specific identities. Hate manifests differently based on the identity of the person being targeted. Companies should look at their policy and design solutions taking this into account. They should work with trusted industry partners and experts (such as ADL) representing impacted communities, to ensure that these policies are effectively designed and implemented across their platforms.

Incentivize and promote prosocial behavior through design. In addition to countering hate, game companies must encourage players to engage positively with one another. For example, Overwatch 2 enables endorsements, which could be used to recognize another player’s excellent gameplay as well as actions that make the community safer, such as standing up to hate. As we noted in our previous report: “Use social engineering strategies such as endorsement systems to incentivize positive play.”

Increase, not cut, resources. Rather than reducing Trust & Safety headcount, as many companies have done in 2023 and 2024, companies must expand resources in this critical area. Failure to do this will eventually impact player retention and spending, as many players will leave if a game seems to not care about addressing hate and harassment. Some may choose not to play at all.

Improve reporting systems and support for targets of harassment. Gaming companies cannot stop at creating policies against online abuse: they must also enforce them comprehensively and at scale. Having effective, accessible reporting systems is a crucial part of this effort as it allows users to flag abusive behavior and seek speedy assistance. Companies must ensure they are equipping their users with the controls and resources necessary to prevent and combat online attacks. For instance, users should have the ability to block multiple perpetrators of online harassment at once, as opposed to having to undergo the burdensome process of blocking abusive users individually. Gaming companies must connect individuals reporting severe hate and harassment to human employees in real time during urgent incidents.

Strengthen content moderation tools for in-game voice chat. Bad actors in gaming spaces often evade detection by using voice chat to target other players in online games. To date, the tools and techniques that detect hate and harassment in voice chat lag behind those that moderate text communication (though some companies have made progress in the last year). Although this requires an investment of resources, companies must address this glaring loophole to ensure their users’ safety.

Release regular, consistent transparency reports on hate and harassment. Unlike mainstream social media companies, games companies—with few exceptions such as Xbox, Roblox and Wildlife Studios—have not published reports about their policies and enforcement practices. Transparency reports should include and share data from user-generated, identity-based reporting, aggregating data around how identity-based hate and harassment manifest on their platforms and target users. For guidance, companies can consult the Disruption and Harms in Online Gaming Framework produced by ADL and the Thriving in Games Group (formerly the Fair Play Alliance), which provides a set of common definitions for hate and harassment in online games.

Submit to regularly scheduled independent audits. Gaming companies should allow third-party audits of content-moderation policies and practices on their platforms so the public knows the extent of in-game hate and harassment. While transparency legislation efforts are still underway, gaming companies should voluntarily provide independent researchers and the public access to this essential data. In addition to promoting accountability, independent audits pave the way for gaming companies to assess areas for improvement with data-based findings.

Include metrics on online safety in the Entertainment Software Rating Board’s (ESRB) rating system of games. The ESRB provides information about a game’s content, allowing adults, parents, and caretakers to make informed decisions about the games that their children may play. Although game companies caution players that the gameplay experience may depend on the behavior of other players, no components related to the efforts of online games to keep their digital social spaces safe exist in the ESRB ratings at present. The ESRB should devise and implement these kinds of ratings across all the online games they review.

Recommendations for government

In February 2024, ADL released its annual report, Hate is No Game: Hate and Harassment in Online Games 2023. This report highlights hate and harassment in games and further demonstrates the need for governments to take a more active role in fighting hate and harassment in games. As in our February report, ADL recommends:

Prioritize transparency legislation in digital spaces and include online multiplayer games. States are beginning to introduce, and some are successfully passing, legislation to promote transparency about major social media platforms' content policies and enforcement (see California’s AB 587). Legislators at the federal level must prioritize the passage of equivalent transparency legislation and include specific considerations for online gaming companies. Game-specific transparency laws will ensure that users and the public can better understand how gaming companies are enforcing their policies and promoting user safety.

Enhance access to justice for victims of online abuse. Hate and harassment exist online and offline alike, but our laws have not kept up with increasing and worsening forms of digital abuse. Many forms of severe online misconduct, such as doxxing and swatting, currently fall under the remit of extremely generalized cyberharassment and stalking laws. These laws often fall short of securing recourse for victims of these severe forms of online abuse. Policymakers must introduce and pass legislation that holds perpetrators of severe online abuse accountable for their offenses at both the state and federal levels. ADL’s Backspace Hate initiative works to bridge these legal gaps and has had numerous successes, especially at the state level.

Establish a National Gaming Safety Task Force. As hate, harassment, and extremism pose an increasing threat to safety in online gaming spaces, federal administrations should establish and resource a national gaming safety task force dedicated to combating this pervasive issue. Like task forces that address doxxing and swatting, this group could promote multistakeholder approaches to keeping online gaming spaces safe for all users.

Resource research efforts. Securing federal funding that enables independent researchers and civil society to better analyze and disseminate findings on abuse is vitally important in the online multiplayer gaming landscape. These findings should further research-based policy approaches to addressing hate in online gaming.

Recommendations for the public, parents and caregivers

We all need to help advocate for safe, moderated, and caring communities in our virtual civic spaces.

Everyone should be aware of the impact of toxic discourse and harassment in our spaces, including online multiplayer games.

We should hold the government and industry accountable. We should demand safety and care in our online multiplayer spaces and ask government and industry leaders to protect us and our families.

Prioritize having up-to-date policies and practices. As more and more people spend a significant part of their social and civic lives online in environments like online multiplayer spaces, we must prioritize having up-to-date policies and practices to ensure we are protected from harassment.

We should not accept that “games will be games” and toxic cultures are to be expected. Hate and harassment are not inevitable. We should urge game companies to do better for their communities and game designs.

Parents of online competitive game-playing children (whether eight or 18 years old) should consider the types of interactions kids might have in these games. We should have engaged discussions around issues of safety online, such as what their child should do if they are harassed. We should also consider how to encourage kids to stand up to harassers (such as by reporting any harassment) and not propagate harassment.

Advocate for games that inspire health, care, and learning. Games can support the wellbeing of people, as well as negatively affect mental health. We must advocate for games to inspire good health rather than have a problematic impact.

We should encourage and incentivize resilient and caring communities, offline and online, to model prosocial civic behavior wherever people interact.

Recommendations for researchers

There are many gaps in the research surrounding harassment and hate in online games. We need more studies investigating what is happening inside these games and how to design interventions to promote prosocial play.

Some specific examples are:

Investigate these games more deeply, with a larger number of players and play sessions. One possibility is expanding this investigation to include other online games (including non-competitive ones or ones aimed at other demographics). For instance, how does harassment in Roblox games, or in games that are not first-person shooters, compare to the harassment found in other games?

Study different variables, such as:

Longer gameplay (2 hours, not 1) or other increments of data (30-minute increments or by game)

Playing well (intentionally)

Playing poorly (intentionally)

Different expert levels for each of the games (novice vs. expert)

Focusing on only the lobbies vs. gameplay matches

Speaking aloud by people with different gender or racial identities

Use voice chat with non-English languages and non-U.S. accents, such as Hebrew language or Israeli accents.

Try out additional usernames, such as additional ethnicities or nationalities or non-ethnic usernames (Proud2bKnitting; Proud2bChef; Proud2bWriter)

Investigate specific game elements

Study the specific elements (e.g., design, moderation, diversity level, game environment, players) that influence how much a game community does or does not encourage hate or harassment versus prosocial behavior.

Appendix

Methodology

For two months in late 2023 and early 2024, 15 university students and recent graduates participated as game players in three online competitive first-person shooter games (Valorant, Counterstrike 2, and Overwatch 2) and played as Proud2BJewish, Control, and Proud2BMuslim. In summer 2024, 12 participants played Valorant, Counterstrike 2, Fortnite, and Overwatch 2 and played as Proud2BJewish, Proud2BIsraeli, Proud2BMexican, Proud2BChinese, Proud2BMuslim, and a Control username. The participants recorded their gameplay and filled out a short checklist after each hour of gameplay.

Choice of Game

The four games were chosen because they are popular multiplayer first-person shooter games (Counterstrike 2 is the top-played game on Steam, for instance), they are all free-to-play, and all four appear in the previous Playing with Hate report from ADL (2023). Counterstrike and Valorant were two games with the highest reported harassment and online hate (86% and 84%). As a comparison, Overwatch 2 is also in the report and slightly lower on the list, with harassment reported by 73% of the respondents. Fortnite was at 74% in that report.

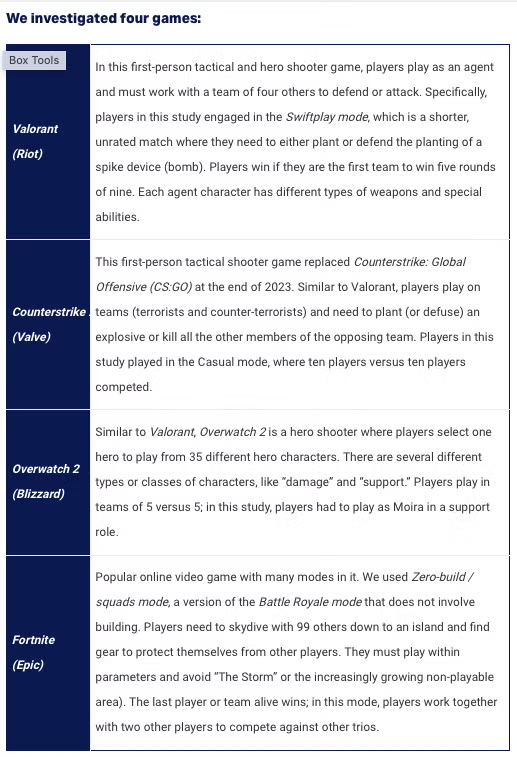

We investigated four games:

Valorant (Riot): In this first-person tactical and hero shooter game, players play as an agent and must work with a team of four others to defend or attack. Specifically, players in this study engaged in the Swiftplay mode, which is a shorter, unrated match where they need to either plant or defend the planting of a spike device (bomb). Players win if they are the first team to win five rounds of nine. Each agent character has different types of weapons and special abilities.

Counterstrike 2 (Valve): This first-person tactical shooter game replaced Counterstrike: Global Offensive (CS:GO) at the end of 2023. Similar to Valorant, players play on teams (terrorists and counter-terrorists) and need to plant (or defuse) an explosive or kill all the other members of the opposing team. Players in this study played in the Casual mode, where two teams of ten players competed against each other.

Overwatch 2 (Blizzard): Similar to Valorant, Overwatch 2 is a hero shooter where players select one hero to play from 35 different hero characters. There are several different types or classes of characters, like “damage” and “support.” Players play in teams of 5 versus 5; in this study, players had to play as Moira in a support role.

Fortnite (Epic): A popular online video game with many modes. We used Zero-build / squads mode, a version of the Battle Royale mode that does not involve building. Players need to skydive with 99 others down to an island and find gear to protect themselves from other players. They must play within parameters and avoid “The Storm” (the steadily growing non-playable area). The last player or team alive wins; in this mode, players work together with two other players to compete against other trios.

Usernames Applied

Participants played each game in hour-long increments, using one of six different usernames:

· Proud2BMuslm or Proud2BMuslim or ProudToBeMuslim or Proud2bMuslim

· Proud2BJwish or Proud2BJewish or ProudToBeJewish or Proud2bJewish

· Proud2BChinese or ProudChinese

· Proud2BIsraeli or ProudIsraeli

· Proud2BMexican or ProudMexican

· Proud2B4399 or User2258999 or User4399 (“control” usernames with randomized numbers)

Usernames were sometimes shortened due to character limit constraints for one of the games and sometimes altered slightly if the game wouldn’t let them use that exact username.

Participants played as a “new” player for Valorant and Counterstrike 2. Participants played as either new or expert players for Overwatch 2 and Fortnite, if possible. Participants played Fortnite in Battle Royale/No Build and Squads mode.

Playing as a “new” player meant the participant created a brand-new account for that game and started with one of the six usernames. Playing as an “expert” for Overwatch 2 meant that players played on a Platinum 1 level account, which was shared among the “expert” players. Participants were randomly assigned to games and conditions (usernames), but they were sometimes reassigned if a game would not play on their computer or they could not get the game to work properly. They were also assigned to new or expert depending on their experience level with Overwatch 2. Note: We did not find any differences in new versus expert for Overwatch 2, so we aggregated the results and just reported on Overwatch 2 as a whole.
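The report does not describe how the random assignment was carried out. As a rough illustration only, a seeded random draw of a game and a username condition per participant might look like the sketch below. The function name `assign_conditions`, the participant labels, and the use of a single canonical spelling for each username are all assumptions, not details from the study.

```python
import random

# Games and username conditions named in the report; one spelling per
# condition is used here for simplicity (an assumption).
GAMES = ["Valorant", "Counterstrike 2", "Overwatch 2", "Fortnite"]
USERNAMES = [
    "Proud2BJewish",
    "Proud2BIsraeli",
    "Proud2BMexican",
    "Proud2BChinese",
    "Proud2BMuslim",
    "Control",
]


def assign_conditions(participants, seed=None):
    """Hypothetical helper: draw one (game, username) pair per participant."""
    rng = random.Random(seed)  # a fixed seed makes the draw reproducible
    return {p: (rng.choice(GAMES), rng.choice(USERNAMES)) for p in participants}


# Hypothetical participant labels, not identifiers from the study.
assignments = assign_conditions(["P01", "P02", "P03"], seed=2024)
for participant, (game, username) in assignments.items():
    print(participant, game, username)
```

In practice, a manual reassignment step like the one described above (for games that would not run on a participant's computer) would follow the initial draw.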

For each of the games, participants played without speaking or typing to ensure that the results were not affected by the perception of intersectional identities (such as those based on gender identity or racial identity alongside the username). They enabled team voice chat and recorded what was happening on the screen and in the team voice chat.

Procedures

Participants filled out a checklist for each game hour they played, where they recorded which game they played, which username they used, and what types of behaviors and chats (voice, text) occurred during that hour. For each one-hour increment, they wrote down all instances of harassment, including name-calling, problematic virtual gestures, general slurs, and other disruptive behavior. Examples include someone calling another person a “Jew” in a derogatory way or purposely attacking their own teammate by shooting at them or their corpse.

Participants also wrote down all instances of prosocial behavior, including friendship, mentorship, and praise from teammates. For instance, players noted if they got endorsements from other players or if others stood up against disruptive behavior.

Participants also engaged in a short interview with the researcher. All completed checklists and interviews were coded using an iteratively developed coding scheme. Each instance of problematic or prosocial behavior reported by the participants was assigned a code, such as “slurs (to the participants)” or “other players endorsed a player.”
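To make the coding step concrete, the sketch below shows one way coded sessions might be tallied. It is a minimal illustration under stated assumptions: the record layout, the function names, and the grouping of codes into "harassment" and "prosocial" sets are hypothetical, and the example records are placeholders rather than data from the study. Only the two code labels themselves are quoted from the text.

```python
from collections import Counter

# The two code labels are quoted in the text; grouping them into
# harassment and prosocial sets is an assumption for illustration.
HARASSMENT_CODES = {"slurs (to the participants)"}
PROSOCIAL_CODES = {"other players endorsed a player"}


def tally_codes(sessions):
    """Count how often each code appears across all coded sessions."""
    counts = Counter()
    for session in sessions:
        counts.update(session["codes"])
    return counts


def share_with_any(sessions, code_set):
    """Return the fraction of sessions with at least one code from code_set."""
    if not sessions:
        return 0.0
    hits = sum(1 for s in sessions if code_set.intersection(s["codes"]))
    return hits / len(sessions)


# Placeholder records, NOT data from the study.
example_sessions = [
    {"condition": "A", "codes": ["slurs (to the participants)"]},
    {"condition": "B", "codes": []},
    {"condition": "C", "codes": ["other players endorsed a player"]},
    {"condition": "D", "codes": ["slurs (to the participants)",
                                 "other players endorsed a player"]},
]

if __name__ == "__main__":
    print(tally_codes(example_sessions))
    print(share_with_any(example_sessions, HARASSMENT_CODES))
```

Counting sessions with at least one harassment code, rather than counting raw instances, matches the way the report summarizes results per game session. Either measure could be computed from the same records.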

Participants

All participants in this study are university students, recent graduates, or young adults. There was a mix of ages and gender identities, with about half of the participants identifying as men and the other half identifying as women and/or non-binary. Participants volunteered for the study based on an advertisement shared on Handshake, a career platform, and an advertisement on a games-major server in Discord. The participants have a variety of experience levels (novice to expert) with the games played and with online competitive multiplayer games in general.

Contextual Considerations

Context matters in how actions or language are interpreted in these games. Calling someone a “Jew” or “Jewish” in the game in a disparaging way, for instance, was seen as being problematic or harassing behavior. This was used as a slur in a few of the games played. In addition, some participant-student players initially dismissed slogans and words like “Free Palestine” or “Chinese Food” as not bothering them, perhaps because they themselves are not Jewish or Chinese and/or are used to hearing such things in game environments. It was only after speaking to a friend, as one of the players noted, or reflecting later, that they realized saying “Free Palestine” to a Jewish person or “Chinese Food” to a Chinese person might be viewed as an intentional insult.

Moreover, the same behavior can, in some instances, be seen as positive (“tea-bagging” after a victory), but in other instances, it could be seen as problematic or harassing (tea-bagging after a defeat, or tea-bagging while saying other words). Participants commented on whether being targeted by other players was due to the gameplay (for example, being shot at by the opposing team in a competitive first-person shooter) or due to their expressed identity (for example, their own teammates shooting at them). These nuances might make it difficult for artificially intelligent (AI) moderation tools based on large language models (LLMs) to pick up hateful behavior and marginalization. All of this suggests that moderators and researchers (human or AI) cannot just listen or watch to learn about hateful behavior and speech in online games. We need to understand the context and the norms of a particular game’s culture to fully realize how someone may or may not be harassed and marginalized in these spaces.

In some of the games, there were racist or biased remarks directed at identities beyond the ones expressed in this study. This suggests that players may still be targeted even if they do not express their identities. For instance, in some of the games, players were asked if someone was gay, a woman, Black, Mexican, Chinese, Indian, Indigenous, or Jewish, even if these identities were not expressed through any players’ avatars, chat, voice, usernames, gameplay, or image.

The participant-players were sometimes concerned that the expression of one’s identity (in the game) as Israeli, Jewish, or Muslim would cause them problems in the game or would be undesirable. Two participants dropped out of this study because they did not want to play as “Proud2BIsraeli,” though they were okay with the other usernames. One participant noted that they assumed other players thought they were “a troll account” because of the Jewish username and because they were not good at the game. Other participants shared that they initially felt trepidation about using these usernames in these spaces.

Participant-players, however, shared their “pleasant” surprise that the gameplay sessions “weren’t that bad.” A few participants mentioned “having a good time playing the game” with the usernames.

Despite witnessing antisemitic, Islamophobic, and other racist behavior and language, participants seemed inured to its effects. They said that they had often seen “much worse” behavior in previous play sessions with these games (with their typical username).

These results relate to other studies on the normalization of toxic culture. Adinolf & Turkay (2018) interviewed game players in a university esports club and found that participants seemed apt to rationalize toxic behaviors and language as part of the competitive gaming culture.14 Likewise, Beres et al. (2021) explored player perceptions of toxic behavior and found that players may not report negative behavior because they think it is fine, typical, or not concerning.15 This is not necessarily due to resiliency, but perhaps due to a feeling of helplessness, as the impacts of identity-based hate and harassment in games and beyond have been shown to be damaging.16 Despite observing hate, players themselves may not be worried about hate speech and marginalization in games, which is worrying in itself.

Additional Analysis

Limitations & Opportunities

This investigation helps to understand the intersection between identity and expression of identity with behavior in online multiplayer games. However, some limitations have been identified:

The sample size is small. While we have almost 250 hours of total gameplay, we do not have many sessions per game and condition (username). We recommend expanding on this study with further play sessions and games.

Since games are so dynamic, they are difficult to control due to many factors. Participants were asked not to speak or do anything that would otherwise relay their identity (such as their gender or racial identity through their voice). However, we might want to think about the intersectional effects. How are women who are Jewish harassed, or disabled people who are Mexican, or Black men who are Muslim, or Chinese people who are gay, etc.?

We cannot control for the people who are playing with or against us and the game matches we join.

Each game is unique, so we cannot control for various emergent gameplay factors.

It’s hard to control “winning” or “losing” the games as we play as a team with others.

We also played at different times and on different days, which could affect results.

There was a lack of consistency across multiple game players in terms of gameplay style and analysis of the interactions.

Some participants playing the games were playing as “New” to a game, but were experts at the game.

Some participants playing these games were completely new to the game.

Participants had different interpretations of the same events and interactions.

Some participants ascribed perceived biases and harassment to poor gameplay rather than underlying bias.

While all players used Moira in Overwatch 2 as often as possible, future research should also control for the Agent used in Valorant, as darker-skinned avatars garner more racial bias from other players in multiplayer games.17

The length of the gameplay was constrained to only one hour for each game session.

Some game sessions were completely quiet and focused just on the game.

Others were more interactive and social.

Longer gameplay sessions would help to understand what is happening in these games over time.

One hour of play allows for only about three game matches.

Ethical issues in taking on an identity that is not your own.

Most participants were not Chinese, Mexican, Israeli, Jewish, or Muslim.

Some mentioned they would be uncomfortable taking on an identity if they had to speak or chat from the perspective of a person from that identity.

While participants had a variety of gender identities and racial identities, they may not have been affected in the same way by the slurs and harassment heard.

How do we protect the participants and any game players from unnecessary harm when playing these games as a Jewish, Chinese, Mexican, Israeli, or Muslim person, given that expressing one’s identity as such has a high probability of resulting in harm? For instance, one participant identified as one of those identities and was afraid to express it in the game. Another participant identified as one of those identities and only wanted to express that one in the game. However, participants were randomly assigned to each condition and game.

Technological issues and other snags.

Participants had a variety of technological constraints, including drivers missing from their computers so they couldn’t play the game and computers overheating due to the high processing needs of the games.

Some participants ran out of video storage space on their computers.

Notes

1. We distinguished types of harassment (general vs. identity-based) by determining whether the harassment related to identity, and hatred or bias toward an identity, or whether it was related to other forms (e.g., trolling, disruptive play, griefing). We created a coding scheme based on previous definitions from the ADL and the Thriving in Games framework, outlined in more detail in the Appendix.

2. Beres, N. A., Frommel, J., Reid, E., Mandryk, R. L., & Klarkowski, M. (2021). Don’t you know that you’re toxic: Normalization of toxicity in online gaming. In Proceedings of the 2021 CHI conference on human factors in computing systems.

3. K. Schrier. (2021). We the Gamers: How Games Teach Ethics and Civics, Oxford University Press, https://global.oup.com/academic/product/we-the-gamers-9780190926113?cc=us&lang=en&; Johannes, N., Vuorre, M., & Przybylski, A. K. (2020, November 13). Video game play is positively correlated with well-being. https://doi.org/10.1098/rsos.202049.

4. K. Schrier. (2021). We the Gamers: How Games Teach Ethics and Civics, Oxford University Press, https://global.oup.com/academic/product/we-the-gamers-9780190926113?cc=us&lang=en&

5. https://www.theesa.com/resources/essential-facts-about-the-us-video-game-industry/2024-data/

6. https://financesonline.com/number-of-gamers-worldwide/

7. Ibid.

8. https://www.adl.org/resources/report/hate-no-game-hate-and-harassment-online-games-2023

9. Kowert, R., Kilmer, E. & Newhouse, A. (2024). Culturally justified hate: Prevalence and mental health impact of dark participation in games, HICSS ’24, accessed at: https://scholarspace.manoa.hawaii.edu/server/api/core/bitstreams/ad85c3c9-5a16-426d-a694-71f426d9fd18/content

11. https://www.theesa.com/resource/2022-essential-facts-about-the-video-game-industry/

12. K. Schrier. (2021). We the Gamers: How Games Teach Ethics and Civics, Oxford University Press, https://global.oup.com/academic/product/we-the-gamers-9780190926113?cc=us&lang=en&; Steinkuehler, C., Squire, K., & Barab, S. (Eds.) (2012), Games, Learning & Society, Cambridge University Press.

13. Note that the participants were told just to play, and not to talk or type about anything other than gameplay. But one participant did initiate a block due to constant harassment and racial slurs, which automatically muted the player in Counterstrike 2.

14. Adinolf, S. & Turkay, S. (2018). Toxic behaviors in eSports Games: Player perceptions and coping strategies. CHI PLAY ’18. https://dl.acm.org/doi/10.1145/3270316.3271545

15. Beres, N. A., Frommel, J., Reid, E., Mandryk, R. L., & Klarkowski, M. (2021). Don’t you know that you’re toxic: Normalization of toxicity in online gaming. In Proceedings of the 2021 CHI conference on human factors in computing systems.

16. Kowert, R., Kilmer, E. & Newhouse, A. (2024). Culturally justified hate: Prevalence and mental health impact of dark participation in games, HICSS ’24, accessed at: https://scholarspace.manoa.hawaii.edu/server/api/core/bitstreams/ad85c3c9-5a16-426d-a694-71f426d9fd18/content

17. Cloud-Buckner J., Sellick M., Sainathuni B., Yang B., Gallimore J. (2009). Expression of personality through avatars: Analysis of effects of gender and race on perceptions of personality. In J. A. Jacko (Ed.), Human-Computer Interaction, Part III (Vol. HCII 2009, pp. 248-256). Springer-Verlag Berlin Heidelberg. https://doi.org/10.1007/978-3-642-02580-8_27