Nathaniel Rabb, Alexander M. Levontin, Adam Berinsky, Gordon Pennycook, Thomas H. Costello*, David G. Rand*

*Corresponding authors: [email protected], [email protected]

Antisemitic conspiracy theories have been central to anti-Jewish prejudice for centuries. Given their longevity and deep ties to religious, ethnic, and ideological identities, debunking them presents a particularly difficult challenge. Here, we test whether having believers discuss their chosen antisemitic conspiracy with a large language model (LLM) prompted to debunk such conspiracies can reduce belief and improve attitudes toward Jews. In a preregistered experiment (N = 1,224 U.S. adults endorsing an antisemitic conspiracy theory), participants were randomized to a dialogue with an LLM (Claude 3.5 Sonnet) prompted to debunk their belief, or one of two control conditions. The debunking dialogue substantially reduced belief in antisemitic conspiracies relative to controls, and increased favorability toward Jews among initially unfavorable participants. These findings show that even deeply rooted, identity-linked conspiracies can be effectively debunked through factual correction, offering new insight into prejudice reduction and suggesting that LLM chatbots may help reduce antisemitism at scale.

Introduction

Antisemitism and conspiracy theories about Jews have been deeply intertwined for centuries (Hornsey et al., 2023). From the medieval “Blood Libel,” which accused Jews of murdering Christian children for ritual purposes, to claims from early Christians that Jews conspired with the Romans to kill Jesus, conspiratorial narratives have long portrayed Jews as secretly malevolent and powerful. Modern times have seen the same themes manifest in new guises, with theories involving Jews exerting global domination and controlling the media, effecting demographic replacement of white Christians, exaggerating or fabricating the Holocaust, and being responsible for the COVID-19 pandemic (ADL, 2020, 2021; SPLC, 2022). Furthermore, the overlap between antisemitic conspiracy theories and more general antisemitism (e.g., Allington et al., 2023; Nyhan & Zeitzoff, 2018) suggests that prejudice may drive belief in antisemitic conspiracy theories.

Given this long history of conspiratorial thinking about Jews, the deep connection that such beliefs can have to religious, ethnic, and ideological identities, and the potential role of prejudice as a driver of belief, debunking antisemitic conspiracies presents a particularly daunting challenge. Recently, it has been shown that dialogues with a large language model (LLM) prompted to debunk conspiracies can produce large and durable decreases in believers’ confidence in classic conspiracies (e.g. JFK assassination, government hiding evidence of aliens) (Costello et al., 2024). Importantly, this effect is primarily driven by the factual content of the LLM’s responses (Costello et al., 2025), and persists even if participants believe they are talking to an expert rather than AI (Boissin et al., 2025). Here, we ask whether this approach can similarly reduce belief in antisemitic conspiracies—or if these theories’ deep roots and connections to identity and prejudice make them unresponsive to factual claims and counterevidence. However, the apparent link between conspiracism about Jewish people and antisemitism also provides an opportunity to ask a potentially even more consequential question: Does debunking antisemitic conspiracy theories improve more general attitudes towards Jews? Beyond the practical significance of intervening on antisemitic views, this research seeks to test whether factual evidence relating to misbeliefs can have downstream impacts on prejudicial attitudes towards Jews.

To investigate this issue, we conducted a pre-registered experiment in which U.S. adults who endorsed at least one of a set of six antisemitic conspiracy theories (N = 1224) were asked to provide free-text descriptions of their belief and the evidence they saw as supporting it and then rated their confidence in that belief. They were then randomly assigned to one of three conditions: (1) a debunking dialogue with an LLM, Anthropic’s Claude 3.5 Sonnet, which was prompted to reduce the participant’s belief in the conspiracy; (2) a control dialogue with the LLM about an unrelated topic (benefits or costs of working from home); or (3) a minimal treatment condition that simply stated that their belief had been identified as a dangerous conspiracy, without providing counter-arguments. Finally, participants re-rated their confidence in the conspiracy belief they had articulated. To assess impacts on antisemitism, participants also rated how favorably they saw various religious and ethnic groups, including Jews, both pre- and post-treatment. See Methods for further details.

Results

Patterns of Antisemitic Conspiracy Endorsement

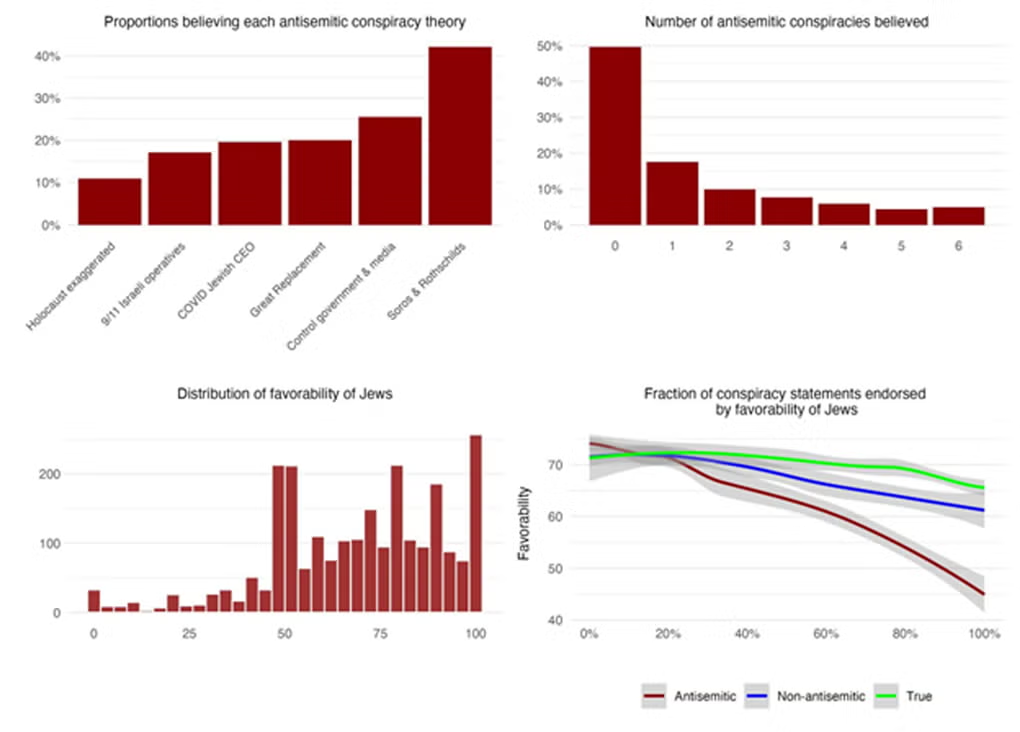

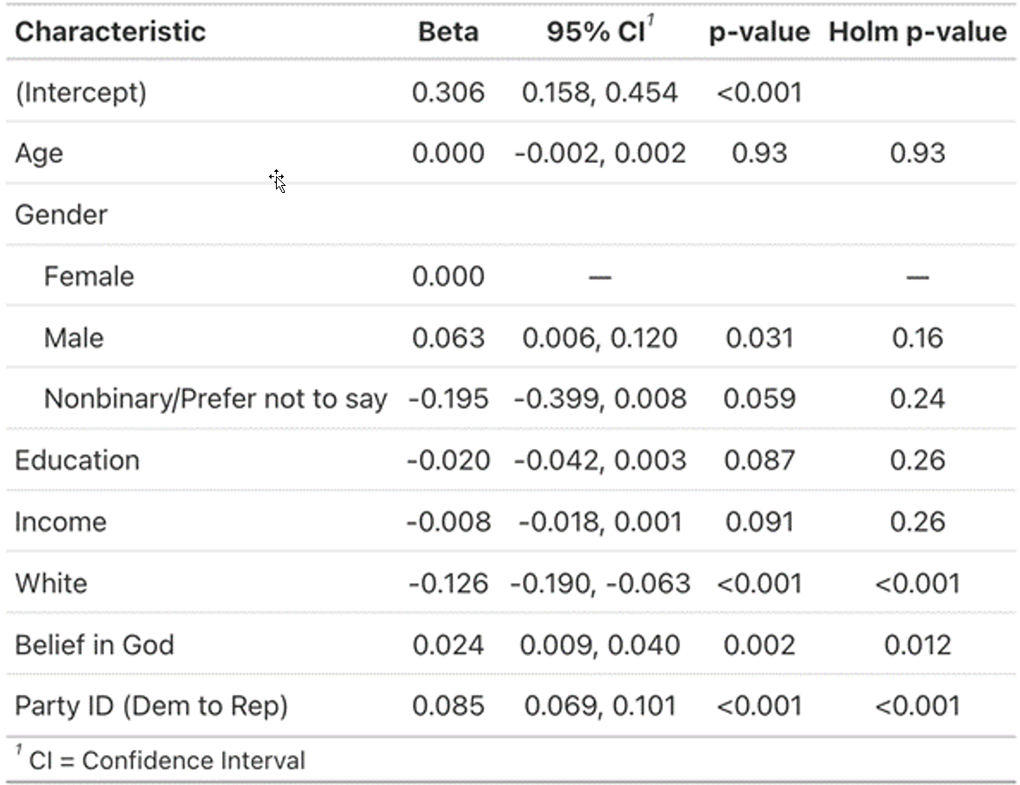

Participants rated their belief in six antisemitic conspiracy items, six non-antisemitic conspiracy theories, and five true conspiracies (see Methods for details). Focusing on the antisemitic conspiracies, Figure 1A shows that the most commonly endorsed theory concerned powerful Jewish families manipulating world events, while the least commonly endorsed theory concerned the unreliability of historical evidence for the Holocaust, although even this item was rated higher than the scale midpoint by 267 participants (>10%). The modal number of antisemitic conspiracy theories endorsed was zero (Figure 1B), but 23% of the initial sample (n = 556) endorsed three or more conspiracies. The distribution of ratings of how favorably participants felt toward Jews (pre-treatment) is shown in Figure 1C. Favorability ratings were negatively related to the number of antisemitic conspiracy theories endorsed (b = -.24, 95% CI[-.27, -.21], p < .001), and this relationship was significantly more negative than the relationship with the number of non-antisemitic conspiracies (interaction between belief and non-antisemitic conspiracy dummy, b = .16, 95% CI[.14, .18], p < .001) or true conspiracies (interaction between belief and true conspiracy dummy, b = .19, 95% CI[.16, .21], p < .001), suggesting a unique link between antisemitic conspiracy beliefs and prejudice against Jews.

An analysis of the content of participants’ specific beliefs (i.e., the conspiracies that participants endorsed in their own words) suggests a high degree of heterogeneity. For instance, 112 participants discussed the Great Replacement conspiracy theory, but among the 35 most frequently made claims about this conspiracy, just one was made by more than twenty participants, eight were made by two participants, and ten were made by exactly one participant. Claims made by exactly one participant constitute 60% of all claims made to support this conspiracy theory, and all other items had similarly long-tailed distributions (Holocaust exaggerated = 48%; 9/11 Israeli operatives = 53%; COVID Jewish CEO = 37%; Control government & media = 44%; Soros & Rothschilds = 31%). As such, the reasons that participants gave to support their conspiracy beliefs were highly variable. Finally, several demographic characteristics were associated with endorsing at least one antisemitic conspiracy theory, relative to endorsing none; see SI section SM3 for details.

Figure 1. Belief in antisemitic conspiracies is widespread and uniquely associated with antisemitism. (A) Percentage of participants endorsing (i.e. rating likelihood of being true > 50) each of the six antisemitic conspiracy theories included in our study. (B) Distribution of the number of antisemitic conspiracies endorsed by participants. (C) Distribution of pre-treatment favorability ratings of Jews. (D) Relationship between percentage of antisemitic conspiracy, non-antisemitic, and true conspiracy theories endorsed pre-treatment and pre-treatment favorability rating of Jews.

Reducing Belief in Antisemitic Conspiracies

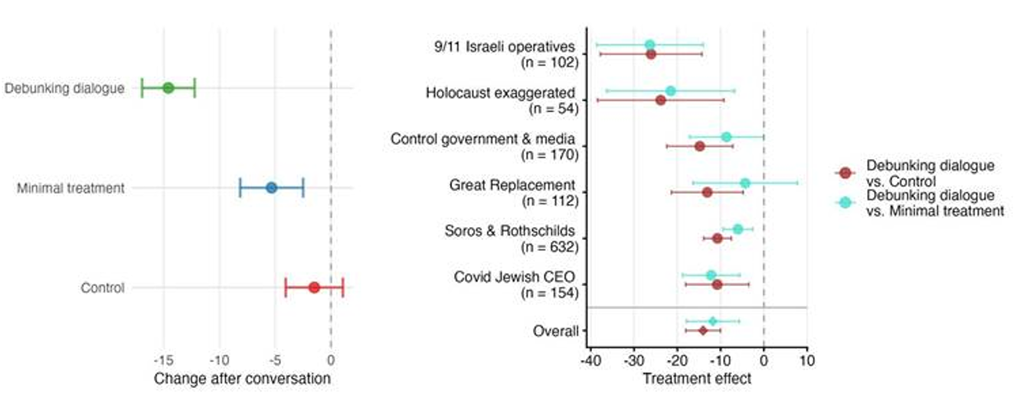

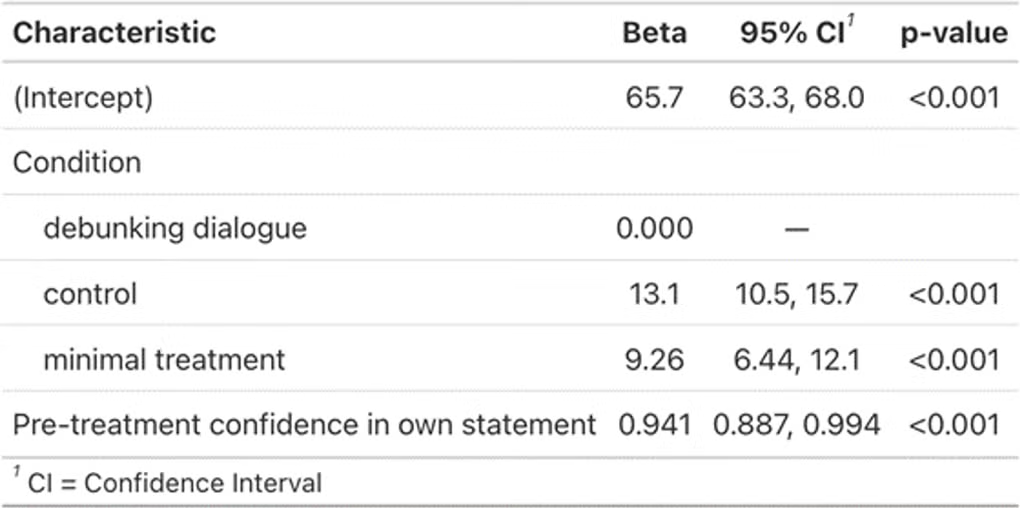

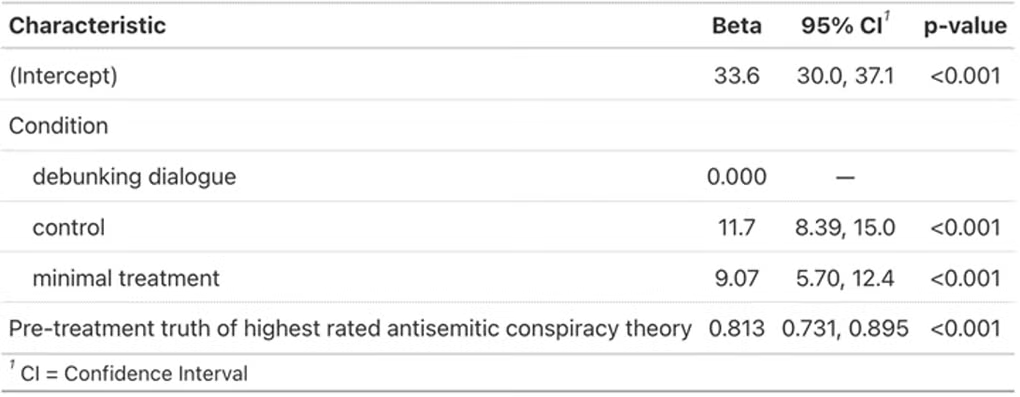

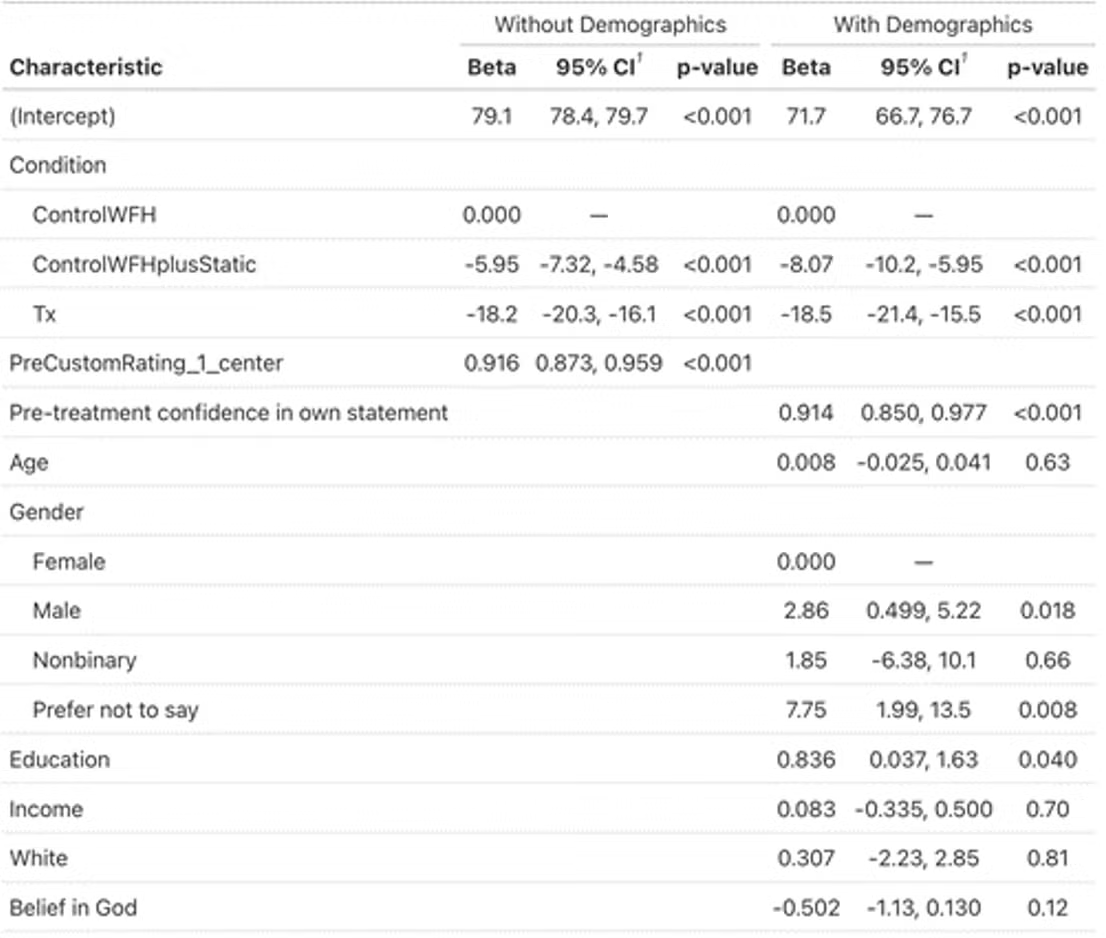

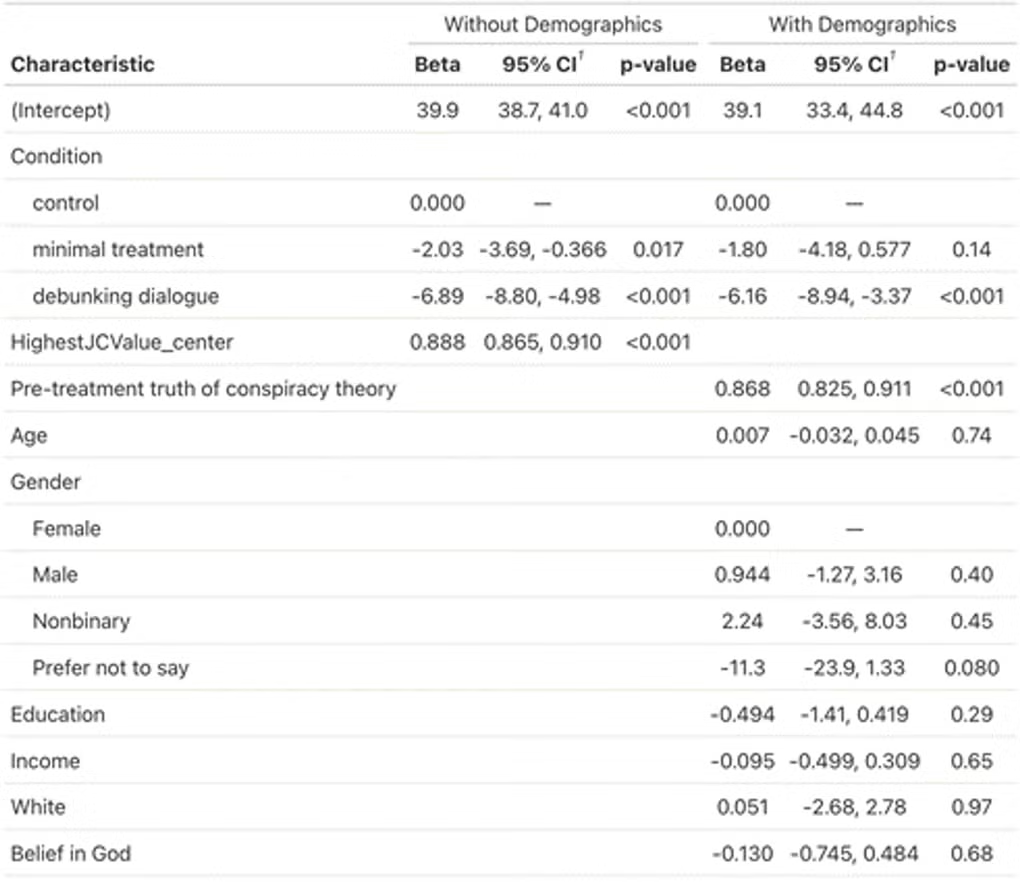

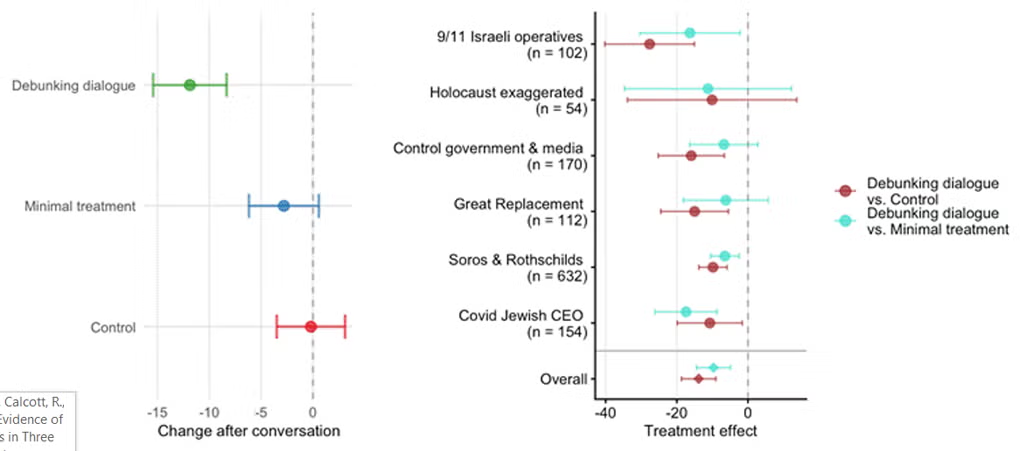

To test the effect of the debunking dialogue on antisemitic conspiracy beliefs, we follow our pre-registered analysis plan and predict post-treatment belief in the participant’s statement about their chosen antisemitic conspiracy theory using condition dummies while controlling for pre-treatment belief. We find that the debunking dialogue reduced belief significantly more than either the control (b = -13.1, 95% CI[-15.7, -10.5], p < .001, d = .64, 16% decrease relative to pre-treatment) or the minimal treatment (b = -9.26, 95% CI[-12.1, -6.44], p < .001, d = .46, 12% decrease relative to pre-treatment); see Figure 2A. These results are robust: the same pattern of results is seen when examining each conspiracy separately (see Figure 2B), when excluding people who reported technical problems or that the LLM mischaracterized their views (see SM5), when making the assumption that participants who dropped out during the dialogue would have shown no change had they completed the study (see SM6), and when adding demographic covariates as controls (SM8). A similar pattern is also seen for ratings of the truth of the antisemitic conspiracy theory that participants provided at the outset of the study when rating the full set of 14 conspiracies (this analysis was not pre-registered, but provides a measure of broader endorsement of this conspiracy theory rather than just the specific statement of it given by the participant): the debunking dialogue reduced rated likelihood of being true significantly more than either the control (b = -11.7, 95% CI[-15.0, -8.39], p < .001, d = .74, 15% decrease relative to pre-treatment) or the minimal treatment (b = -9.07, 95% CI[-12.4, -5.7], p < .001, d = .57, 12% decrease relative to pre-treatment); see SM9.
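As an illustration of the model structure described above (post-treatment belief regressed on condition dummies while controlling for pre-treatment belief), the following is a minimal sketch in plain Python. The data are hypothetical and noise-free, chosen only so that the recovered coefficients are easy to read; they are not the study's data. The function names, the toy effect sizes, and the choice of the control condition as reference category are all assumptions made for this example, and the sketch omits standard errors and confidence intervals.

```python
def solve_linear(a, b):
    """Solve the square system a x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for i in range(n - 1, -1, -1):  # back substitution
        tail = sum(m[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (m[i][n] - tail) / m[i][i]
    return x


def ols(design, y):
    """Least-squares coefficients from the normal equations X'X b = X'y."""
    k = len(design[0])
    xtx = [[sum(r[i] * r[j] for r in design) for j in range(k)] for i in range(k)]
    xty = [sum(r[i] * yi for r, yi in zip(design, y)) for i in range(k)]
    return solve_linear(xtx, xty)


# Hypothetical toy effects (assumed for illustration, NOT estimates from the paper).
EFFECT = {"control": 0.0, "minimal": -4.0, "debunk": -13.0}

rows, post = [], []
for pre in (60.0, 70.0, 80.0, 90.0, 100.0):
    for cond, shift in EFFECT.items():
        # Columns: intercept, pre-treatment belief, debunk dummy, minimal dummy.
        rows.append([1.0, pre, float(cond == "debunk"), float(cond == "minimal")])
        post.append(2.0 + 0.95 * pre + shift)

intercept, b_pre, b_debunk, b_minimal = ols(rows, post)
debunk_vs_control = b_debunk              # control is the reference category
debunk_vs_minimal = b_debunk - b_minimal  # contrast between the two dummies
print(debunk_vs_control, debunk_vs_minimal)
```

With the control condition as the reference category, the coefficient on the debunking dummy is the debunking-versus-control contrast, and the debunking-versus-minimal contrast is the difference between the two dummy coefficients.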

Figure 2. The LLM debunking dialogue reduces belief in antisemitic conspiracies relative to the control and to simply stating that the belief is an antisemitic conspiracy theory. (A) After the LLM debunking dialogue (green), participants significantly reduce belief in their stated antisemitic conspiracy theory whereas there is a much smaller decrease after the minimal treatment (blue) and virtually no change after the control (red). (B) This effect is not specific to any one antisemitic conspiracy theory: the debunking dialogue effect was more negative relative to control (maroon) and relative to minimal treatment (aqua) across conspiracies.

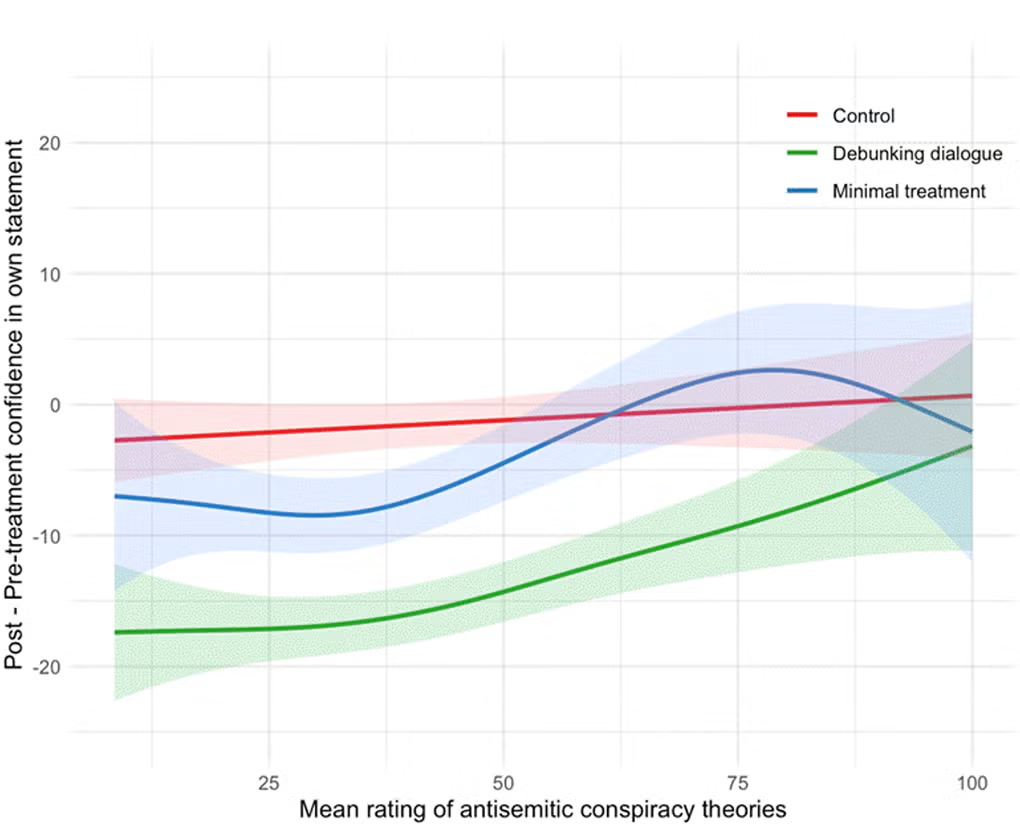

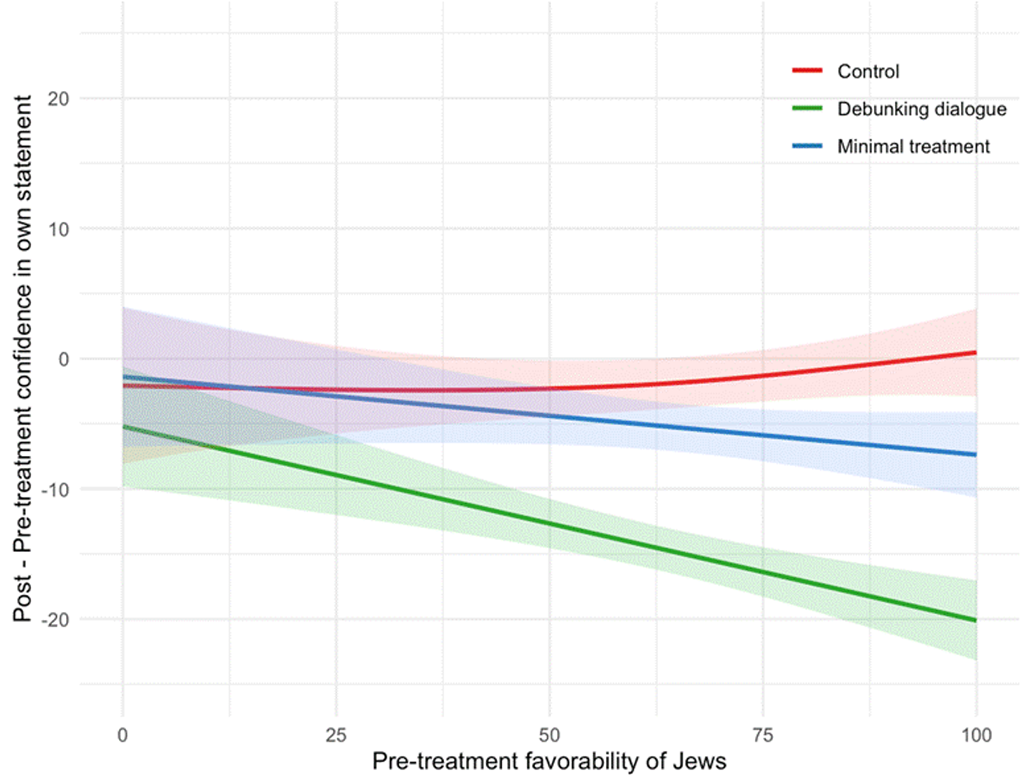

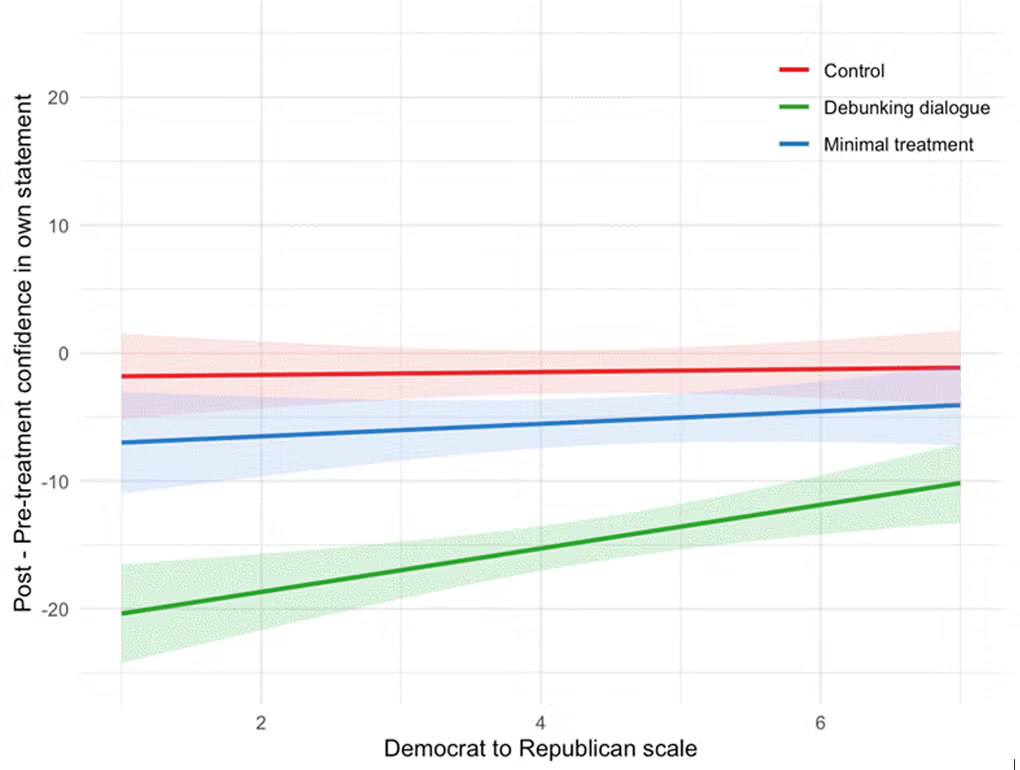

Finally, we conduct pre-registered moderation tests, asking how the treatment effect varies based on a variety of characteristics of the respondents (see SM7 for details and deviations from preregistration). Political party identification and presence of explicitly antisemitic sentiment in participants’ dialogue turns did not significantly moderate belief change. A generalized additive model indicates that the treatment effect on belief in the participants’ statement diminishes for those in the highest quartile (n = 104) of pre-treatment overall antisemitic conspiracy belief (average across all six focal items; see steeper curve in SM7.1). A similar although linear moderation is seen for pre-treatment favorability of Jews (see SM7.2). This indicates that the treatment was somewhat less effective for the most conspiratorial and antisemitic participants.

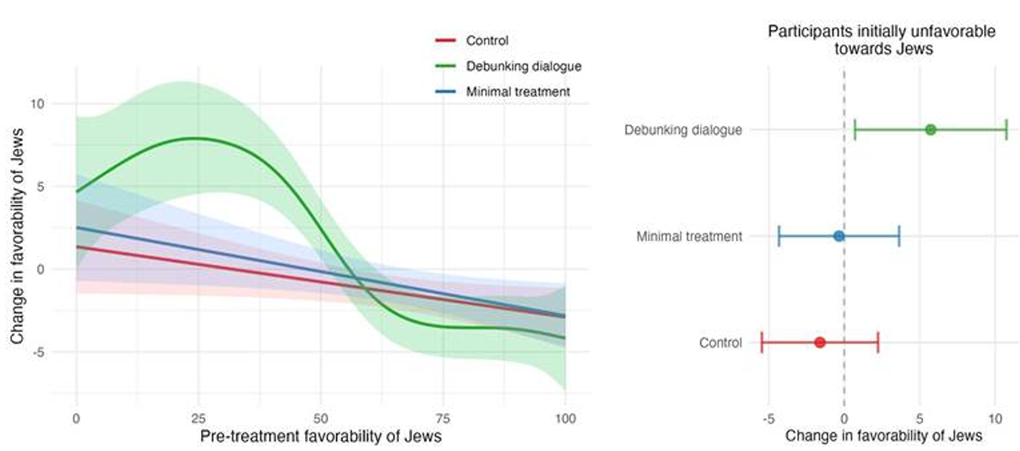

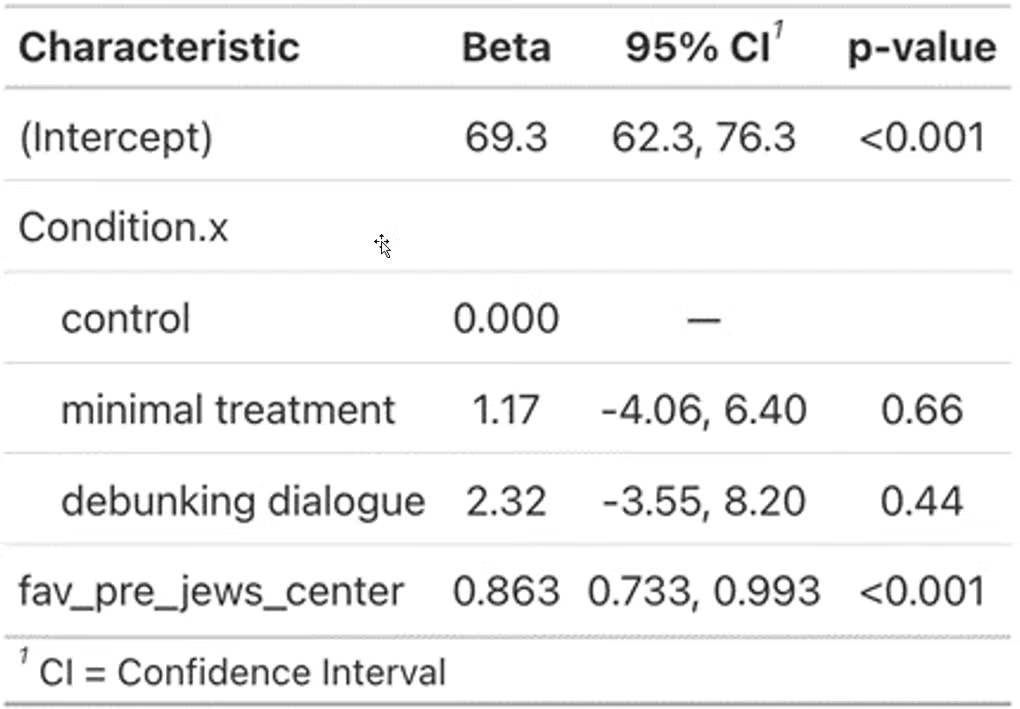

Effects on Favorability Toward Jews

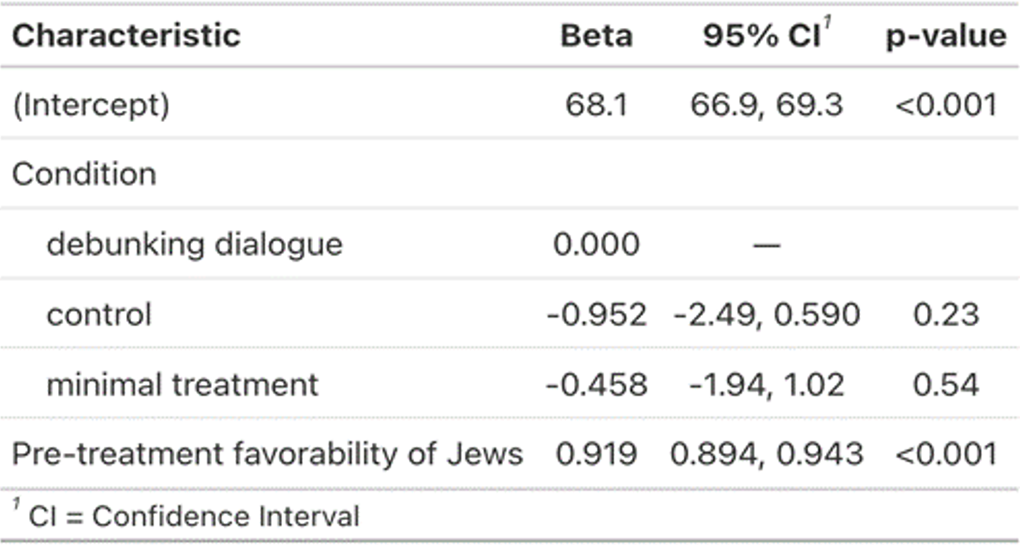

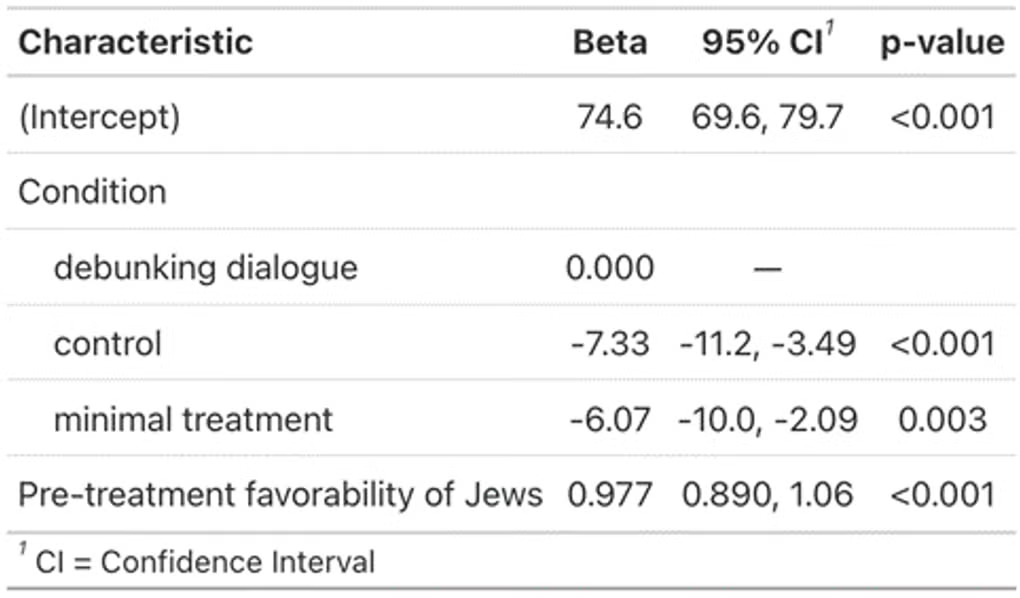

Next, we turn to our second pre-registered key outcome: favorability toward Jews. Overall, we find no significant difference in post-treatment favorability between the debunking dialogue condition and the control condition (b = .95, 95% CI[-.59, 2.49], p = .2, d = .04) or the minimal treatment condition (b = .46, 95% CI[-1.02, 1.94], p = .54, d = .03). Turning to pre-registered moderation analyses, however, shows that the treatment effect on favorability towards Jews varies dramatically based on pre-treatment favorability (see Figure 3A; a generalized additive model with pre-treatment favorability as a moderator outperformed a model without, ΔAIC = 19, ΔR² = .02, ANOVA p < .001). Specifically, the debunking dialogue substantially improved attitudes towards Jews among participants who initially saw Jews unfavorably. When restricting to these participants (initial rated favorability < 50, n = 266), the debunking dialogue significantly increased rated favorability relative to control (b = 7.33, 95% CI[3.49, 11.2], p < .001, d = .45, 25% increase relative to pre-treatment) and the minimal treatment (b = 6.07, 95% CI[2.09, 10.0], p = .003, d = .37, 21% increase relative to pre-treatment). Thus, we find that the debunking dialogue did successfully increase favorability towards Jews among initially antisemitic participants.

Figure 3. Debunking antisemitic conspiracies improved favorability towards Jews among those who initially saw Jews unfavorably. (A) There is a highly non-linear relationship between pre-treatment favorability of Jews and the effect of the debunking dialogue (green). In particular, there is a large positive effect among those who were initially unfavorable. (B) Pre-to-post treatment change in favorability of Jews among the n = 266 participants who were initially unfavorable towards Jews (i.e. favorability < 50 pre-treatment).

Durability of Effects

We also tested the durability of the observed effects by recontacting all participants roughly one month after the experiment and inviting them to participate in a follow-up survey. Data were collected 35-44 days (M = 37.56) after the initial survey.

The recontact survey re-presented participants with the AI-generated summary of their views on their chosen antisemitic conspiracy theory, and asked them to rate their confidence that these views are true. They then re-rated favorability of all ethnic, religious, and geographic groups, as well as rating their belief in the entire conspiracy theory battery, with the order of the two question blocks randomized. The survey concluded with a brief memory test conducted for other purposes. Of the 1224 participants from the initial study who endorsed an antisemitic conspiracy theory, 896 returned.

Following Lin et al. (2025), we test for persistence of the initial effect (i.e., the extent to which an initial effect is still present at followup) by conducting a regression in which change from baseline to immediately after the experimental intervention (post-treatment rating – pre-treatment rating) predicts change from baseline to recontact (rating one month later – pre-treatment rating), while controlling for baseline. The intuition behind this approach is most easily seen in the most extreme cases: if the experimental effect has not decayed at all, then the pre/post change observed during the initial experiment will equal the change one month later, and the coefficient on immediate change will be 1, whereas total decay would yield a coefficient of 0; see Lin et al. (2025) for supporting simulations. This test therefore estimates how much of the original effect is detectable at recontact. The advantage of this approach over the simpler alternative (estimating effects of experimental condition on recontact ratings) is that it improves precision by accounting for the fact that participants who displayed little or no immediate effect would not be expected to display an effect at recontact. This analysis finds that the immediate change in confidence in participants’ own statement significantly predicts the change observed at followup (b = .49, 95% CI[.45, .53], p < .001), with the coefficient indicating that 49% of the original effect is evident a month later. This decay in effect size stems largely from regression to the mean belief in the control after delay, rather than belief in the treatment increasing substantially after delay: mean belief is 82.3, 95% CI[80.26, 84.35], pre-treatment; 67.4, 95% CI[64.02, 70.73], immediately post-treatment; and 70.3, 95% CI[67.34, 73.29], at recontact, such that the decrease in belief from pre-treatment to recontact is 80% as large as the decrease from pre-treatment to immediately post-treatment.

Similarly, among participants who initially rated Jews unfavorably, we find that 53% of the effect of the debunking dialogue on favorability of Jews is evident a month later (b = .53, 95% CI[.39, .69], p < .001). For completeness, we note that post hoc analyses that simply predict ratings at followup using condition while controlling for pre-treatment rating find significant effects on antisemitic conspiracy beliefs, but not on attitudes towards Jews among those initially unfavorable towards Jews (perhaps unsurprisingly due to the smaller sample size and smaller initial effect for this latter analysis); see SM10.
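The logic of the persistence test can be sketched in a few lines of Python. This is an illustrative reconstruction under stated assumptions, not the authors' analysis code: the ratings are hypothetical, the function names are invented for this example, and the coefficient on immediate change is obtained by first partialling the baseline out of both change scores (which gives the same coefficient as a regression that includes baseline as a covariate). The last lines reproduce the arithmetic on the mean beliefs reported above.

```python
from statistics import mean


def simple_fit(x, y):
    """Intercept and slope of a one-predictor least-squares fit of y on x."""
    mx, my = mean(x), mean(y)
    sxx = sum((xi - mx) ** 2 for xi in x)
    slope = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y)) / sxx
    return my - slope * mx, slope


def residuals(x, y):
    """Residuals of y after removing its linear dependence on x."""
    a, b = simple_fit(x, y)
    return [yi - (a + b * xi) for xi, yi in zip(x, y)]


def persistence(pre, post, followup):
    """Coefficient on immediate change when predicting change at followup,
    controlling for baseline (computed by partialling out the baseline)."""
    d_immediate = [p - b for p, b in zip(post, pre)]
    d_followup = [f - b for f, b in zip(followup, pre)]
    return simple_fit(residuals(pre, d_immediate), residuals(pre, d_followup))[1]


# Hypothetical ratings (NOT the study's data).
pre = [60, 70, 80, 90, 100, 75]
post = [40, 65, 50, 85, 70, 75]
half_decay = [b + 0.5 * (p - b) for b, p in zip(pre, post)]

print(persistence(pre, post, post))        # no decay: coefficient near 1
print(persistence(pre, post, half_decay))  # half of each change retained: near 0.5
print(persistence(pre, post, pre))         # total decay: coefficient near 0

# Arithmetic on the reported means: share of the immediate decrease
# that remains at recontact.
retained = (82.3 - 70.3) / (82.3 - 67.4)
print(round(retained, 3))
```

The three toy cases mirror the extreme cases in the text: a followup change equal to the immediate change gives a coefficient of 1, a followup rating that returns to baseline gives 0, and intermediate decay falls in between.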

Discussion

Here, we have demonstrated that a short dialogue with an LLM instructed to debunk unwarranted claims reduced people’s confidence in their own stated antisemitic conspiracy beliefs. These effects were not only statistically significant, but also quite large—on the order of half of a pre-treatment standard deviation, with the treatment reducing confidence in the truth of conspiracy beliefs by 16% relative to a control dialogue and 12% relative to a minimal treatment. We also find that among those who initially rated Jews unfavorably (i.e. who were antisemitic), the debunking dialogues significantly increased favorability by 25% relative to control and 21% relative to minimal treatment. Furthermore, we find evidence of persistence: To the extent that a participant changed their beliefs and/or attitudes immediately after the treatment, roughly 50% of that change was still evident after a month.

Notably, the treatment effect we observe here (13.1 point decrease in belief) is quite similar in magnitude to the debunking effect observed previously for classic conspiracies (Costello et al., 2024 Study 1: 16.8 points; Study 2: 12.3 points). This suggests that factual debunking can reduce belief in antisemitic conspiracies as effectively as classic conspiracies - and therefore that antisemitic conspiracy theories’ deep historical roots and ties to ethnic and religious prejudice do not protect against the power of facts and evidence. We also contribute to the literature on conspiracy debunking by providing the first evidence that LLM debunking is significantly more effective than an active control (our minimal treatment) which states that the belief is an antisemitic conspiracy theory but does not provide facts and evidence to back up that claim. Furthermore, we show that previously documented debunking effects are not model-specific: while prior work used OpenAI’s GPT-4o model, here we show similar results using Anthropic’s Claude 3.5 Sonnet model.

Our work also contributes to the literature on prejudice reduction. Historically, work on prejudice reduction has largely focused on encouraging “mentalizing” about outgroups using strategies such as intergroup contact, perspective-taking, or empathy-building dialogues (for a review see Paluck et al., 2021). In contrast, our LLM dialogue treatment focuses on directly correcting inaccurate beliefs and debunking conspiratorial narratives. This success suggests that, at least in the case of antisemitism, correcting false beliefs underpinning prejudice can meaningfully reduce that prejudice, even without explicitly invoking empathy or other traditional anti-bias tactics. Thus, factual correction of misperceptions, whether delivered by LLMs or by humans equipped with the relevant facts (or with an LLM aide), is a useful piece of the prejudice-reduction toolkit. It is also worth noting that our debunking dialogue intervention is less time- and resource-intensive than many effective interventions for prejudice reduction (Paluck et al., 2021). Finally, our observation that debunking antisemitic conspiracies reduces antisemitism implies a causal role for conspiracy beliefs in fueling prejudice against Jews, consistent with an experiment conducted in the UK where exposing 57 people to a conspiracy about Jews not showing up for work on 9/11 increased prejudice against Jews (Jolley et al., 2020). Future work should test whether similar fact-based debunking interventions can combat other forms of prejudice, expanding our repertoire of evidence-based strategies for reducing intergroup animosity.

Importantly, the LLM debunking dialogue effects on conspiracy beliefs and favorability of Jews we document here cannot be dismissed as demand effects or social desirability effects. To the extent that such effects occur, our minimal treatment, in which participants are informed that their beliefs are antisemitic and dangerous but are not given debunking information, would also induce them. Yet we find little change in the minimal treatment, while the changes in the LLM debunking dialogue are much larger. This resonates with studies that find very little evidence of demand effects in online survey experiments such as ours (Mummolo & Peterson, 2019; Woodley et al., 2025).

Our results suggest that conversational AI may offer a potentially powerful tool for reducing antisemitic conspiracy beliefs and improving attitudes toward Jews at scale. It is important for future work to assess the generalizability of our findings to the broader population of conspiracy believers, as well as beyond the United States (given that antisemitism is a global problem). Even if LLMs are effective at reducing belief in antisemitic conspiracy theories, a central challenge for achieving impact is engagement: people must be willing to talk to the chatbot for it to correct their misperceptions. Future research should therefore investigate strategies for encouraging interaction with debunking chatbots. Possibilities include integration into search engines and social media platforms, recommendations from trusted messengers, and ad campaigns to drive traffic to the public-facing www.DebunkBot.com website we have created (or other similar AI-debunking resources). It is also important to consider how to avoid having people see the LLM debunking as itself part of a conspiracy aimed at influencing their beliefs - and thereby inadvertently wind up reinforcing the very theories we seek to debunk. Transparency about the model’s goals and prompting is a promising approach to explore to this end. Addressing these questions will be essential for translating the experimental efficacy we have documented here into practical solutions.

In sum, we have shown that brief back-and-forth dialogues between a debunking LLM and participants who believed antisemitic conspiracy theories led to large decreases in such beliefs. These results advance our understanding of both conspiracy beliefs and antisemitism, and offer hope for a scalable approach to help address a centuries-old societal ill.

Method

Design

The design and main predictions were preregistered on aspredicted.org (#206519). Data for the main experiment were collected January 7–10, 2025.

Participants first answered demographic questions and then rated how favorably they saw various religious and ethnic groups, including Jews (Pew, 2023), on a 0–100 scale labeled Very unfavorably, Somewhat unfavorably, Somewhat favorably, Very favorably. They then rated the truth of fifteen items on a 0–100 scale labeled Definitely false, Probably false, Probably true, Definitely true. These items were adapted from the Belief In Conspiracy Theories Inventory (after Costello et al., 2024) to include: six focal antisemitic conspiracy items (e.g., “Powerful Jewish families like The Rothschilds or the Soros family manipulate world events to advance their own interests”), six non-antisemitic conspiracy theories (e.g., “US agencies intentionally created the AIDS epidemic and administered it to Black and gay men in the 1970s”), and five true conspiracies (e.g., “The CIA conducted secret experiments on human subjects in the 1950s and 1960s as part of Project MKUltra in an attempt to develop mind control techniques”). Focal items reflected existing conspiracy theories drawn from online extremism reports by the Southern Poverty Law Center and the Anti-Defamation League. All the focal items specifically mentioned Jews to prevent potential ambiguity (Weinberg et al., 2025). We avoided mention of Israel to retain experimental focus on antisemitism rather than anti-Zionism, with one exception: the longstanding conspiracy claim that Israel was responsible for the 9/11 attacks. See SM1 for complete instruments.

Participants who rated at least one antisemitic conspiracy item higher than the scale midpoint of 50 were included in the subsequent experiment. These participants were re-presented with the specific antisemitic conspiracy they rated the highest and asked to clarify their belief in their own words. They were also asked to explain what evidence supports their belief. These free responses were passed to an LLM (OpenAI’s GPT-4 Turbo), which was tasked with summarizing the belief and evidence in a single statement. Participants saw this summary statement (labeled as AI-generated) and were asked to rate their confidence in it (“On a scale of 0% to 100%, please indicate your level of confidence that this statement is true”). Participants were then randomly assigned to (1) the debunking dialogue condition, where they discussed the antisemitic conspiracy theory with an instance of Anthropic’s Claude 3.5 Sonnet, which had been instructed to persuade the participant not to believe the conspiracy (see SM2 for full system prompt); (2) the control condition, where they discussed an irrelevant topic with Claude (pros and cons of working from home); or (3) a minimal treatment condition in which they engaged in the irrelevant conversation from (2) and then received a message informing them that an AI had evaluated their belief and concluded that it was a conspiracy, and that conspiracy theories are dangerous. The minimal treatment condition addresses concerns about demand effects, as it makes the goal of the experiment clear without actually providing any debunking information.
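The screening and assignment steps described above can be sketched as follows. This is a hypothetical illustration rather than the survey platform's actual logic: the item keys, function names, and condition labels are invented for this example, ties for the highest-rated item are broken by taking the first item encountered (the text does not say how ties were handled), and simple independent randomization is assumed (the text does not specify the randomization scheme).

```python
import random

MIDPOINT = 50  # scale midpoint on the 0-100 rating scale
CONDITIONS = ("debunking", "control", "minimal_treatment")  # hypothetical labels


def n_endorsed(ratings):
    """Number of items rated above the scale midpoint."""
    return sum(score > MIDPOINT for score in ratings.values())


def focal_item(ratings):
    """Highest-rated item if it exceeds the midpoint, else None (not eligible).

    Assumption: ties go to the first item in dictionary order.
    """
    item, score = max(ratings.items(), key=lambda kv: kv[1])
    return item if score > MIDPOINT else None


def assign_condition(rng):
    """Assumed simple randomization: each condition equally likely."""
    return rng.choice(CONDITIONS)


# Hypothetical participants (item keys are placeholders, not the study's labels).
skeptic = {"item_1": 10, "item_2": 50, "item_3": 0}
believer = {"item_1": 51, "item_2": 80, "item_3": 20}

rng = random.Random(2025)  # seeded only to make the example repeatable
for ratings in (skeptic, believer):
    item = focal_item(ratings)
    condition = assign_condition(rng) if item is not None else None
    print(n_endorsed(ratings), item, condition)
```

Note that a rating of exactly 50 does not count as endorsement in this sketch, matching the "higher than the scale midpoint" rule stated in the text.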

After the experimental stage, participants in all conditions rerated their confidence in their own stated beliefs, the truth of the original antisemitic conspiracy item, and their attitudes towards the religious and ethnic groups they had rated initially. The experiment concluded with measures of trust in various institutions included for other purposes, and a short debrief.

Participants

Pilot testing found that roughly half of online participants endorsed at least one antisemitic conspiracy theory. We therefore set a target sample size of 2000 with an oversample of 500 in anticipation of attrition and attention check failures, to achieve N ≈ 1000 for the experiment (after Costello et al., 2024). Participants were US adults recruited through CloudConnect with roughly even thirds Democrats, Republicans, and Independents. We balanced on party identification because of debates over whether antisemitism is more common on the right (Hersh & Royden, 2023) or equally common at the ideological poles (Lewis, 2025). In total, 1224 participants rated their belief in at least one antisemitic conspiracy theory higher than the scale midpoint; as anticipated, this portion constituted roughly half (50.3%) of the sample that completed the survey and did not fail the attention check (N = 2432, with an additional 76 who failed).

Attrition

241 participants dropped out of the experiment before the treatment phase, and 64 dropped out after. The latter subgroup showed no demographic differences with those who did complete the experiment (all ps > .11) and did not differ in how favorably they rated Jews, t(67) = -0.48, p = .6. In the followup experiment, whether or not the participants returned did not differ across conditions, χ² = 1.3, p = .5.

References

ADL. (2020). Racist, Extremist, Antisemitic Conspiracy Theories Surround Coronavirus Vaccine Rollout. https://www.adl.org/resources/article/racist-extremist-antisemitic-conspiracy-theories-surround-coronavirus-vaccine

ADL. (2021). Antisemitic Conspiracies About 9/11 Endure 20 Years Later. https://www.adl.org/resources/report/antisemitic-conspiracies-about-911-endure-20-years-later

Allington, D., Hirsh, D., & Katz, L. (2023). Correlation between coronavirus conspiracism and antisemitism: A cross-sectional study in the United Kingdom. Scientific Reports, 13(1), 21104. https://doi.org/10.1038/s41598-023-41794-y

Boissin, E., Costello, T. H., Spinoza-Martín, D., Rand, D. G., & Pennycook, G. (2025). Dialogues with large language models reduce conspiracy beliefs even when the AI is perceived as human. PNAS Nexus, pgaf325. https://doi.org/10.1093/pnasnexus/pgaf325

Costello, T. H., Pennycook, G., & Rand, D. (2025). Just the facts: How dialogues with AI reduce conspiracy beliefs. OSF. https://doi.org/10.31234/osf.io/h7n8u_v1

Costello, T. H., Pennycook, G., & Rand, D. G. (2024). Durably reducing conspiracy beliefs through dialogues with AI. Science, 385(6714), eadq1814. https://doi.org/10.1126/science.adq1814

Hersh, E., & Royden, L. (2023). Antisemitic Attitudes Across the Ideological Spectrum. Political Research Quarterly, 76(2), 697–711. https://doi.org/10.1177/10659129221111081

Hornsey, M. J., Bierwiaczonek, K., Sassenberg, K., & Douglas, K. M. (2023). Individual, intergroup and nation-level influences on belief in conspiracy theories. Nature Reviews Psychology, 2(2), 85–97. https://doi.org/10.1038/s44159-022-00133-0

Jolley, D., Meleady, R., & Douglas, K. M. (2020). Exposure to intergroup conspiracy theories promotes prejudice which spreads across groups. British Journal of Psychology, 111(1), 17–35. https://doi.org/10.1111/bjop.12385

Lewis, J. S. (2025). Conspiracy and Antisemitism in Contemporary Political Attitudes. Political Research Quarterly, 78(2), 619–634. https://doi.org/10.1177/10659129251318350

Lin, H., Czarnek, G., Lewis, B., White, J. P., Berinsky, A. J., Costello, T., Pennycook, G., & Rand, D. G. (in press). Persuading voters using human-AI dialogues. Nature.

Mummolo, J., & Peterson, E. (2019). Demand Effects in Survey Experiments: An Empirical Assessment. American Political Science Review, 113(2), 517–529. https://doi.org/10.1017/S0003055418000837

Nyhan, B., & Zeitzoff, T. (2018). Conspiracy and Misperception Belief in the Middle East and North Africa. The Journal of Politics, 80(4), 1400–1404. https://doi.org/10.1086/698663

Paluck, E. L., Porat, R., Clark, C. S., & Green, D. P. (2021). Prejudice Reduction: Progress and Challenges. Annual Review of Psychology, 72, 533–560. https://doi.org/10.1146/annurev-psych-071620-030619

Pew. (2023, March 15). Americans Feel More Positive Than Negative About Jews, Mainline Protestants, Catholics. Pew Research Center. https://www.pewresearch.org/religion/2023/03/15/americans-feel-more-positive-than-negative-about-jews-mainline-protestants-catholics/

SPLC. (2022). The Racist “Great Replacement” Conspiracy Theory Explained. Southern Poverty Law Center. https://www.splcenter.org/resources/hatewatch/racist-great-replacement-conspiracy-theory-explained/

Weinberg, D. B., Levy, M. D., Edwards, A., Kopstein, J. S., Frey, D., Antonaros, P., Baci, N., Cohen, N. D., Fernandez, J. A., & Ni, Y. (2025). Hidden in plain sight: Antisemitic content in QAnon subreddits. PLOS ONE, 20(3), e0318988. https://doi.org/10.1371/journal.pone.0318988

Woodley, L., Roberts-Gaal, X., Calcott, R., & Cushman, F. A. (2025). No Evidence of Experimenter Demand Effects in Three Online Psychology Experiments (g6xhf_v1). PsyArXiv. https://doi.org/10.31234/osf.io/g6xhf_v1

Supplemental Materials

SM1. Experimental materials

| I view... |

| Jews |

| Christians |

| Muslims |

| Hindus |

| Atheists |

| Whites |

| Blacks |

| Hispanics |

| Middle Easterners |

| Africans |

| Asians |

| 0 – 100 scale labeled Very unfavorably, Somewhat unfavorably, Somewhat favorably, Very favorably |

Table SM1.1. Favorability ratings (order was randomized).

| There is often debate about whether or not the public is told the whole truth about various important issues. The following questions are designed to assess your beliefs about some of these subjects. On a scale of 0% to 100%, please indicate your level of confidence that each of the following statements are true. | |

| Type | Item |

| Antisemitic conspiracy theory | A secretive group with significant Jewish influence controls governments and media worldwide. |

| Antisemitic conspiracy theory | Powerful Jewish families like The Rothschilds or the Soros family manipulate world events to advance their own interests. |

| Antisemitic conspiracy theory | The COVID-19 pandemic was an engineered emergency created in order to generate profits for Jewish pharmaceutical heads like Pfizer’s CEO Albert Bourla. |

| Antisemitic conspiracy theory | The historical evidence supporting the events of the Holocaust is unreliable or exaggerated. |

| Antisemitic conspiracy theory | Jewish interest groups are deliberately attempting to alter the demographic makeup of the US in order to tip elections in their favor and advance their policy objectives. |

| Antisemitic conspiracy theory | The 9/11 attacks were not undertaken by Al Qaeda but by Israeli operatives working in secret and trying to hide their involvement. |

| Other conspiracy theory | The Oklahoma City bombers, Timothy McVeigh and Terry Nichols, did not act alone, but rather received assistance from neo-Nazi groups. |

| Other conspiracy theory | Area 51 in Nevada, US, is a secretive military base that contains hidden alien spacecraft and/or bodies. |

| Other conspiracy theory | US agencies intentionally created the AIDS epidemic and administered it to Black and gay men in the 1970s. |

| Other conspiracy theory | The assassination of John F. Kennedy was not committed by the lone gunman, Lee Harvey Oswald, but was rather a detailed, organised conspiracy to kill the President. |

| Other conspiracy theory | The Apollo moon landings never happened and were staged in a Hollywood film studio. |

| True conspiracy | The tobacco industry deliberately hid evidence about the health risks of smoking from the public for many years. |

| True conspiracy | The U.S. Department of Defense created proposals for acts of terrorism to be committed on U.S. soil to justify military intervention in Cuba, known as Operation Northwoods. |

| True conspiracy | Special interest groups are suppressing, or have suppressed in the past, technologies that could provide energy at reduced cost or reduced pollution output. |

| True conspiracy | The CIA conducted secret experiments on human subjects in the 1950s and 1960s as part of Project MKUltra in an attempt to develop mind control techniques. |

| 0 – 100 scale labeled Definitely false, Probably false, Probably true, Definitely true | |

Table SM1.2. Conspiracy theory belief measure (order was randomized).

| How much do you trust ...? |

| the federal government |

| the federal government to disclose information of public importance |

| the police |

| your state government |

| your local government |

| Congress |

| technology companies |

| artificial intelligence |

| the media |

| business |

| CEOs |

| bankers |

| the military |

| 0 – 100 scale labeled Completely distrust, Somewhat distrust, Neither trust nor distrust, Somewhat trust, Completely trust |

Table SM1.3. Institutional trust ratings (order was randomized).

SM2. System prompt for antisemitic conspiracy debunking dialogue

| “Your goal is to very effectively persuade users to stop believing in an antisemitic conspiracy theory. Namely, your conversation partner believes that [ITEM], endorsing this conspiracy as endorsed this conspiracy as [RATING] out of 100 (where 0 is Definitely False, 50 is Uncertain, and 100 is Definitely True). Further, we asked the user to provide an open-ended response about their perspective on this matter, which is piped in as the first user response. Please generate a response that will persuade the user that this conspiracy is not supported. Interpersonally, don’t be obsequious or sycophantic. Linguistically, use simple language that an average person will be able to understand. In terms of the scope of your aims, be ambitious and optimistic! Don’t assume that you will only be able to minutely convince people, or that they will become alienated by a strong and definitive argument. Make the strongest case you can. Mostly focus on the facts rather than the scolding people.” |

SM3. Demographic characteristics of antisemitic conspiracy theory endorsers and non-endorsers

SM4. Initial belief statement analysis

We developed a four-stage computational pipeline to extract and quantify participants’ initial conspiracy belief statements. The pipeline processes raw survey data containing participant conversations about conspiracy theories and outputs a frequency analysis of the distinct claims participants made.

Stage 1: Belief Extraction. A large language model (Claude Sonnet 4) parses free-form text into discrete belief statements.

Stage 2: Embedding Generation. The system converts individual beliefs into high-dimensional vector representations using Google's Gemini embedding model. This creates numerical representations suitable for clustering analysis.

Stage 3: Hierarchical Clustering and Exemplar Selection. We implement a two-part hierarchical clustering approach to organize beliefs by semantic similarity. Part 1 uses UMAP dimensionality reduction followed by EOM (Excess of Mass) HDBSCAN clustering to create broad thematic groups. For thematic groups with n > 50, Part 2 applies a second round of UMAP and Leaf HDBSCAN clustering within each broad group to identify sub-clusters of duplicate beliefs. For each group of duplicates, we extract an “exemplar” belief using HDBSCAN’s exemplar parameter. This allows us to greatly reduce the number of beliefs per thematic group.

Stage 4: Claim Extraction and Consolidation. Each thematic group is processed independently by an LLM to identify distinct factual claims. An additional consolidation step uses an LLM to identify and merge duplicate claims that may appear across different thematic groups. The output provides frequency counts showing how many participants endorsed each distinct claim.

Embedding model:

| Model Name | gemini-embedding-001 |

| Model Mode | CLUSTERING |

| Dimensions | 3072 |

UMAP + HDBSCAN parameters:

| Part1_UMAP_PARAMS = { "n_components": 50, "n_neighbors": 10, "min_dist": 0.0, "metric": "cosine", "random_state": 42 } | Part2_UMAP_PARAMS = { "n_components": 20, "n_neighbors": 5, "min_dist": 0.0, "metric": "cosine", "random_state": 42 } |

| Part1_HDBSCAN_PARAMS = { "min_cluster_size": 4, "min_samples": 1, "cluster_selection_epsilon": 0.4, "cluster_selection_method": "eom", "metric": "euclidean", "prediction_data": True } | Part2_HDBSCAN_PARAMS = { "min_cluster_size": 2, "min_samples": 2, "cluster_selection_epsilon": 0.05, "cluster_selection_method": "leaf", "metric": "euclidean", "prediction_data": True } |
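As a rough illustration of how these parameter sets might drive the two-part clustering described in Stage 3, the sketch below assumes the `umap-learn` and `hdbscan` Python packages and an `embeddings` NumPy array with one row per belief. The function names and the `SECOND_PASS_MIN_SIZE` constant are illustrative and are not taken from the authors' code; exemplar selection is omitted.

```python
from collections import Counter

Part1_UMAP_PARAMS = {"n_components": 50, "n_neighbors": 10, "min_dist": 0.0,
                     "metric": "cosine", "random_state": 42}
Part2_UMAP_PARAMS = {"n_components": 20, "n_neighbors": 5, "min_dist": 0.0,
                     "metric": "cosine", "random_state": 42}
Part1_HDBSCAN_PARAMS = {"min_cluster_size": 4, "min_samples": 1,
                        "cluster_selection_epsilon": 0.4,
                        "cluster_selection_method": "eom",
                        "metric": "euclidean", "prediction_data": True}
Part2_HDBSCAN_PARAMS = {"min_cluster_size": 2, "min_samples": 2,
                        "cluster_selection_epsilon": 0.05,
                        "cluster_selection_method": "leaf",
                        "metric": "euclidean", "prediction_data": True}

# Assumption: thematic groups with more than 50 beliefs get a second pass.
SECOND_PASS_MIN_SIZE = 50


def groups_needing_second_pass(labels, min_size=SECOND_PASS_MIN_SIZE):
    """Return cluster labels with more than `min_size` members (noise label -1 excluded)."""
    counts = Counter(int(label) for label in labels if label != -1)
    return sorted(label for label, n in counts.items() if n > min_size)


def two_part_cluster(embeddings):
    """Cluster beliefs into broad groups, then sub-cluster the large groups."""
    # Imported here so the helper above can be used without these packages.
    import numpy as np
    import umap      # provided by the umap-learn package
    import hdbscan

    # Part 1: broad thematic groups.
    reduced = umap.UMAP(**Part1_UMAP_PARAMS).fit_transform(embeddings)
    group_labels = hdbscan.HDBSCAN(**Part1_HDBSCAN_PARAMS).fit_predict(reduced)

    # Part 2: sub-clusters of near-duplicate beliefs within each large group.
    sub_labels = {}
    for group in groups_needing_second_pass(group_labels):
        members = np.flatnonzero(group_labels == group)
        sub_reduced = umap.UMAP(**Part2_UMAP_PARAMS).fit_transform(embeddings[members])
        sub_labels[group] = hdbscan.HDBSCAN(**Part2_HDBSCAN_PARAMS).fit_predict(sub_reduced)
    return group_labels, sub_labels
```

Here `two_part_cluster` returns the Part 1 label for every belief along with a mapping from each large group to its Part 2 sub-cluster labels. Beliefs that HDBSCAN treats as noise carry the label -1.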

Prompts used:

| Initial extraction | You are a specialized data analyst with expertise in extracting belief statements related to antisemitic conspiracy theories. from free‑form text. Your goal is to produce an *exhaustive* list of every belief the user expresses about a given conspiracy. - Extract *every single* belief relevant to the topic. - **Exclude off‑topic remarks.** **Exclude statements that do not support the conspiracy.** *Do not say things like 'the participant believes'* - Do not omit subtly distinct points (e.g. "They want power" vs. "They profit"). - When describing the belief, add context to make the belief understandable as a standalone statement. - Return *only* the JSON array; do not wrap it, annotate it, or add commentary. |

| Claim extraction | You are an expert research assistant specializing in analyzing conspiracy theory beliefs. You are the final stage in an analysis pipeline. You are given a list of belief statements related to a conspiracy theory. These beliefs have been previously clustered into themes by hdbscan. Your role is to determine whether the beliefs in the cluster represent: a. One distinct factual claim. OR b. several distinct factual claims. SAME claim: **variations in phrasing without substantive difference** |

| Consolidation | You are an expert research assistant specializing in conspiracy theory beliefs. You will be given a list of claims post clustering. Your task is to identify if there are duplicate claims in the list. Duplicates are claims that are **identical** in content, with differences in phrasing. If no consolidations needed, return empty array |
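The frequency counts that Stage 4 outputs can be sketched as follows. This is a minimal illustration, assuming the extracted claims are available as a mapping from participant ID to a list of claim strings and that the consolidation step yields a mapping from each duplicate claim to its canonical wording. Both data structures and the function name are hypothetical, and the claim labels are neutral placeholders.

```python
from collections import Counter


def claim_frequencies(claims_by_participant, merge_map=None):
    """Count how many participants endorsed each distinct claim.

    claims_by_participant: dict of participant ID -> list of claim strings.
    merge_map: optional dict mapping a duplicate claim to its canonical form
               (a stand-in for the output of the consolidation step).
    """
    merge_map = merge_map or {}
    counts = Counter()
    for claims in claims_by_participant.values():
        # A set ensures each participant counts once per claim, even if
        # the same claim appears several times after merging.
        distinct = {merge_map.get(claim, claim) for claim in claims}
        counts.update(distinct)
    return counts


# Placeholder data for illustration only.
claims = {
    "p1": ["claim A", "claim A (reworded)"],
    "p2": ["claim A", "claim B"],
    "p3": ["claim B"],
}
merges = {"claim A (reworded)": "claim A"}
frequencies = claim_frequencies(claims, merges)
```

Counting over a per-participant set rather than the raw list keeps the result interpretable as the number of participants endorsing each claim, not the number of mentions.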

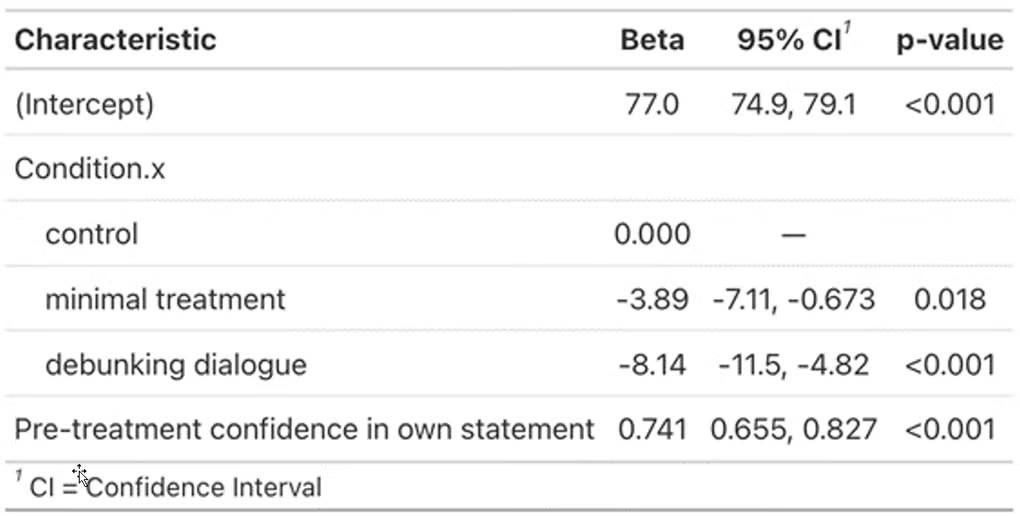

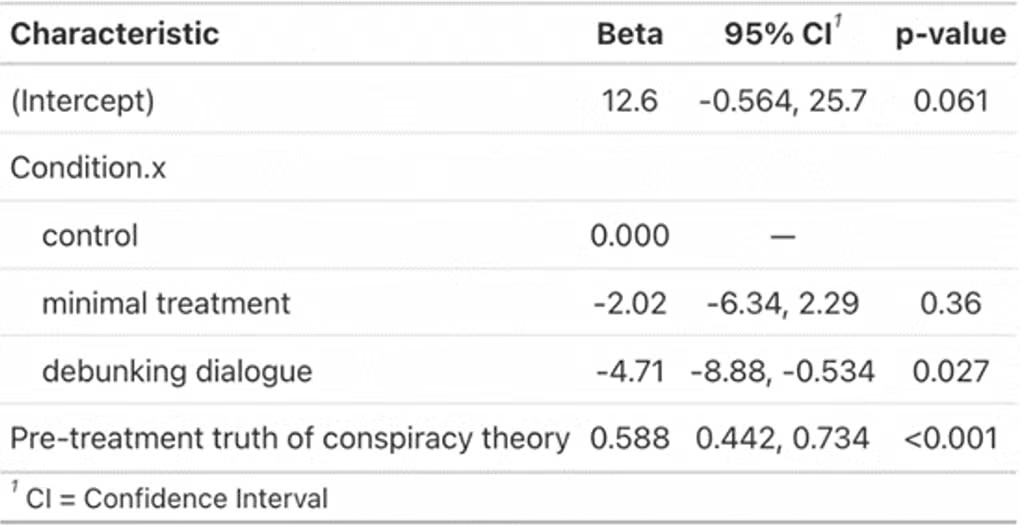

SM5. Results with additional exclusion criteria

The main paper reports results excluding only participants who failed an attention check so as not to exclude on inter- or post-treatment variables. Two such variables, however, could affect the results: (i) whether participants felt the LLM fairly summarized their views, which was measured between statement provision and LLM dialogue, and (ii) whether participants reported having technical difficulties during the dialogue, which was measured after the dialogue. The tables below show the main effects excluding participants who answered no to (i), yes to (ii), or both (n = 152). Patterns are the same as the main analysis.
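The additional exclusion rule amounts to a simple filter. The sketch below assumes each participant record carries two boolean fields, `summary_fair` for (i) and `technical_difficulties` for (ii). These field names are hypothetical and do not come from the study's data files.

```python
def passes_additional_exclusions(participant):
    """Keep a participant only if they judged the LLM summary fair (i)
    and reported no technical difficulties during the dialogue (ii)."""
    return participant["summary_fair"] and not participant["technical_difficulties"]


# Placeholder records for illustration only.
sample = [
    {"id": 1, "summary_fair": True, "technical_difficulties": False},
    {"id": 2, "summary_fair": False, "technical_difficulties": False},
    {"id": 3, "summary_fair": True, "technical_difficulties": True},
    {"id": 4, "summary_fair": False, "technical_difficulties": True},
]
retained = [p for p in sample if passes_additional_exclusions(p)]
```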

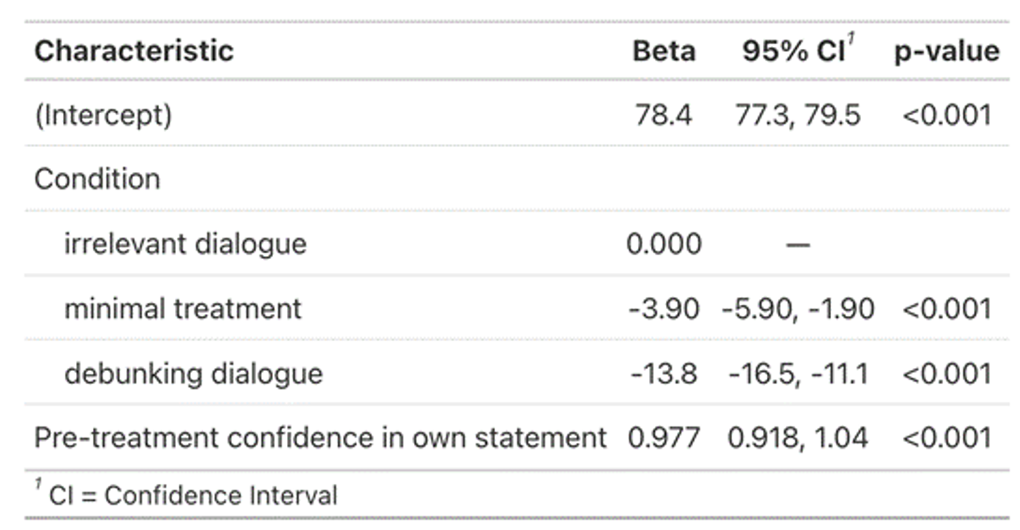

Table SM5.1. OLS on confidence in own statements with additional exclusions

Table SM5.2. OLS on truth of highest rated antisemitic conspiracy theory with additional exclusions

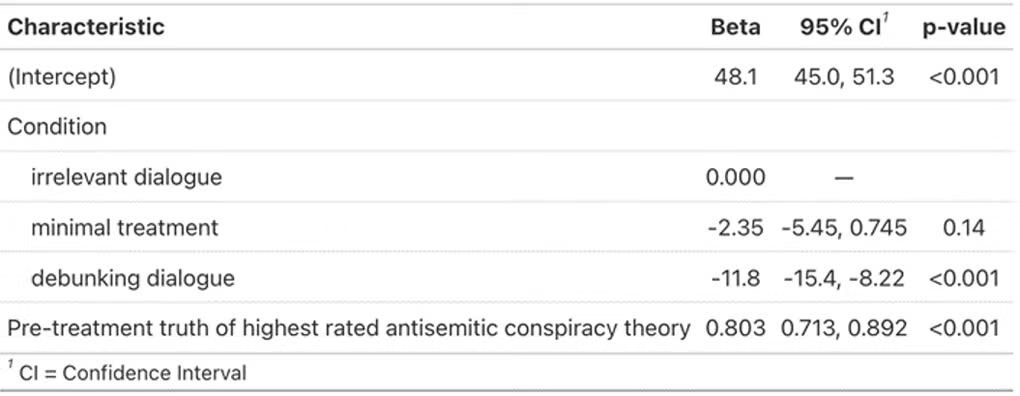

Table SM5.3. OLS on favorability of Jews with additional exclusions

Table SM5.4. OLS on favorability of Jews with additional exclusions for subgroup with pretreatment favorability of Jews < 50

SM6. Results with backfill

As noted in the main paper, a small number of participants began but did not complete the experiment. As a robustness check, we “backfill” post-treatment values for these participants using their pre-treatment ratings when available—in other words, assuming that had they continued, they would have exhibited no change (“first observation carried forward” imputation). The tables below show that the pattern of results remains under these conditions.
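The backfill rule can be sketched as follows, assuming each participant record holds a pre-treatment rating and a post-treatment rating that is `None` when the participant dropped out. The field names `pre` and `post` are hypothetical.

```python
def backfill(pre, post):
    """Return the post-treatment rating, falling back to the pre-treatment
    rating when the post-treatment value is missing (assumes no change)."""
    return pre if post is None else post


def backfill_all(records):
    """Apply the backfill rule to a list of {'pre': ..., 'post': ...} records."""
    return [{**r, "post": backfill(r["pre"], r["post"])} for r in records]


# Placeholder ratings for illustration only.
ratings = [
    {"pre": 80, "post": 55},      # completed: observed value is kept
    {"pre": 70, "post": None},    # dropped out: pre-treatment value carried forward
    {"pre": None, "post": None},  # no pre-treatment rating: stays missing
]
filled = backfill_all(ratings)
```

Because imputed participants are assigned zero change, this check is conservative with respect to the estimated treatment effect.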

Table SM6.1. OLS on confidence in own statements with backfill

Table SM6.2. OLS on truth of highest rated antisemitic conspiracy theory with backfill

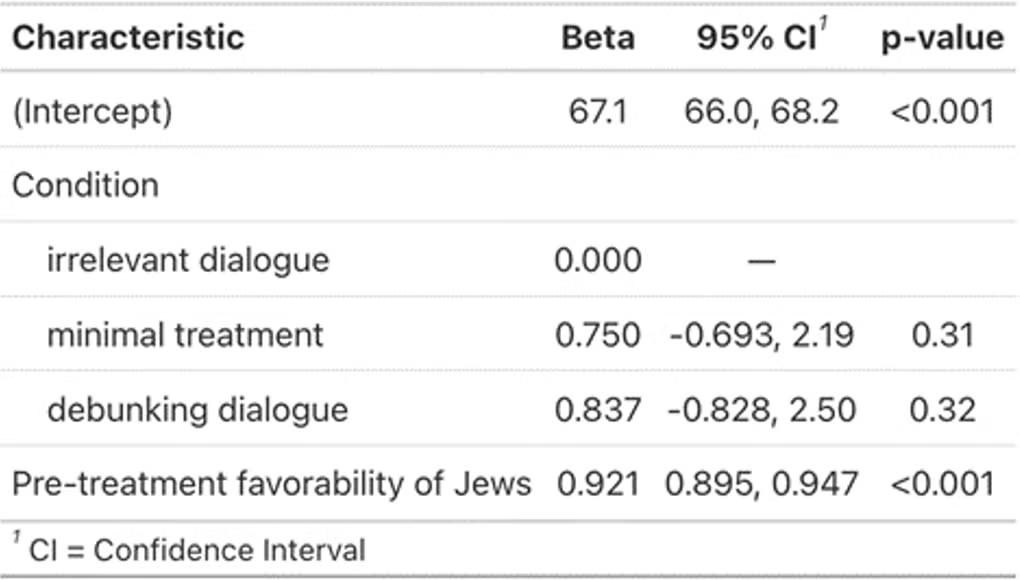

Table SM6.3. OLS on favorability of Jews with backfill

Table SM6.4. OLS on favorability of Jews with backfill for subgroup with pretreatment favorability of Jews < 50

SM7. Additional moderator analyses

As per our preregistration, we consider whether the effect of debunking on confidence in participants’ own conspiracy belief statement varies as a function of (1) mean rated truth across all antisemitic conspiracy theories, (2) baseline favorability of Jews, and (3) an important demographic characteristic, political party identification.

We first assessed whether a generalized additive model (GAM) with a moderator outperformed a GAM without one, using the joint criteria of a decrease of 3 or more in the Akaike information criterion (AIC), an increase in R2, and a significant ANOVA on deviance explained. The moderator GAM showed clearly improved model performance for mean rated truth across antisemitic conspiracy theories (ΔAIC = 3; ΔR2 = .005; ANOVA p = .052) and baseline favorability of Jews (ΔAIC = 9; ΔR2 = .052; ANOVA p = .001) but mixed evidence of improvement for political party identification (ΔAIC = -1; ΔR2 = .001; ANOVA p = .043). All relationships are visualized below.

We deviated from our preregistration in three ways. First, institutional trust and trust in AI were both measured post-treatment for design reasons. As such, we do not examine them as moderators because of causal ambiguity. Second, the feeling thermometer measure was cut from the survey due to redundancy with favorability ratings, and thus we were unable to use it as a moderator. Finally, we do not treat presence of antisemitic sentiment in participants’ contributions to the dialogues with LLMs as a moderator because this variable is constant in two of the three conditions, so a GAM cannot be fitted.

SM7.1. Moderator: mean rated truth across all antisemitic conspiracy theories

Figure SM7.1. Predicted effect of condition on confidence in own statement moderated by mean initial ratings of the truth of all six antisemitic conspiracy theories.

SM7.2. Moderator: baseline favorability of Jews

Figure SM7.2. Predicted effect of condition on confidence in own statement moderated by baseline favorability of Jews.

SM7.3. Moderator: political party identification

Figure SM7.3. Predicted effect of condition on confidence in own statement moderated by political party identification.

SM8. Demographic covariates

Table SM8.1. Condition effect on confidence in participants’ own statements with and without demographic covariates.

Table SM8.2. Condition effect on truth of discussed conspiracy theory with and without demographic covariates.

SM9. Main effects and item effects for structured conspiracy items

Figure SM9.1. Left: reduced confidence in the truth of the named conspiracy theory as described by a structured survey item rather than participants’ own stated beliefs. Right: effects by item.

SM10. Standard model of persistence

As noted in the main text, simply testing for an effect of condition assignment during the experiment on ratings after a one-month delay may estimate effects of intervening events. We report these tests for completeness and note that they also indicate the presence of an enduring effect of the debunking dialogue.

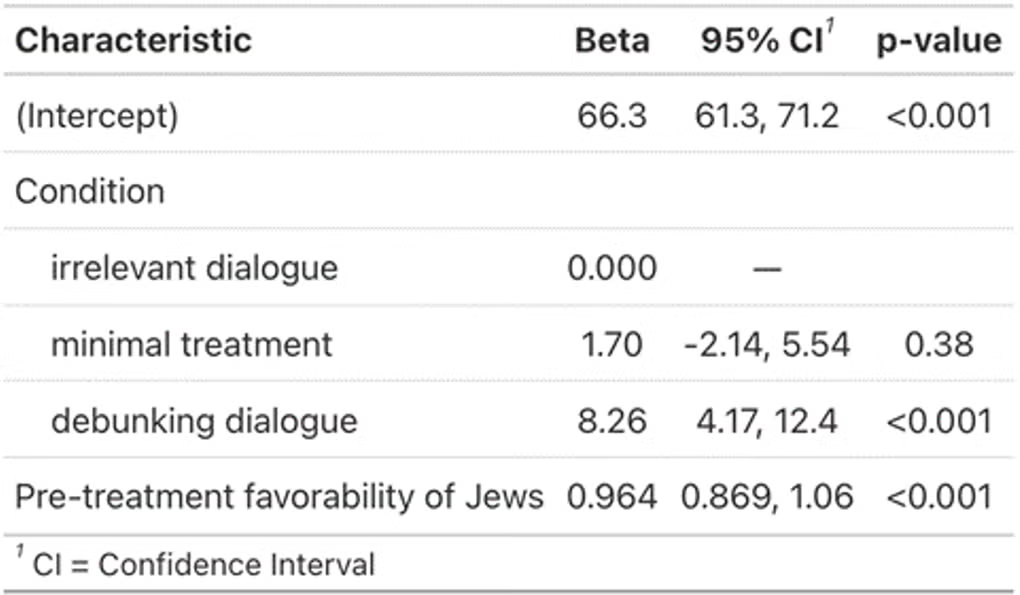

Table SM10.1. Effect of condition on confidence in participants’ own statements at recontact

Table SM10.2. Effect of condition on truth of discussed conspiracy theory at recontact

Table SM10.3. Effect of condition on favorability of Jews among the subset who rated favorability < 50 pre-treatment