October 11, 2021

Since 2020, Twitter has taken steps to decrease hate and disinformation on its platform, officially banning some forms of Covid-19 misinformation and purging QAnon-related handles after the January 6, 2021, Capitol insurrection. But while Twitter’s anti-extremist policies are more effective now than they were a year ago, the platform has not addressed how easily users can drive traffic to hate and misinformation hosted on outside sites.

To examine how fringe content and misinformation continue to spread on Twitter, ADL’s Center on Extremism (COE) analyzed how Twitter is used to disseminate content from Gab, the fringe social media platform founded by Andrew Torba. Gab has a long history as a haven for extremists, conspiracy theorists and misinformation, and Torba himself has actively promoted misinformation about Covid-19 vaccines and the Capitol insurrection. As the findings below show, because Twitter permits users to link to Gab content, millions of Twitter users are potentially exposed to hate and disinformation every day.

Gab’s Potential Reach on Twitter

Between June 7 and August 22, 2021, COE analyzed how many links to Gab content were shared on Twitter (our methodology is outlined at the bottom of this article). During this period, more than 112,000 tweets containing links to Gab content were shared by more than 32,700 users, with a combined potential reach of more than 254 million views. The 50 most shared links were rife with conspiratorial content and misinformation, some of it promoted by Gab itself via its verified Twitter account.

Figure 1 shows that the sharing of Gab content on Twitter peaked on July 27, coinciding with Twitter’s ban of numerous election “audit” accounts and a tweet from controversial Arizona state senator Wendy Rogers encouraging people to follow her on Gab after the bans (more on that below). Figure 2 shows the potential reach of Gab-linking tweets per day over the study period.

Figure 1 (Top Graph): Number of tweets containing Gab URLs sent each day.

Figure 2 (Bottom Graph): Potential number of Twitter views that Gab content could have reached each day, calculated by summing the follower counts of Twitter accounts that sent a Gab-linking tweet or retweet each day.

There were five days when Gab-linking tweets exceeded 7 million potential views on Twitter, with a peak on August 10 of 8.69 million potential views. On every day of the study period, Gab-linking tweets potentially reached at least 1.71 million Twitter views. Taken together, these figures suggest that Gab’s content could be influencing millions of Twitter users and their behavior.

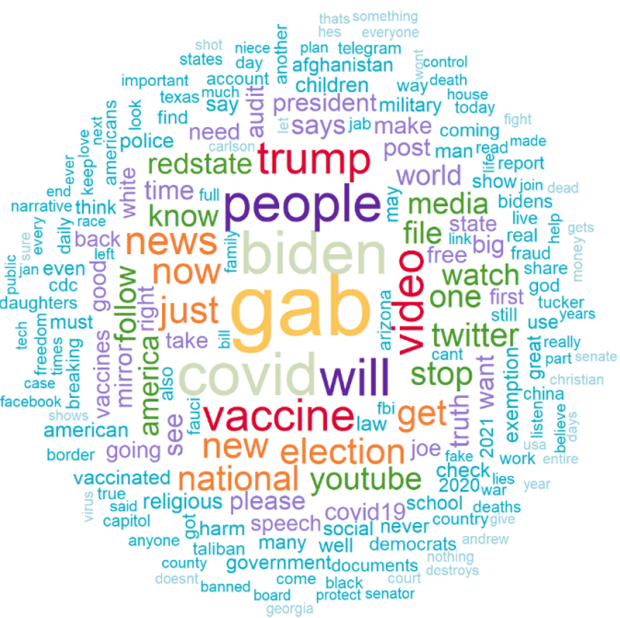

The word cloud below (Figure 3) shows the 200 most frequent words (excluding Twitter usernames) in the text of tweets containing Gab links, scaled and colored by relative frequency. Covid-19-related terms (such as Covid, vaccine, vaccinated and exemption) were among the most frequent words in these tweets. Political references were also dominant, as shown by the high frequency of terms such as Biden, Trump, America, election, president, red state, democrats, and senate. Another prominent subset consists of references to social media, traditional media outlets, and current news topics, reflected in terms such as Twitter, Gettr, YouTube, Telegram, news, and Tucker [Carlson]. This indicates that Gab-linking tweets frequently disseminated information related to Covid-19 and U.S. politics and news.

Figure 3: 200 most frequent words (without stemming) that were included in the text of tweets containing Gab links across the study period, excluding Twitter usernames.
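The word-frequency count behind a cloud like Figure 3 can be sketched in a few lines of Python. This is a minimal illustration, not COE’s actual pipeline: it assumes usernames are tokens beginning with “@”, strips URLs (an assumption; the article does not say whether link text was removed), lowercases words without stemming, and accepts an optional stop-word set, since the article does not state whether common words were filtered. The function name `top_words` is hypothetical.

```python
import re
from collections import Counter

# Assumption: Twitter usernames appear as "@name" tokens in the tweet text.
MENTION = re.compile(r"@\w+")
URL = re.compile(r"https?://\S+")
WORD = re.compile(r"[a-z0-9']+")


def top_words(tweet_texts, n=200, stop_words=frozenset()):
    """Return the n most frequent words across tweet texts, excluding usernames.

    Words are lowercased but not stemmed, so "vaccine" and "vaccinated"
    are counted separately. stop_words should be given in lowercase.
    """
    counts = Counter()
    for text in tweet_texts:
        text = MENTION.sub(" ", text)  # drop @usernames
        text = URL.sub(" ", text)      # drop the links themselves (assumption)
        for word in WORD.findall(text.lower()):
            if word not in stop_words:
                counts[word] += 1
    return counts.most_common(n)
```

The resulting (word, count) pairs are what a word-cloud renderer would scale and color; the rendering step itself is outside this sketch.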

Covid/Anti-Vaxx/Anti-Mask

Sixteen of the top 50 links to Gab content shared on Twitter during this time period promoted misinformation and conspiracy theories about Covid-19. An article written by Gab CEO Andrew Torba was the second most shared link in this period; it was shared nearly 1,900 times, potentially reaching nearly seven million Twitter views. Torba’s article, published in late July, shares documents that one can use to file an “‘air tight religious exemption request’ for the Covid-19 vaccine if it is mandatory for you at work, school, or in the military.” The documents, which contain misinformation about the Covid-19 vaccine, were not created by Torba but came from an Orthodox Christian Twitter account. Another document in Torba’s article appears to have come from the Solari Report, a website run by Catherine Austin Fitts, who was featured in the viral Covid-19 film “Planet Lockdown” and served as Assistant Secretary of the U.S. Department of Housing and Urban Development (HUD) during George H.W. Bush’s administration. Gab’s Twitter account promoted Torba’s article in several now-deleted tweets.

An example of a tweet sharing a link to Gab CEO Andrew Torba’s article about obtaining religious exemptions from Covid-19 vaccine mandates.

The third most shared Gab link during this time period was a Gab TV video titled “Dr. Destroys the Entire Covid Narrative.” The video shows Dr. Dan Stock’s August 7 address to the Mt. Vernon School Board in Indiana, in which he makes numerous false claims about Covid-19, such as that vaccines are causing the current surge in Covid-19 cases and that “everything being recommended by the CDC and State Board of Health are actually contrary to all the rules of science.” The video has gone viral, has been widely shared online and has been viewed more than 710K times on Gab. On Twitter, it potentially reached an estimated 3.4+ million views and was retweeted by users such as Thierry Baudet, a conservative Dutch politician, and conservative podcast host Eric Matheny, who has more than 125K Twitter followers.

Conservative podcast host Eric Matheny was one of many Twitter users who shared a link to the Gab TV video showing Dr. Dan Stock addressing an Indiana school board in early August, during which he made numerous false claims about Covid-19. The video has been shared widely across the Internet. [Note: The Gab TV channel that posted this video has been deleted.]

Other top Covid-related Gab links shared on Twitter include posts falsely claiming that George Soros (a frequent target of antisemitic conspiracy theories) owns Moderna and that a Chinese scientist was the first person to contract Covid-19 following a lab accident, as well as another viral school board meeting video and various posts from Japanese QAnon accounts promoting conspiracy theories about vaccine-related deaths and vaccine passports.

Antisemitism

Another Gab link, shared on Twitter more than 200 times during this period, led to an antisemitic video montage featuring an image that evokes the absurd blood libel trope by framing a Jewish man drinking wine so that he appears to be drinking blood. The video then cuts to a series of close-up images of prominent Jewish people such as George Soros, Senator Chuck Schumer, Amy Winehouse and Dr. Rachel Levine. The montage then displays the message: “These creatures think they are people…and superior. God’s people? Hardly!” The link was shared almost exclusively by a single Twitter account, potentially reaching nearly 300,000 Twitter views.

Japanese QAnon

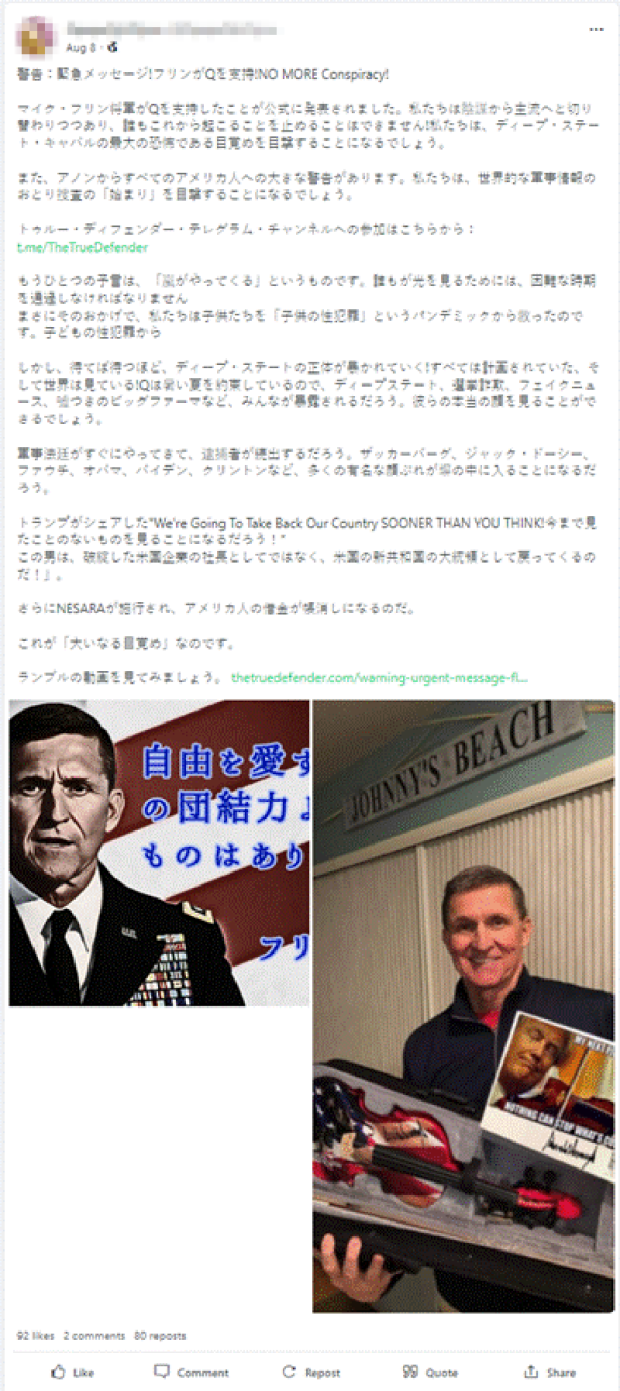

Twenty-one of the top 50 links to Gab content shared on Twitter during this time period contained conspiratorial content from Japanese-language accounts. The conspiracy theories promoted by these accounts include claims that Bill Gates has been arrested for child trafficking and that the CIA works for the New World Order, along with various forms of Covid-19 and vaccine misinformation. These links were posted and shared exclusively by Japanese-language Twitter accounts, some of which openly express support for QAnon in their Twitter feeds and biographies, while others post more general conspiratorial content that suggests support for QAnon. Collectively, the links were shared over 5,700 times on Twitter, potentially reaching an estimated 3.9+ million Twitter views.

The Gab profiles whose posts were shared on Twitter also shared links to Japanese-language QAnon resources, including a Japanese-language QAnon Telegram channel, a QAnon message board and the Gab account of EriQ, who claims to be a Japanese member of the US Q Information Special Forces, headed by Michael Flynn. As members of the U.S. Congress have noted, these accounts may be able to share misinformation on Twitter successfully because the content they promote is predominantly non-English.

This Japanese-language Gab post was shared on Twitter nearly 500 times. It claims that General Michael Flynn supports Q and warns that the “storm is coming.”

Original Gab Content (promoted by Gab)

As previously noted, the second most shared link during this time period was a Gab article by CEO Andrew Torba that provided instructions and resources for requesting a religious exemption from Covid-19 vaccine mandates. The highest-profile Twitter account that shared this link was Gab’s own verified Twitter account, which has over 390K followers. The tweets in which Gab posted this link have since been deleted, likely by Gab itself.

This is not the only example of Gab using its large Twitter following to spread misinformation and promote content that violates Twitter’s own content policies. Gab’s official Twitter account also shared an article by Torba dismissing claims that he is spreading Covid-19 and vaccine misinformation and accusing various organizations of collaborating with Facebook to attack Torba and Gab. The link to this article was shared over 230 times on Twitter, potentially reaching an estimated 2.5+ million Twitter views. [Note: The primary image on Torba’s article suggests that ADL is working with the U.S. government, Silicon Valley, and the pharmaceutical industry to persecute Christians.]

In addition, the eighth most shared Gab link during this time period was an article in which Torba accuses New York Times reporter Sheera Frenkel, ADL CEO Jonathan Greenblatt, and Facebook COO Sheryl Sandberg of creating a “totally baseless lie” that individuals were planning the January 6 insurrection on right-wing platforms like Gab and Parler. In an open letter requesting that Gab be investigated, ADL highlights that information on how to avoid the police and what tools to use to open doors was exchanged in Gab comments prior to the attack on the Capitol. The link to Torba’s article was shared over 530 times on Twitter, potentially reaching an estimated 2.9+ million Twitter views.

Other content from Gab’s Twitter account includes a link to a Gab comment section where users promoted Covid-19 and vaccine misinformation, as well as an article by Torba claiming that Christians are being persecuted by the government and big businesses because of vaccine mandates and online censorship. Combined, these links were shared nearly 300 times, potentially reaching an estimated 1.6+ million Twitter views. For unclear reasons, Gab often deletes tweets containing these links some time after posting them. At times, Twitter users even see the message “this page doesn’t exist” when they try to access Gab’s Twitter account, suggesting that Gab sometimes temporarily deletes its own account.

Wendy Rogers

The most popular piece of Gab content shared on Twitter during this time period came from a tweet by controversial Arizona Republican state senator Wendy Rogers. In this tweet, posted after Twitter suspended multiple election audit accounts on July 27, Rogers encouraged Twitter users to follow her on Gab and Telegram, two alternative social media platforms that are havens for conspiracy theorists and extremists, warning she’d “be next.” Rogers, an Air Force veteran and self-described lifetime member of the Oath Keepers, has repeatedly spread election fraud claims. Earlier this month, she tweeted, “It is time to evict Biden and decertify the 2020 Election. Stolen elections have consequences.” She also appeared on TruNews in June and July 2021 to promote the ongoing Maricopa County election audit; the “Maricopa Arizona Audit” Twitter account was one of several suspended by Twitter on July 27. Rogers’s tweet has been shared more than 4,200 times, including by Emerald Robinson, Newsmax’s White House correspondent, who has more than 393K Twitter followers; the Columbia Bugle, a popular right-wing Twitter account followed by more than 200K users; and Christina Bobb, the host of Weekly Briefing on One America News (OAN), who has more than 149K followers. Rogers’s tweet potentially reached an estimated 7.8+ million Twitter views.

Methodology

Between June 7 and August 22, 2021, COE used Twitter’s API to download, each day, tweets containing “gab.com” sent in the previous 24 hours. In this way, we gathered all tweets and retweets returned by Twitter’s API at the time of collection that linked to a URL on the gab.com domain. This means that, in addition to tweets linking to the base URL ‘gab.com’, we also captured tweets linking to individual Gab posts or to Gab user accounts being promoted on Twitter. We then counted the number of Gab-linking tweets per day, as presented in Figure 1.
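The domain filter and daily tally described above can be sketched as follows, assuming each collected tweet has already been reduced to a hypothetical record holding its posting day and its expanded URLs. This is an illustration only: it does not call Twitter’s API, and the record fields `day` and `urls` are placeholders, not the API’s own field names. Matching on the parsed hostname, rather than on the substring “gab.com”, keeps post and profile links (and subdomains such as www.gab.com) while excluding lookalike domains.

```python
from collections import Counter
from urllib.parse import urlparse


def is_gab_url(url):
    """True if the URL's host is gab.com or a subdomain such as www.gab.com."""
    host = (urlparse(url).hostname or "").lower()
    return host == "gab.com" or host.endswith(".gab.com")


def daily_gab_tweet_counts(tweets):
    """Count tweets and retweets per day that contain at least one gab.com link.

    Each tweet is a hypothetical dict:
    {"day": date or ISO date string, "urls": [str, ...]}.
    """
    counts = Counter()
    for tweet in tweets:
        if any(is_gab_url(u) for u in tweet["urls"]):
            counts[tweet["day"]] += 1
    return dict(sorted(counts.items()))  # chronological order, as in Figure 1
```

A tweet carrying several Gab links is counted once, since the tally is of Gab-linking tweets rather than of links.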

We also calculated the potential reach of Gab-linking tweets by summing, for each day, the follower counts of the Twitter accounts that sent a Gab-linking tweet or retweet, as presented in Figure 2. Note that the followers of a tweet’s original poster and of the users who retweeted it may overlap; our potential reach metric counts such overlapping followers as separate views. This methodology also would not capture tweets containing links to Gab.com that were posted and then deleted, whether by the user or by Twitter itself, within the 24 hours before each daily collection.
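The potential-reach metric behind Figure 2 amounts to a per-day sum of sender follower counts. The sketch below assumes hypothetical records with `day`, `user_id` and `followers` fields. By default it adds one follower count per tweet or retweet, which is one reading of the description above; the optional `dedupe_accounts` flag, which counts each account at most once per day, is an alternative interpretation and is not specified in the article. Neither mode removes overlapping audiences, consistent with the caveat that shared followers are counted as separate views.

```python
from collections import defaultdict


def daily_potential_reach(gab_tweets, dedupe_accounts=False):
    """Sum senders' follower counts per day for Gab-linking tweets and retweets.

    Each tweet is a hypothetical dict:
    {"day": date or ISO date string, "user_id": str, "followers": int}.
    Overlapping audiences are not removed: followers shared by two senders
    are counted once per sender. With dedupe_accounts=True (an alternative
    reading, not specified in the article), an account posting several times
    in one day contributes its follower count only once for that day.
    """
    reach = defaultdict(int)
    seen = set()  # (day, user_id) pairs already counted; consulted only when deduping
    for t in gab_tweets:
        key = (t["day"], t["user_id"])
        if dedupe_accounts and key in seen:
            continue
        seen.add(key)
        reach[t["day"]] += t["followers"]
    return dict(sorted(reach.items()))  # chronological order, as in Figure 2
```

Summing the daily values gives a period-wide total of the kind reported in the findings; because of the overlap caveat, such totals are upper bounds on distinct viewers, not counts of them.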

We then analyzed the data to identify the top 50 shared Gab links; the top five sharers for each of these links (determined by follower count, so these were the most popular sharers, not necessarily the accounts that shared the links most often); the top 50 overall sharers of Gab content (based on the total number of Gab links each user tweeted); and the potential exposure for each link (calculated by adding up the follower counts of every user who shared it). We then examined each link and its top sharers using an open-ended technique, which allowed primary content themes to emerge as we evaluated each link. For messages and websites that were not in English, we used Twitter’s built-in translation tool and Google Translate to translate them into English.
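The rankings described above can be sketched as follows, again over hypothetical records (`user`, `followers`, `gab_url`). Links are ranked by share count; each link’s top sharers are ordered by follower count rather than posting frequency; overall sharers are ranked by how many Gab links they tweeted; and exposure adds the follower count of each distinct sharer once, which is our reading of “adding up the followers for each user who shared the link.” The function name and record layout are illustrative assumptions.

```python
from collections import Counter, defaultdict


def rank_gab_links(gab_tweets, top_n=50, sharers_per_link=5):
    """Rank shared Gab links and their sharers.

    Each tweet is a hypothetical dict: {"user": str, "followers": int, "gab_url": str}.
    Returns (top_links, top_sharers_overall):
      top_links: list of (url, share_count, potential_exposure, top_sharers)
      top_sharers_overall: list of (user, number_of_gab_links_tweeted)
    """
    shares = Counter()            # tweets/retweets per Gab link
    sharers = defaultdict(dict)   # link -> {user: follower count}
    links_per_user = Counter()    # Gab-linking tweets per user
    for t in gab_tweets:
        url, user = t["gab_url"], t["user"]
        shares[url] += 1
        sharers[url][user] = t["followers"]  # latest follower count seen wins
        links_per_user[user] += 1

    top_links = []
    for url, count in shares.most_common(top_n):
        # Exposure: each distinct sharer's followers counted once for this link.
        exposure = sum(sharers[url].values())
        # Top sharers by audience size, not by how often they posted the link.
        by_followers = sorted(sharers[url].items(), key=lambda kv: kv[1], reverse=True)
        top = [user for user, _ in by_followers[:sharers_per_link]]
        top_links.append((url, count, exposure, top))

    return top_links, links_per_user.most_common(top_n)
```

Separating “most popular” sharers (by followers) from “most active” sharers (by link count) mirrors the distinction the methodology draws between the two lists.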